The latest 27B-parameter Keras model: Gemma 2

Following in the footsteps of Gemma 1.1 (Kaggle, Hugging Face), CodeGemma (Kaggle, Hugging Face) and the PaliGemma multimodal model (Kaggle, Hugging Face), we’re happy to announce the release of the Gemma 2 model in Keras.

Gemma 2 is available in two sizes, 9B and 27B parameters, with standard and instruction-tuned variants. You can find them here:

Gemma 2’s top-notch results on LLM benchmarks are covered elsewhere (see goo.gle/gemma2report). In this post we would like to showcase how the combination of Keras and JAX can help you work with these large models.

JAX is a numerical framework built for scale. It leverages the XLA machine learning compiler and trains the largest models at Google.

Keras is the modeling framework for ML engineers, now running on JAX, TensorFlow or PyTorch. Keras now brings the power of model parallel scaling through a pleasant Keras API. You can try the new Gemma 2 models in Keras here:

Distributed fine-tuning on TPUs/GPUs with ModelParallelism

Because of their size, these models can only be loaded and fine-tuned at full precision by splitting their weights across multiple accelerators. JAX and XLA have extensive support for weights partitioning (SPMD model parallelism) and Keras adds the keras.distribution.ModelParallel API to help you specify shardings layer by layer in a simple way:

import keras
import keras_nlp

# List accelerators
devices = keras.distribution.list_devices()

# Arrange accelerators in a logical grid with named axes
device_mesh = keras.distribution.DeviceMesh((2, 8), ["batch", "model"], devices)

# Tell XLA how to partition weights (defaults for Gemma).
# Called on the backbone class because the model is not loaded yet.
layout_map = keras_nlp.models.GemmaBackbone.get_layout_map(device_mesh)

# Define a ModelParallel distribution
model_parallel = keras.distribution.ModelParallel(device_mesh, layout_map, batch_dim_name="batch")

# Set it as the default and load the model
keras.distribution.set_distribution(model_parallel)
gemma2_lm = keras_nlp.models.GemmaCausalLM.from_preset(...)

The backbone's get_layout_map() function is a helper that returns a layer-by-layer sharding configuration for all the weights of the model. It follows the recommendations of the Gemma paper (goo.gle/gemma2report). Here is an excerpt:

layout_map = keras.distribution.LayoutMap(device_mesh)
layout_map["token_embedding/embeddings"] = ("model", "data")
layout_map["decoder_block.*attention.*(query|key|value).kernel"] = ("model", "data", None)
layout_map["decoder_block.*attention_output.kernel"] = ("model", None, "data")

...

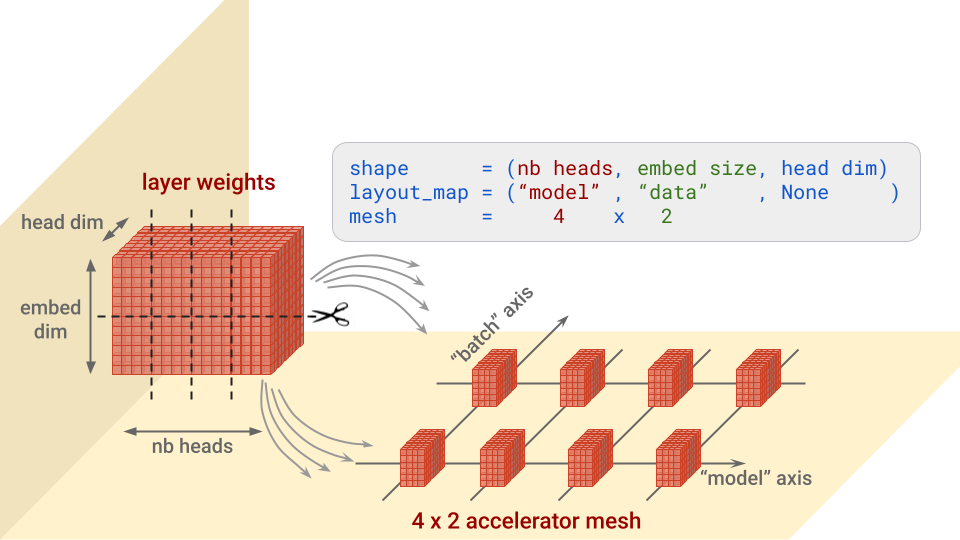

In a nutshell, for each layer, this config specifies along which axis or axes to split each block of weights, and on which accelerators to place the pieces. It’s easier to understand with a picture. Let’s take as an example the “query” weights in the Transformer attention architecture, which are of shape (nb heads, embed size, head dim):

Weight partitioning example for the query (or key or value) weights in the Transformer attention architecture.

Note: mesh dimensions for which there are no splits will receive copies. This would be the case, for example, if the layout map above was (“model”, None, None).
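To make the splitting rule concrete, here is a minimal pure-Python sketch that computes the per-device shard shape and the number of copies for a weight, given a mesh and a layout. The helper name `shard_shape` and the dictionary form of the mesh are hypothetical and only for illustration; they are not part of the Keras API. The sketch also assumes every split dimension divides evenly across its mesh axis.

```python
from math import prod


def shard_shape(weight_shape, layout, mesh):
    """Return (per-device shard shape, number of copies) for one weight.

    weight_shape: full shape of the weight, e.g. (16, 3072, 256)
    layout: one mesh axis name (or None) per weight dimension
    mesh: dict mapping mesh axis name -> number of devices along that axis
    (Hypothetical helper for illustration, not a Keras function.)
    """
    if len(weight_shape) != len(layout):
        raise ValueError("layout needs one entry per weight dimension")
    shard = []
    for dim, axis in zip(weight_shape, layout):
        if axis is None:
            # Not split: every device keeps this dimension whole.
            shard.append(dim)
        else:
            if dim % mesh[axis] != 0:
                raise ValueError(f"{dim} is not divisible by mesh axis {axis!r}")
            shard.append(dim // mesh[axis])
    # Mesh axes that the layout does not use receive copies of each shard.
    used = {axis for axis in layout if axis is not None}
    copies = prod(size for name, size in mesh.items() if name not in used)
    return tuple(shard), copies


mesh = {"batch": 2, "model": 8}  # the (2, 8) mesh used in this post
query_shape = (16, 3072, 256)    # (nb heads, embed size, head dim)

# Split the heads over "model" only: the "batch" axis gets copies.
print(shard_shape(query_shape, ("model", None, None), mesh))
# Split over both mesh axes: no copies are needed.
print(shard_shape(query_shape, ("model", "batch", None), mesh))
```

With the first layout each accelerator holds 2 of the 16 heads and the shard exists twice, once per row of the mesh. With the second layout every accelerator holds a distinct piece.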

Notice also the batch_dim_name="batch" parameter in ModelParallel. If the “batch” axis has multiple rows of accelerators on it, which is the case here, data parallelism will also be used. Each row of accelerators will load and train on only a part of each data batch, and then the rows will combine their gradients.
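The data-parallel step can be illustrated with a toy example. The sketch below is plain Python, not Keras code: it assumes a one-parameter linear model, a mean squared error loss, equal-sized batch shards, and that "combining" means averaging. Under those assumptions, the average of the per-row gradients equals the gradient computed on the whole batch.

```python
def mse_gradient(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to the scalar weight w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


w = 0.5
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

# Two "rows" of accelerators, each one seeing only half of the batch.
rows = [(xs[:2], ys[:2]), (xs[2:], ys[2:])]
row_grads = [mse_gradient(w, row_xs, row_ys) for row_xs, row_ys in rows]

# Combining step (assumed here to be a plain average of the row gradients).
combined = sum(row_grads) / len(row_grads)

# Reference: gradient computed on the full batch in one go.
full = mse_gradient(w, xs, ys)

print(row_grads, combined, full)
```

Each row only ever touches its own half of the data, yet the combined update matches what a single device would have computed on the full batch.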

Once the model is loaded, here are two handy code snippets to display the weight shardings that were actually applied:

# Print path, shape and sharding spec for the weights of one decoder block
for variable in gemma2_lm.backbone.get_layer('decoder_block_1').weights:
    print(f'{variable.path}  {variable.shape}  {variable.value.sharding.spec}')

#... set an optimizer through gemma2_lm.compile() and then:
gemma2_lm.optimizer.build(gemma2_lm.trainable_variables)

# Print the same information for the optimizer variables
for variable in gemma2_lm.optimizer.variables:
    print(f'{variable.path}  {variable.shape}  {variable.value.sharding.spec}')

And if we look at the output (below), we notice something important: the regexes in the layout spec matched not only the layer weights, but also their corresponding momentum and velocity variables in the optimizer and sharded them appropriately. This is an important point to check when partitioning a model.

# for layers:
# weight name . . . . . . . . . . shape . . . . . . layout spec
decoder_block_1/attention/query/kernel (16, 3072, 256) PartitionSpec('model', None, None)
decoder_block_1/ffw_gating/kernel (3072, 24576) PartitionSpec(None, 'model')
...

# for optimizer vars:
# var name . . . . . . . . . . . . shape . . . . . . layout spec
adamw/decoder_block_1_attention_query_kernel_momentum (16, 3072, 256) PartitionSpec('model', None, None)
adamw/decoder_block_1_attention_query_kernel_velocity (16, 3072, 256) PartitionSpec('model', None, None)
...
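Why the same regexes catch the optimizer variables can be checked with Python's `re` module alone. The sketch below reuses two patterns from the layout map excerpt and the variable paths from the output above. The `lookup_layout` helper is hypothetical, and it assumes the keys are matched anywhere in the path (`re.search` semantics) rather than only at the start.

```python
import re

# Patterns copied from the layout map excerpt in this post.
patterns = {
    r"decoder_block.*attention.*(query|key|value).kernel": ("model", "data", None),
    r"decoder_block.*attention_output.kernel": ("model", None, "data"),
}


def lookup_layout(variable_path):
    """Return the layout of the first pattern found anywhere in the path.

    Hypothetical helper: it mimics a regex-keyed lookup and is not Keras code.
    """
    for pattern, layout in patterns.items():
        if re.search(pattern, variable_path):
            return layout
    return None  # no rule applies to this variable


paths = [
    "decoder_block_1/attention/query/kernel",
    "adamw/decoder_block_1_attention_query_kernel_momentum",
    "adamw/decoder_block_1_attention_query_kernel_velocity",
    "decoder_block_1/ffw_gating/kernel",
]
for path in paths:
    print(path, "->", lookup_layout(path))
```

The `.` in each pattern matches both the `/` separators of the layer paths and the `_` separators of the optimizer variable names, which is why one rule covers a weight and its momentum and velocity. The last path gets no layout here only because the excerpt omits the feed-forward rules.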

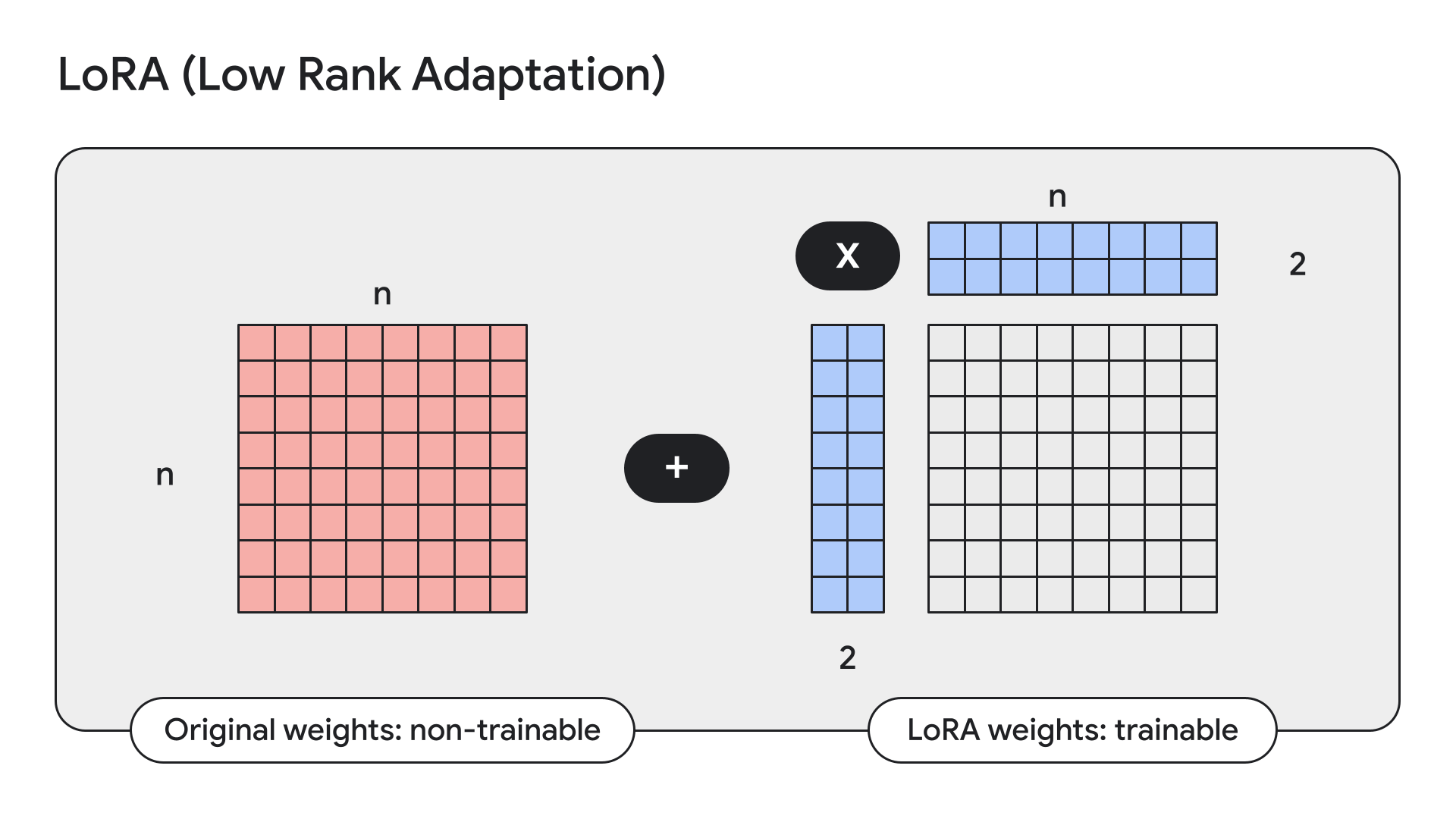

Training on limited HW with LoRA

LoRA is a technique that freezes the model weights and instead trains low-rank, i.e. small, adapters.

Keras also has straightforward APIs for this:

gemma2_lm.backbone.enable_lora(rank=4) # Rank picked from empirical testing

Displaying model details with model.summary() after enabling LoRA, we can see that LoRA reduces the number of trainable parameters in Gemma 9B from 9 billion to 14.5 million.
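The size of that reduction follows from simple arithmetic, sketched below for a single weight. The sketch assumes the standard LoRA formulation, where a frozen kernel of shape (d_in, d_out) gets two trainable matrices of shapes (d_in, rank) and (rank, d_out). It also assumes the (16, 3072, 256) query kernel shown earlier is treated as a 3072 by 16 * 256 matrix. Which layers Keras actually adapts, and how it reshapes them, is not covered here, so this does not reproduce the 14.5 million total.

```python
def lora_param_count(d_in, d_out, rank):
    """Trainable parameters of one adapter pair: A is (d_in, rank), B is (rank, d_out)."""
    return rank * (d_in + d_out)


d_in = 3072       # embed size of the query kernel shown earlier
d_out = 16 * 256  # nb heads * head dim, flattened (an assumption of this sketch)
rank = 4          # same rank as in the enable_lora call

frozen = d_in * d_out                            # parameters of the full kernel
trainable = lora_param_count(d_in, d_out, rank)  # parameters of its adapters

print(f"frozen: {frozen:,}  trainable: {trainable:,}")
```

For this one kernel the adapters hold 28,672 trainable parameters against 12,582,912 frozen ones, which is the kind of ratio that makes fine-tuning feasible on limited hardware.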

An update from Hugging Face

Last month, we announced that Keras models would be available, for download and user uploads, on both Kaggle and Hugging Face. Today, we’re pushing the Hugging Face integration even further: you can now load any fine-tuned weights for supported models, whether they have been trained using a Keras version of the model or not. Weights will be converted on the fly to make this work. This means that you now have access to the dozens of Gemma fine-tunes uploaded by Hugging Face users, directly from KerasNLP. And not just Gemma. This will eventually work for any Hugging Face Transformers model that has a corresponding KerasNLP implementation. For now Gemma and Llama3 work. You can try it out on the Hermes-2-Pro-Llama-3-8B fine-tune for example using this Colab:

causal_lm = keras_nlp.models.Llama3CausalLM.from_preset(
    "hf://NousResearch/Hermes-2-Pro-Llama-3-8B"
)

Explore PaliGemma with Keras 3

PaliGemma is a powerful open VLM inspired by PaLI-3. Built on open components including the SigLIP vision model and the Gemma language model, PaliGemma is designed for class-leading fine-tune performance on a wide range of vision-language tasks. This includes image captioning, visual question answering, understanding text in images, object detection, and object segmentation.

You can find the Keras implementation of PaliGemma on GitHub, Hugging Face models and Kaggle.

We hope you’ll enjoy experimenting or building with the new Gemma 2 models in Keras!