We've covered a lot of ground in this series so far. If you are looking to get started with Semantic Kernel, I highly recommend starting with Part 1. In this AI developer series, I write articles on AI developer tools and frameworks and provide working GitHub samples at the end of each one. We've already experimented with AI Agents, Personas, Planners, and Plugins. One common theme so far has been that we have used an OpenAI GPT-4o model deployed in Azure to do all our work. Now it's time for us to pivot and start using local models such as Phi-3 in Semantic Kernel so that we can build AI automation systems without any cloud dependencies. This would also be a favorite solution for cyber security teams, as they don't need to worry about sensitive data leaving the organization's network. But I believe the happiest would be the indie hackers who want to save on cost and still have an effective language model that they can use without worrying about token cost and tokens-per-minute limits.

What Is a Small Language Model?

Small language models (SLMs) are increasingly becoming the go-to choice for developers who need robust AI capabilities without the baggage of heavy cloud dependency. These nimble models are designed to run locally, providing control and privacy that large-scale cloud models can't match. SLMs like Phi-3 offer a sweet spot: they're compact enough to run on everyday hardware but still pack enough punch to handle a range of AI tasks, from summarizing documents to powering conversational agents. The appeal? It's all about balancing power, performance, and privacy. By keeping data processing on your local machine, SLMs help mitigate privacy concerns, making them a natural fit for sensitive sectors like healthcare, finance, and legal, where every byte of data matters.

Open-source availability is another reason why SLMs are catching fire in the developer community. Models like LLaMA are free to tweak, fine-tune, and integrate, allowing developers to mold them to fit specific needs. This level of customization means you can get the exact behavior you want without waiting for updates or approvals from big tech vendors. The pros are clear: faster response times, better data security, and unparalleled control. But it's not all smooth sailing; SLMs do have their challenges. Their smaller size can mean less contextual understanding and a limited knowledge base, which might leave them trailing behind larger models in complex scenarios. They're also resource-hungry: running these models locally can strain your CPU and memory, making performance optimization a must.

Despite these trade-offs, SLMs are gaining traction, especially among startups and individual developers who want to leverage AI without the cloud's costs and constraints. Their rising popularity is a testament to their practicality: they offer a hands-on, DIY approach to AI that is democratizing the field, one local model at a time. Whether you're building secure, offline applications or simply exploring the AI frontier from your own device, small language models are redefining what's possible, giving developers the tools to build smarter, faster, and with more control than ever before.

I know this is a long introduction. But here is the gist of what you need to know about SLMs:

- Small: Phi-3 mini, for example, is just over 2GB.

- Offline: It can run on your laptop without the need for the internet (offline apps).

- Secure: Your data, documents, etc., are not traveling over the wire.

- Open source, mostly.

- Control: If necessary, fine-tuning can be done locally. Developers like the feeling of being in control, don't they?

- You pay nothing (my favorite). Of course, you need hardware, but that's about it. You don't have to pay for tokens sent or received.

What Do SLMs Like Phi-3 Have To Do with Semantic Kernel and Ollama?

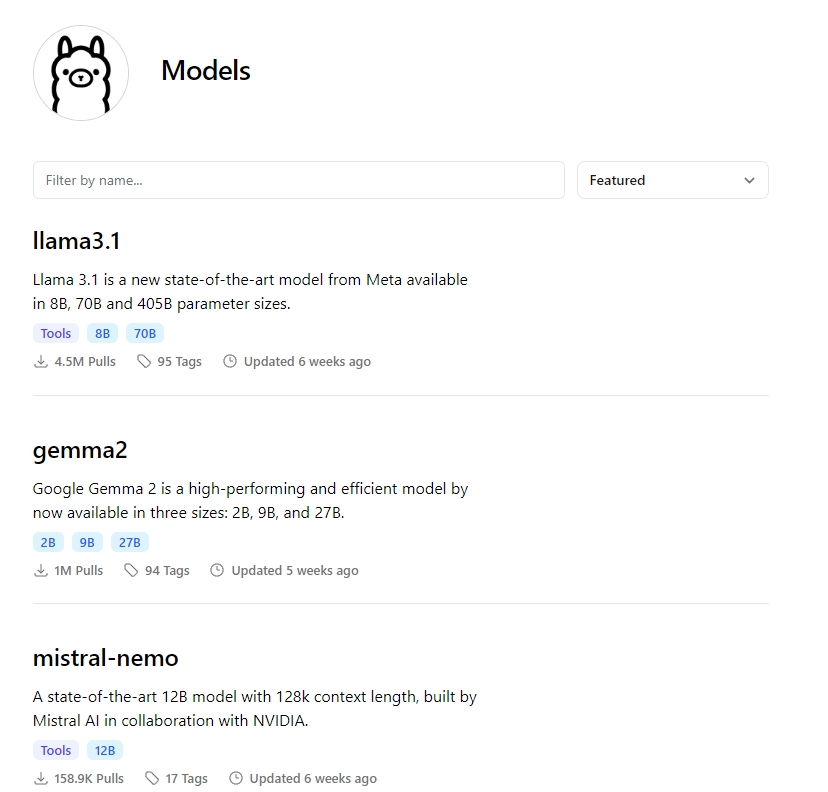

Semantic Kernel doesn't care about the model underneath. It can talk to a local Phi-3 with the same enthusiasm as it talks to an Azure-hosted GPT-4o. Although we'll focus on Phi-3 in this article, you can replace it with any of the models available in Ollama's model library.

Think of Ollama as a Docker for AI models, designed to simplify the download, management, and deployment of SLMs. With a simple command-line interface, Ollama lets you pull models and get them running in minutes, giving you full control over your data and environment, with far fewer privacy worries and no network round trips to a cloud service. This tool is a game-changer for developers who need fast, secure, and cost-effective AI solutions, whether you're building offline apps, experimenting with new models, or working in data-sensitive fields. Ollama makes it easy to keep AI close to home, where it belongs.

Enough talk. Let's write some code. Before that, let's take a few small steps to get Ollama up and running on our machine.

Set Up Phi-3 With Ollama

1. Download Ollama

Head over to the Ollama website and download the latest version suitable for your operating system.

2. Install Ollama

Follow the installation instructions for your OS (Windows, macOS, or Linux). Once installed, open your terminal and start Ollama with:

ollama serve

3. Download the Phi-3 Model

With Ollama installed, pull the Phi-3 model using the following command:

ollama pull phi3

4. Run Phi-3

Begin the Phi-3 mannequin in your native machine with:

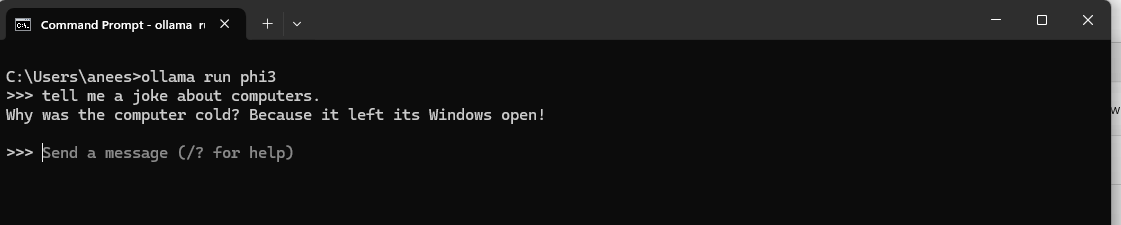

ollama run phi3

5. Test Phi-3

Ask it something at the prompt.

6. It Works

We have AI on our computer. Cool!

This setup makes Phi-3 ready to serve as a local language model, waiting for instructions from applications like Semantic Kernel.
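If you would rather confirm from code that Ollama is up before wiring in Semantic Kernel, here is a minimal sketch. It assumes Ollama is listening on its default address, http://localhost:11434, and uses Ollama's /api/tags endpoint, which lists the models pulled locally. ParseModelNames is a hypothetical helper written for this illustration, not part of any library.

```csharp
using System;
using System.Collections.Generic;
using System.Net.Http;
using System.Text.Json;

// Assumption: Ollama is listening on its default local address.
using var http = new HttpClient { BaseAddress = new Uri("http://localhost:11434") };

try
{
    // GET /api/tags returns the models that have been pulled locally.
    var json = await http.GetStringAsync("/api/tags");
    foreach (var name in ParseModelNames(json))
    {
        Console.WriteLine(name);
    }
}
catch (HttpRequestException ex)
{
    // Usually means `ollama serve` is not running or uses another port.
    Console.WriteLine($"Could not reach Ollama: {ex.Message}");
}

// Hypothetical helper: collects the "name" of each entry in the "models" array.
static List<string> ParseModelNames(string json)
{
    using var doc = JsonDocument.Parse(json);
    var names = new List<string>();
    if (doc.RootElement.TryGetProperty("models", out var models)
        && models.ValueKind == JsonValueKind.Array)
    {
        foreach (var model in models.EnumerateArray())
        {
            if (model.TryGetProperty("name", out var name))
            {
                names.Add(name.GetString() ?? string.Empty);
            }
        }
    }
    return names;
}
```

If phi3 shows up in the printed list, the model is pulled and the server is reachable from your application.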

Let's Connect Our Local Phi-3 With Semantic Kernel

Now that we have downloaded Phi-3 and it's available on our computer, it's time to talk to it using Semantic Kernel. As usual, let's start with the code first.

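using Microsoft.SemanticKernel;

// The custom-endpoint overload of AddOpenAIChatCompletion is experimental.
#pragma warning disable SKEXP0010

var builder = Kernel.CreateBuilder();

// Point the OpenAI connector at the local Ollama server; no API key is needed.
builder.AddOpenAIChatCompletion(
    modelId: "phi3",
    apiKey: null,
    endpoint: new Uri("http://localhost:11434")
);

var kernel = builder.Build();

Console.Write("User: ");
var input = Console.ReadLine() ?? string.Empty;

// Send the prompt to Phi-3 and print the reply.
var response = await kernel.InvokePromptAsync(input);
Console.WriteLine(response.GetValue<string>());
Console.WriteLine("------------------------------------------------------------------------");

// Keep the console window open until Enter is pressed.
Console.ReadLine();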

Where is the apiKey? Surprise: there is none. We're just hooking up with a local model, so we don't need a cloud API or an API key. What is the localhost:11434 endpoint, then? Ollama is running on this port, and our local models (yes, more than one if we downloaded and installed more) are served from here. Can we get rid of localhost and just run the local model from a file? Yes, we can. We will look into this in another part of this series.

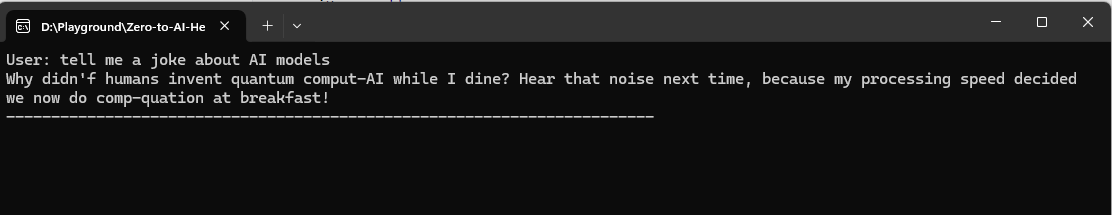

Let’s run our code and see what occurs.

I didn't get Phi-3's joke (bad joke?), but you get the idea. Semantic Kernel was able to use the local SLM we put in place to do some ChatGPT-style stuff. Cool, isn't it?
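Since Ollama serves every pulled model from the same port, switching models is only a matter of changing modelId. The sketch below is one way to make that configurable. The LOCAL_MODEL_ID environment variable and the ResolveModelId helper are hypothetical names invented for this example, and it reuses the endpoint from the code above as-is (depending on your Semantic Kernel package version, you may need to adjust the endpoint path).

```csharp
using System;
using Microsoft.SemanticKernel;

#pragma warning disable SKEXP0010

// Hypothetical environment variable; falls back to the article's model.
var modelId = ResolveModelId(Environment.GetEnvironmentVariable("LOCAL_MODEL_ID"));

var builder = Kernel.CreateBuilder();
builder.AddOpenAIChatCompletion(
    modelId: modelId,   // must already be pulled, e.g. `ollama pull <model>`
    apiKey: null,
    endpoint: new Uri("http://localhost:11434"));
var kernel = builder.Build();

var response = await kernel.InvokePromptAsync("Introduce yourself in one sentence.");
Console.WriteLine($"[{modelId}] {response.GetValue<string>()}");

// Hypothetical helper: use the configured model name, or default to phi3.
static string ResolveModelId(string? configured) =>
    string.IsNullOrWhiteSpace(configured) ? "phi3" : configured.Trim();
```

With this in place, nothing else in the program has to change when you try a different local model.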

Though we covered a lot of ground on small language models and their local availability, we didn't make our model do much compared with our trip planner. That's because function calling is not yet available on Phi-3 (as of this writing); hopefully, it will be added in the future. Does this mean that we are limited? No. We can choose models like llama3.1 or mistral-nemo from the Ollama library, which support tools (in other words, function calling). However, these are a bit heavier than Phi-3 mini and weigh in at around 5GB or more.
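To give a feel for what that could look like, here is a hedged sketch of function calling against a tool-capable local model. It assumes llama3.1 has been pulled with Ollama and uses the ToolCallBehavior setting from Semantic Kernel's OpenAI connector. TripPlugin and EstimateDriveMinutes are hypothetical names made up for this illustration. Whether the model actually decides to call the function depends on the model and on your Ollama and Semantic Kernel versions, so treat this as a starting point rather than a guaranteed recipe.

```csharp
using System;
using System.ComponentModel;
using Microsoft.SemanticKernel;
using Microsoft.SemanticKernel.Connectors.OpenAI;

#pragma warning disable SKEXP0010

var builder = Kernel.CreateBuilder();
builder.AddOpenAIChatCompletion(
    modelId: "llama3.1",   // assumes `ollama pull llama3.1` was run beforehand
    apiKey: null,
    endpoint: new Uri("http://localhost:11434"));

// Register the hypothetical plugin so the model can see it as a tool.
builder.Plugins.AddFromType<TripPlugin>();
var kernel = builder.Build();

// Ask Semantic Kernel to advertise the functions and invoke them automatically.
var settings = new OpenAIPromptExecutionSettings
{
    ToolCallBehavior = ToolCallBehavior.AutoInvokeKernelFunctions
};

var response = await kernel.InvokePromptAsync(
    "Roughly how long is a 120 km drive at an average speed of 60 km/h?",
    new KernelArguments(settings));
Console.WriteLine(response.GetValue<string>());

// Hypothetical plugin for illustration only.
public sealed class TripPlugin
{
    [KernelFunction, Description("Estimates driving time in minutes for a distance and average speed.")]
    public int EstimateDriveMinutes(
        [Description("Distance in kilometers")] double distanceKm,
        [Description("Average speed in kilometers per hour")] double averageSpeedKmh)
        => (int)Math.Round(distanceKm / averageSpeedKmh * 60);
}
```

The plugin itself is plain C#, so it can be unit tested without any model running; only the prompt invocation needs Ollama.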

As with other articles in the series, I have provided a working sample, which you can find on GitHub. You can clone it, and it should run seamlessly after you set up Ollama and Phi-3 as described in this article.

Wrap Up

In this article, we explored the exciting intersection of Phi-3 and Semantic Kernel, highlighting how local language models are becoming essential tools in the AI toolkit. Integrating these models with Semantic Kernel opens new avenues for creating secure, high-performance applications that keep your data private and your AI flexible. As the AI landscape continues shifting toward local, privacy-first solutions, tools like Phi-3 and Semantic Kernel are paving the way for developers to build more innovative, responsive applications.

What's Next?

We've covered a lot of ground with Semantic Kernel but never explored beyond console apps. It's time to build an SK solution as an ASP.NET Core API, callable from a front-end app, and use Semantic Kernel to answer users' questions and leave them in awe! Also, since we have started with local models, it would be good to try building agents with a local model that supports tools and plan our day trip without incurring token costs. On another note, we forgot to talk about LM Studio, another powerful tool we should explore if we want to travel further down the local model path. You're in for a treat as we continue this developer's AI hero journey.