This article analyzes the correlation between block sizes and their impact on storage performance. It covers definitions of structured and unstructured data, how various storage segments react to block size changes, and the differences between I/O-driven and throughput-driven workloads. It also highlights the calculation of throughput and the choice of storage product based on workload type.

Block Size and Its Significance

In computing, a physical record or data storage block is a sequence of bits or bytes referred to as a block. The amount of data processed or transferred in a single block within a system or storage device is referred to as the block size. It is one of the deciding factors for storage performance. Block size is a crucial element in performance benchmarking for storage products and in categorizing the products into block, file, and object segments.

Structured vs Unstructured Data

Structured data is organized in a standardized format, typically in tables with rows and columns, making it easy for humans and software to access. It is usually quantitative data, meaning it can be counted or measured, and can include data types like numbers, short text, and dates. Structured data is ideal for analysis and can be combined with other data sets for storage in a relational database.

Unstructured data simply refers to datasets (typically large collections of files) that are not stored in a structured database format. Unstructured data has an internal structure, but it is not predefined through data models. It might be human-generated or machine-generated, in a textual or a non-textual format. (Source)

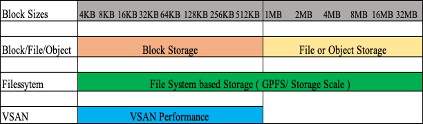

Usually, the block size of structured data is in the range of 4 KB to 128 KB, and in some cases it can go up to 512 KB. In contrast, the block size for unstructured data ranges much higher and can easily be in the MB range, as shown in Figure 1.

Figure 1: The block size for structured vs unstructured data

OLTP, or online transaction processing, is a type of data processing that consists of executing a number of transactions occurring concurrently, such as online banking, shopping, order entry, or sending text messages. OLAP, or online analytical processing, is a software technology you can use to analyze business data from different points of view. Organizations collect and store data from multiple data sources, such as websites, applications, smart meters, and internal systems. (Source)

Most OLTP workloads follow structured data patterns and most OLAP workloads follow unstructured data patterns, and the key difference between them is the block size.

Throughput/IOPS Formula Using Block Size

Storage throughput (also called data transfer rate) measures the amount of data transferred to and from the storage device per second. Usually, throughput is measured in MB/s. Throughput is closely related to IOPS and block size.

IOPS (input/output operations per second) is the standard unit of measurement for the maximum number of reads/writes to noncontiguous storage locations. Here is the formula relating IOPS and throughput:

MBps = (IOPS * KB per IO) / 1024

or

IOPS = (MBps / KB per IO) * 1024

In the above formula, KB per IO is the block size. Hence, each workload is I/O-driven or throughput-driven depending on the block size. If the IOPS numbers are higher for a workload, it typically means that the block size is smaller, and if the throughput numbers are higher, the block size is on the larger side.
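The relation above can be checked with a few lines of Python. This is a minimal sketch: the function names and the two sample workloads (50,000 IOPS at 8 KB and 500 IOPS at 1 MB) are illustrative assumptions, not measurements from any storage product.

```python
def throughput_mbps(iops: float, block_size_kb: float) -> float:
    """MBps = (IOPS * KB per IO) / 1024"""
    return iops * block_size_kb / 1024


def iops_from_throughput(mbps: float, block_size_kb: float) -> float:
    """IOPS = (MBps / KB per IO) * 1024"""
    if block_size_kb <= 0:
        raise ValueError("block size must be positive")
    return mbps / block_size_kb * 1024


# Hypothetical small-block workload: many operations, modest throughput.
print(throughput_mbps(iops=50_000, block_size_kb=8))      # 390.625

# Hypothetical large-block workload: few operations, higher throughput.
print(throughput_mbps(iops=500, block_size_kb=1024))      # 500.0

# The inverse form recovers the IOPS figure.
print(iops_from_throughput(mbps=500, block_size_kb=1024))  # 500.0
```

In this example the second workload issues 100 times fewer operations per second than the first, yet moves more data, which is why small-block workloads are usually described by IOPS and large-block workloads by throughput.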

Storage Performance Based on Block Sizes

Storage technologies respond differently depending on block size, and hence there will be different storage recommendations based on the block size and response time. Block storage is more suitable for applications with smaller block sizes, while file-level and object storage are more suitable for larger block sizes.

Figure 2: Storage technology and its range based on block sizes

As shown in Figure 2, block storage has been the choice for production workloads with smaller block sizes, and these applications have higher IOPS limits. Each block storage release note contains the performance numbers for the number of IOPS each storage box can achieve. At the same time, file-level storage or any NFS storage is more suitable for larger block sizes, larger than 1 MB.

Object storage, which is a comparatively new offering in the market, was introduced for storing files and folders across multiple sites and has a performance range like NFS.

Object storage needs load balancers to distribute the chunks across the storage systems, which also helps in boosting performance. Both NFS and object storage have high response times compared to block storage because the I/O has to go through a network to reach the disk and back to complete the I/O cycle. The average response time for NFS and object storage is in the range of 10+ milliseconds.

Filesystem storage can cater to a larger range of block sizes. The architecture of filesystem storage can be tuned to handle maximum block-size striping and improve overall performance. Generally, filesystem storage is used in the implementation of data lakes, analytical workloads, and high-performance computing (HPC). Most filesystem storage also uses agent-level software installed on the servers for better data distribution and improved performance over the network.

An InfiniBand setup is preferred with filesystem storage for large-scale deployments of data lakes or HPC systems where the workload is throughput-driven and massive data ingest is expected in a short period.

VSAN was introduced as a block storage offering for VMware workloads and has been very successful across OLTP workloads. In the recent past, VSAN has been used for workloads with larger block sizes as well, especially for backup workloads where response time requirements are not critical. What works in favor of VSAN is its improved architecture and cluster sizing, which help overall performance.

Workloads, Block Size, and Suitable Storage

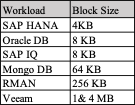

Since storage products have different performance levels for various block sizes, how do we choose the storage based on the block size of the workload? Here are a few such examples:

Figure 3: Workloads and their respective block sizes

In the table above, workloads and their respective block sizes are listed as examples. This figure helps in choosing the right kind of storage product based on the workload block size and overall performance requirements.

For block sizes that are less than 256 KB, most block storage would perform well, regardless of the vendor, because the block storage architecture is best suited to small block-size workloads. Similarly, larger block-size workloads such as RMAN or Veeam backup software are better suited to NFS or object storage, as these are throughput-driven workloads. Other design parameters, such as throughput requirements, total capacity, and read/write percentage, also help in sizing the solution.
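The rule of thumb in the preceding paragraph can be written as a small helper. This is only a sketch under stated assumptions: the 256 KB cutoff comes from the text, while the function name, the returned labels, and the sample block sizes are hypothetical. A real sizing exercise would also weigh throughput, capacity, and read/write mix.

```python
# Cutoff taken from the text: below 256 KB, block storage generally performs well.
BLOCK_STORAGE_CUTOFF_KB = 256


def suggest_storage(block_size_kb: float) -> str:
    """Return a first-pass storage segment for a workload's typical block size."""
    if block_size_kb <= 0:
        raise ValueError("block size must be positive")
    if block_size_kb < BLOCK_STORAGE_CUTOFF_KB:
        return "block"  # small blocks, I/O-driven
    return "file (NFS) or object"  # large blocks, throughput-driven


# Illustrative block sizes only; these are assumptions, not vendor figures.
sample_workloads_kb = {
    "transactional database": 8,
    "backup stream": 1024,
}

for name, size_kb in sample_workloads_kb.items():
    print(f"{name}: {size_kb} KB -> {suggest_storage(size_kb)}")
```

The helper returns only a starting point. Workloads near the cutoff deserve a closer look at response-time requirements before a segment is chosen.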

Final Thoughts

It is hoped that this study will help IT engineers and architects design their setups based on the nature of the application workload and block sizes.