A specter is haunting modern development: as our architecture has matured, developer velocity has slowed. A major cause of lost developer velocity is a decline in testing speed, accuracy, and reliability. Duplicating environments for microservices has become a common practice in the quest for consistent testing and production setups. However, this approach often incurs significant infrastructure costs that can hurt both budget and efficiency.

Testing services in isolation usually isn’t effective; we want to test these components together.

High Costs of Environment Duplication

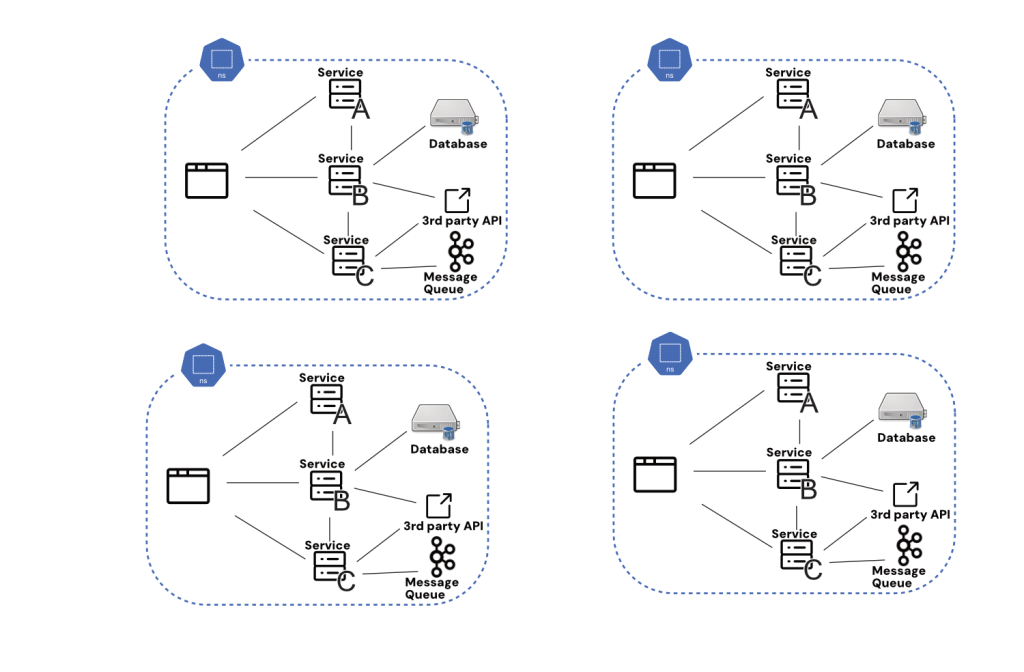

Duplicating environments means replicating entire setups, including all microservices, databases, and external dependencies. This approach has the advantage of being technically quite simple, at least at first glance. Starting with something like a namespace, we can use modern container orchestration to copy services and configuration wholesale.

The problem, however, lies in the actual implementation.

For example, a major FinTech company was reported to have spent over $2 million annually on cloud costs alone. The company spun up many environments for previewing changes and for developers to test them, each mirroring its production setup. The costs included server provisioning, storage, and network configuration, all of which added up considerably. Every team needed its own replica and expected it to be available most of the time. Further, nobody wanted to wait through long startup times, so eventually all these environments were running 24/7, racking up hosting costs the whole time.
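A back-of-the-envelope model shows why this adds up: the bill grows with the number of replicas, the number of services copied into each one, and the hours each replica stays up. The sketch below is a hypothetical estimate, not the company’s actual accounting; the class name, the flat per-service hourly rate, and every number in it are made-up assumptions.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # average hours in a month (8,760 hours / 12)


@dataclass
class EnvironmentFleet:
    """Hypothetical inputs for a rough cost estimate; all values are assumptions."""
    environments: int               # e.g. one full replica per team
    services_per_env: int           # every microservice is copied into each replica
    hourly_cost_per_service: float  # assumed blended compute/storage/network cost in USD
    hours_running_per_month: float  # HOURS_PER_MONTH means "left running 24/7"


def monthly_cost(fleet: EnvironmentFleet) -> float:
    """Cost scales with replicas x services x hours actually running."""
    return (fleet.environments
            * fleet.services_per_env
            * fleet.hourly_cost_per_service
            * fleet.hours_running_per_month)


if __name__ == "__main__":
    always_on = EnvironmentFleet(10, 40, 0.05, HOURS_PER_MONTH)
    office_hours = EnvironmentFleet(10, 40, 0.05, 10 * 22)  # 10 h/day, 22 workdays
    print(f"24/7:         ${monthly_cost(always_on):,.0f}/month")
    print(f"office hours: ${monthly_cost(office_hours):,.0f}/month")
```

With these invented inputs, the always-on fleet comes to $14,600 a month against $4,400 for office hours only. The shape matters more than the figures: every new team adds a full replica, and every new service adds cost to every replica at once.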

While namespacing seems like a clever solution to environment replication, it simply inherits the same complexity and cost problems as replicating environments wholesale.

Synchronization Problems

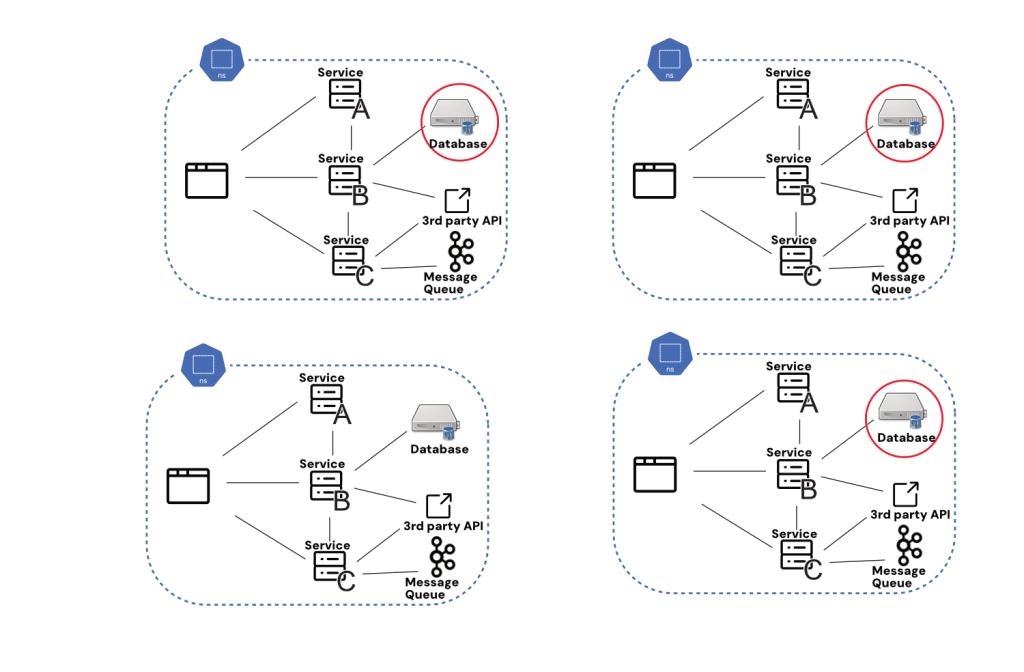

Synchronization is one problem that rears its head when trying to implement replicated testing environments at scale. Put simply: across all internal services, how sure are we that each replicated environment is running the most up-to-date version of every service? This sounds like an edge case or a minor concern until we remember that the whole point of this setup was to make testing highly accurate. Finding out only when pushing to production that recent updates to Service C have broken my changes to Service B is more than frustrating; it calls the whole process into question.

Again, there appear to be technical solutions to this problem: why don’t we just grab the most recent version of each service at startup? The issue here is the impact on velocity. If we have to wait for a whole clone to be pulled, configured, and then started every time we want to test, we’re quickly talking about many minutes or even hours of waiting before our supposedly isolated replica testing environment is ready to use.
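Short of re-cloning everything at startup, a cheaper first step is simply to detect drift: compare what each replica is running against the latest released version of every service. The sketch below assumes the version maps come from your deploy tooling or registry; the function name, data shapes, and service names are hypothetical.

```python
from typing import Dict, List, Tuple

# service name -> version string; in practice these maps would come from your
# deploy tooling or registry (hypothetical here; plain dicts for the sketch)
Versions = Dict[str, str]
Drift = Tuple[str, str, str]  # (service, deployed_version, latest_version)


def find_stale_services(latest: Versions,
                        environments: Dict[str, Versions]) -> Dict[str, List[Drift]]:
    """Report, per environment, every service not running the latest version.

    A service absent from an environment is reported as "<missing>".
    Environments with no drift are left out of the result.
    """
    report: Dict[str, List[Drift]] = {}
    for env_name, deployed in environments.items():
        stale = [
            (service, deployed.get(service, "<missing>"), wanted)
            for service, wanted in sorted(latest.items())
            if deployed.get(service) != wanted
        ]
        if stale:
            report[env_name] = stale
    return report


if __name__ == "__main__":
    latest = {"service-a": "1.4.0", "service-b": "2.1.0", "service-c": "3.0.2"}
    environments = {
        "team-red": {"service-a": "1.4.0", "service-b": "2.1.0", "service-c": "3.0.1"},
        "team-blue": {"service-a": "1.4.0", "service-b": "2.1.0", "service-c": "3.0.2"},
    }
    print(find_stale_services(latest, environments))
    # {'team-red': [('service-c', '3.0.1', '3.0.2')]}
```

A check like this only reports stale code; it doesn’t fix the startup-time cost of refreshing a replica, and it says nothing about drift in data, configuration, or other stateful resources.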

Who’s making sure individual resources stay in sync?

This concern, like the others mentioned here, only appears at scale: if you have a small cluster that can be cloned and started in two minutes, very little of this article applies to you. But if that’s the case, you can probably sync all your services’ states by sending a quick Slack message to your single two-pizza team.

Third-party dependencies are another wrinkle with multiple testing environments. Secrets-handling policies often forbid copying the credentials for third-party services into every replicated testing environment; as a result, integrations with these services can’t be tested at an early stage. This puts pressure back on staging, since that is the only point where a real end-to-end test can happen.

Maintenance Overhead

Managing multiple environments also brings a considerable maintenance burden. Each environment must be updated, patched, and monitored independently, which increases operational complexity. This can strain IT resources, as teams must ensure that each environment stays in sync with the others, further escalating costs.

One notable case involved a large enterprise that found its duplicated environments increasingly difficult to maintain. Its testing environments diverged so far from production that deploying updates caused significant problems. The company saw frequent failures because changes tested in one environment didn’t accurately reflect the state of the production system, leading to costly delays and rework. The result was small teams “going rogue,” pushing their changes straight to staging and only checking whether they worked there. Not only were the replicated environments abandoned, hurting staging’s reliability, but the platform team was also still paying to run environments that no one was using.

Scalability Challenges

As applications grow, the number of environments may need to increase to accommodate the various stages of development, testing, and production. Scaling these environments can become prohibitively expensive, especially with large numbers of microservices. The infrastructure required to support many replicated environments can quickly outgrow the budget, making it difficult to stay cost-effective.

For instance, a tech company that initially managed its environments by duplicating production setups found that, as its service portfolio expanded, the cost of scaling those environments became unsustainable. The company struggled to keep up with the infrastructure demands, which led it to reassess its strategy.

Alternative Strategies

Given the high costs of environment duplication, it’s worth considering other strategies. One approach is dynamic environment provisioning, where environments are created on demand and torn down when no longer needed. This can optimize resource usage and reduce costs by avoiding permanently duplicated setups. It keeps costs down, but it still comes with the trade-off of sending some testing to staging anyway. That’s because spinning up these dynamic environments forces shortcuts, such as using mocks for third-party services. This can put us back at square one in terms of testing reliability, that is, how well our tests reflect what will happen in production.
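The on-demand lifecycle (create, keep alive while in use, tear down once idle) can be sketched as below. This is a hypothetical in-memory stand-in: the class names, the idle-timeout policy, and the fake clock are assumptions for illustration, and a real manager would call actual provisioning and teardown tooling. It deliberately leaves out the harder part the paragraph points to, replacing third-party dependencies with mocks.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EphemeralEnv:
    name: str
    last_used: float    # clock reading of the most recent activity
    ttl_seconds: float  # idle time allowed before teardown

    def expired(self, now: float) -> bool:
        return now - self.last_used >= self.ttl_seconds


class EnvironmentPool:
    """In-memory stand-in for an on-demand environment manager (hypothetical).

    A real version would call provisioning tooling in create() and the matching
    teardown in reap(); here both only update a dict so the lifecycle is visible.
    """

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._envs: Dict[str, EphemeralEnv] = {}

    def create(self, name: str, ttl_seconds: float) -> EphemeralEnv:
        env = EphemeralEnv(name, self._clock(), ttl_seconds)
        self._envs[name] = env
        return env

    def touch(self, name: str) -> None:
        """Record activity so an environment in use is not torn down."""
        self._envs[name].last_used = self._clock()

    def reap(self) -> List[str]:
        """Tear down every idle environment; return the names removed."""
        now = self._clock()
        expired = sorted(n for n, e in self._envs.items() if e.expired(now))
        for name in expired:
            del self._envs[name]  # a real manager would release resources here
        return expired

    def active(self) -> List[str]:
        return sorted(self._envs)


if __name__ == "__main__":
    now = [0.0]  # fake clock so the example is deterministic
    pool = EnvironmentPool(clock=lambda: now[0])
    pool.create("pr-101", ttl_seconds=3600)
    pool.create("pr-102", ttl_seconds=3600)
    now[0] = 1800.0
    pool.touch("pr-102")  # someone is still testing pr-102
    now[0] = 3600.0
    print(pool.reap())    # ['pr-101']
    print(pool.active())  # ['pr-102']
```

Idle-based expiry is one possible policy; a fixed lifetime per pull request would be simpler but would tear down environments that are still in use.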

At this point, it’s reasonable to consider other methods that use technical fixes to make staging and other near-production environments easier to test on. One of these is request isolation, a model that lets multiple tests run concurrently in the same shared environment.
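To make the idea concrete: under request isolation, the requests belonging to one test carry a routing key, and at each hop they are sent to the test’s version of a service when one exists and to the shared baseline otherwise. The sketch below is a hypothetical, framework-free version of that routing decision; the header name, addresses, and class are illustrative assumptions. In a real system this logic would more likely live in a proxy or service mesh than in application code, and the key would be carried along by context propagation (OpenTelemetry baggage is one common option).

```python
from typing import Dict, Mapping, Optional

ROUTING_HEADER = "x-sandbox-route"  # hypothetical header name


def routing_key(headers: Mapping[str, str]) -> Optional[str]:
    """Find the routing key; HTTP header names are case-insensitive."""
    for name, value in headers.items():
        if name.lower() == ROUTING_HEADER:
            return value
    return None


def propagate(headers: Mapping[str, str]) -> Dict[str, str]:
    """Headers a service must copy onto its outbound calls so the routing
    key survives every hop of the call chain."""
    return {name: value for name, value in headers.items()
            if name.lower() == ROUTING_HEADER}


class RequestRouter:
    """Send a request to a sandboxed service version only if its routing key
    names a sandbox that overrides that service; otherwise use the baseline."""

    def __init__(self, baseline: Dict[str, str]) -> None:
        self.baseline = dict(baseline)                  # service -> address
        self.sandboxes: Dict[str, Dict[str, str]] = {}  # key -> {service: address}

    def register_sandbox(self, key: str, overrides: Dict[str, str]) -> None:
        self.sandboxes[key] = dict(overrides)

    def resolve(self, service: str, headers: Mapping[str, str]) -> str:
        key = routing_key(headers)
        override = self.sandboxes.get(key, {}).get(service) if key else None
        return override or self.baseline[service]


if __name__ == "__main__":
    router = RequestRouter({
        "service-b": "http://service-b.baseline:8080",
        "service-c": "http://service-c.baseline:8080",
    })
    router.register_sandbox("pr-123", {"service-b": "http://service-b-pr-123:8080"})

    tagged = {"X-Sandbox-Route": "pr-123"}
    print(router.resolve("service-b", tagged))  # sandboxed service-b
    print(router.resolve("service-c", tagged))  # baseline service-c (not overridden)
    print(router.resolve("service-b", {}))      # untagged traffic: baseline
```

Only the services under test are deployed twice; everything else, including real third-party integrations configured in the shared environment, is reached through the baseline, which is what lets many tests share one environment.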

Conclusion: A Cost That Doesn’t Scale

While duplicating environments may seem like a practical way to ensure consistency across microservices, the infrastructure costs involved can be significant. By exploring alternative strategies such as dynamic provisioning and request isolation, organizations can better manage their resources and reduce the financial impact of maintaining multiple environments. Real-world examples illustrate the challenges and costs of traditional duplication, underscoring the need for more efficient approaches in modern software development.