Code embeddings are a transformative technique to symbolize code snippets as dense vectors in a steady area. These embeddings seize the semantic and purposeful relationships between code snippets, enabling highly effective purposes in AI-assisted programming. Much like phrase embeddings in pure language processing (NLP), code embeddings place related code snippets shut collectively within the vector area, permitting machines to grasp and manipulate code extra successfully.

What are Code Embeddings?

Code embeddings convert complicated code buildings into numerical vectors that seize the that means and performance of the code. Not like conventional strategies that deal with code as sequences of characters, embeddings seize the semantic relationships between elements of the code. That is essential for numerous AI-driven software program engineering duties, reminiscent of code search, completion, bug detection, and extra.

For instance, think about these two Python features:

def add_numbers(a, b):

return a + b

def sum_two_values(x, y):

outcome = x + y

return outcome

Whereas these features look completely different syntactically, they carry out the identical operation. code embedding would symbolize these two features with related vectors, capturing their purposeful similarity regardless of their textual variations.

Vector Embedding

How are Code Embeddings Created?

There are completely different methods for creating code embeddings. One widespread method entails utilizing neural networks to study these representations from a big dataset of code. The community analyzes the code construction, together with tokens (key phrases, identifiers), syntax (how the code is structured), and doubtlessly feedback to study the relationships between completely different code snippets.

Let’s break down the method:

- Code as a Sequence: First, code snippets are handled as sequences of tokens (variables, key phrases, operators).

- Neural Community Coaching: A neural community processes these sequences and learns to map them to fixed-size vector representations. The community considers elements like syntax, semantics, and relationships between code parts.

- Capturing Similarities: The coaching goals to place related code snippets (with related performance) shut collectively within the vector area. This enables for duties like discovering related code or evaluating performance.

Here is a simplified Python instance of the way you may preprocess code for embedding:

import ast

def tokenize_code(code_string):

tree = ast.parse(code_string)

tokens = []

for node in ast.stroll(tree):

if isinstance(node, ast.Identify):

tokens.append(node.id)

elif isinstance(node, ast.Str):

tokens.append('STRING')

elif isinstance(node, ast.Num):

tokens.append('NUMBER')

# Add extra node sorts as wanted

return tokens

# Instance utilization

code = """

def greet(title):

print("Hello, " + title + "!")

"""

tokens = tokenize_code(code)

print(tokens)

# Output: ['def', 'greet', 'name', 'print', 'STRING', 'name', 'STRING']

This tokenized illustration can then be fed right into a neural community for embedding.

Current Approaches to Code Embedding

Current strategies for code embedding could be categorised into three predominant classes:

Token-Primarily based Strategies

Token-based strategies deal with code as a sequence of lexical tokens. Strategies like Time period Frequency-Inverse Doc Frequency (TF-IDF) and deep studying fashions like CodeBERT fall into this class.

Tree-Primarily based Strategies

Tree-based strategies parse code into summary syntax bushes (ASTs) or different tree buildings, capturing the syntactic and semantic guidelines of the code. Examples embrace tree-based neural networks and fashions like code2vec and ASTNN.

Graph-Primarily based Strategies

Graph-based strategies assemble graphs from code, reminiscent of management movement graphs (CFGs) and knowledge movement graphs (DFGs), to symbolize the dynamic conduct and dependencies of the code. GraphCodeBERT is a notable instance.

TransformCode: A Framework for Code Embedding

TransformCode: Unsupervised studying of code embedding

TransformCode is a framework that addresses the restrictions of current strategies by studying code embeddings in a contrastive studying method. It’s encoder-agnostic and language-agnostic, that means it might leverage any encoder mannequin and deal with any programming language.

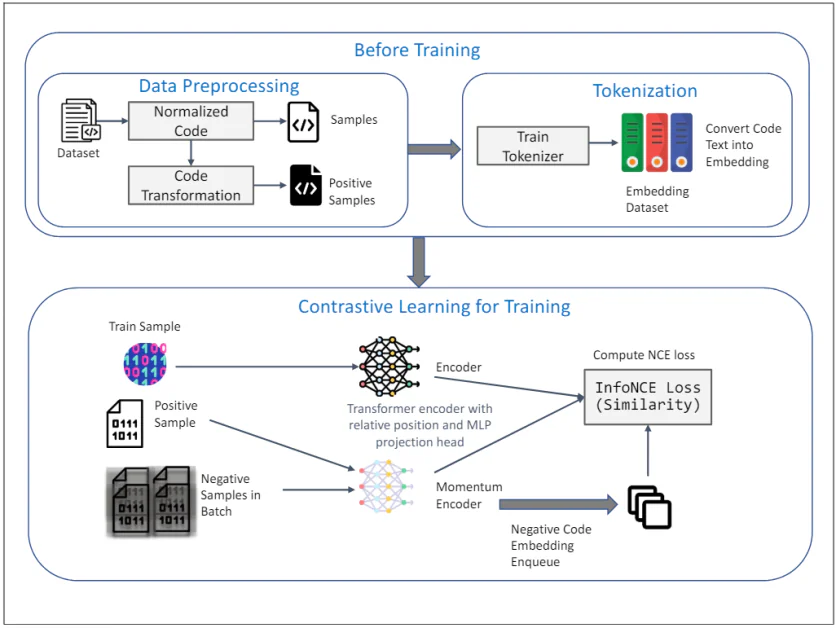

The diagram above illustrates the framework of TransformCode for unsupervised studying of code embedding utilizing contrastive studying. It consists of two predominant phases: Earlier than Coaching and Contrastive Studying for Coaching. Here is an in depth rationalization of every element:

Earlier than Coaching

1. Information Preprocessing:

- Dataset: The preliminary enter is a dataset containing code snippets.

- Normalized Code: The code snippets bear normalization to take away feedback and rename variables to a normal format. This helps in decreasing the affect of variable naming on the educational course of and improves the generalizability of the mannequin.

- Code Transformation: The normalized code is then remodeled utilizing numerous syntactic and semantic transformations to generate optimistic samples. These transformations be certain that the semantic that means of the code stays unchanged, offering numerous and strong samples for contrastive studying.

2. Tokenization:

- Practice Tokenizer: A tokenizer is skilled on the code dataset to transform code textual content into embeddings. This entails breaking down the code into smaller models, reminiscent of tokens, that may be processed by the mannequin.

- Embedding Dataset: The skilled tokenizer is used to transform your entire code dataset into embeddings, which function the enter for the contrastive studying section.

Contrastive Studying for Coaching

3. Coaching Course of:

- Practice Pattern: A pattern from the coaching dataset is chosen because the question code illustration.

- Optimistic Pattern: The corresponding optimistic pattern is the remodeled model of the question code, obtained in the course of the knowledge preprocessing section.

- Adverse Samples in Batch: Adverse samples are all different code samples within the present mini-batch which can be completely different from the optimistic pattern.

4. Encoder and Momentum Encoder:

- Transformer Encoder with Relative Place and MLP Projection Head: Each the question and optimistic samples are fed right into a Transformer encoder. The encoder incorporates relative place encoding to seize the syntactic construction and relationships between tokens within the code. An MLP (Multi-Layer Perceptron) projection head is used to map the encoded representations to a lower-dimensional area the place the contrastive studying goal is utilized.

- Momentum Encoder: A momentum encoder can be used, which is up to date by a shifting common of the question encoder’s parameters. This helps keep the consistency and variety of the representations, stopping the collapse of the contrastive loss. The unfavourable samples are encoded utilizing this momentum encoder and enqueued for the contrastive studying course of.

5. Contrastive Studying Goal:

- Compute InfoNCE Loss (Similarity): The InfoNCE (Noise Contrastive Estimation) loss is computed to maximise the similarity between the question and optimistic samples whereas minimizing the similarity between the question and unfavourable samples. This goal ensures that the discovered embeddings are discriminative and strong, capturing the semantic similarity of the code snippets.

The whole framework leverages the strengths of contrastive studying to study significant and strong code embeddings from unlabeled knowledge. Using AST transformations and a momentum encoder additional enhances the standard and effectivity of the discovered representations, making TransformCode a robust device for numerous software program engineering duties.

Key Options of TransformCode

- Flexibility and Adaptability: May be prolonged to varied downstream duties requiring code illustration.

- Effectivity and Scalability: Doesn’t require a big mannequin or in depth coaching knowledge, supporting any programming language.

- Unsupervised and Supervised Studying: May be utilized to each studying situations by incorporating task-specific labels or targets.

- Adjustable Parameters: The variety of encoder parameters could be adjusted primarily based on obtainable computing assets.

TransformCode introduces An information-augmentation approach referred to as AST transformation, making use of syntactic and semantic transformations to the unique code snippets. This generates numerous and strong samples for contrastive studying.

Functions of Code Embeddings

Code embeddings have revolutionized numerous features of software program engineering by remodeling code from a textual format to a numerical illustration usable by machine studying fashions. Listed below are some key purposes:

Improved Code Search

Historically, code search relied on key phrase matching, which regularly led to irrelevant outcomes. Code embeddings allow semantic search, the place code snippets are ranked primarily based on their similarity in performance, even when they use completely different key phrases. This considerably improves the accuracy and effectivity of discovering related code inside giant codebases.

Smarter Code Completion

Code completion instruments counsel related code snippets primarily based on the present context. By leveraging code embeddings, these instruments can present extra correct and useful options by understanding the semantic that means of the code being written. This interprets to sooner and extra productive coding experiences.

Automated Code Correction and Bug Detection

Code embeddings can be utilized to establish patterns that usually point out bugs or inefficiencies in code. By analyzing the similarity between code snippets and identified bug patterns, these techniques can robotically counsel fixes or spotlight areas which may require additional inspection.

Enhanced Code Summarization and Documentation Era

Giant codebases typically lack correct documentation, making it troublesome for brand spanking new builders to grasp their workings. Code embeddings can create concise summaries that seize the essence of the code’s performance. This not solely improves code maintainability but additionally facilitates information switch inside growth groups.

Improved Code Evaluations

Code evaluations are essential for sustaining code high quality. Code embeddings can help reviewers by highlighting potential points and suggesting enhancements. Moreover, they’ll facilitate comparisons between completely different code variations, making the overview course of extra environment friendly.

Cross-Lingual Code Processing

The world of software program growth is just not restricted to a single programming language. Code embeddings maintain promise for facilitating cross-lingual code processing duties. By capturing the semantic relationships between code written in numerous languages, these methods may allow duties like code search and evaluation throughout programming languages.