Introduction to the Problem

Managing concurrency in financial transaction systems is one of the most complex challenges faced by developers and system architects. Concurrency issues arise when multiple transactions are processed simultaneously, which can lead to conflicts and data inconsistencies. These issues manifest in various forms, such as overdrawn accounts, duplicate transactions, or mismatched records, all of which can severely undermine the system's reliability and trustworthiness.

In the financial world, where the stakes are exceptionally high, even a single error can result in significant financial losses, regulatory violations, and reputational damage to the organization. Consequently, it is critical to implement robust mechanisms to handle concurrency effectively, ensuring the system's integrity and reliability.

Complexities in Money Transfer Applications

At first glance, managing a customer's account balance might seem like a straightforward task. The core operations (crediting an account, allowing withdrawals, or transferring funds between accounts) are essentially simple database transactions. These transactions typically involve adding to or subtracting from the account balance, with the primary concern being to prevent overdrafts and maintain a positive or zero balance at all times.

However, the reality is far more complex. Before executing any transaction, it is often necessary to perform a series of checks with other systems. For example, the system must verify that the account in question actually exists, which usually involves querying a central account database or service. Moreover, the system must ensure that the account is not blocked due to issues such as suspicious activity, regulatory compliance concerns, or pending verification processes.

These additional steps introduce layers of complexity that go beyond simple debit and credit operations. Robust checks and balances are required to ensure that customer balances are managed securely and accurately, adding significant complexity to the overall system.

Real-World Requirements (KYC, Fraud Prevention, etc.)

Consider a practical example of a money transfer company that allows customers to transfer funds across different currencies and countries. From the customer's perspective, the process is simple:

- The customer opens an account in the system.

- A EUR account is created to receive money.

- The customer creates a recipient in the system.

- The customer initiates a transfer of €100, converted to $110, to the recipient.

- The system waits for the inbound €100.

- Once the funds arrive, they are converted to $110.

- Finally, the system sends $110 to the recipient.

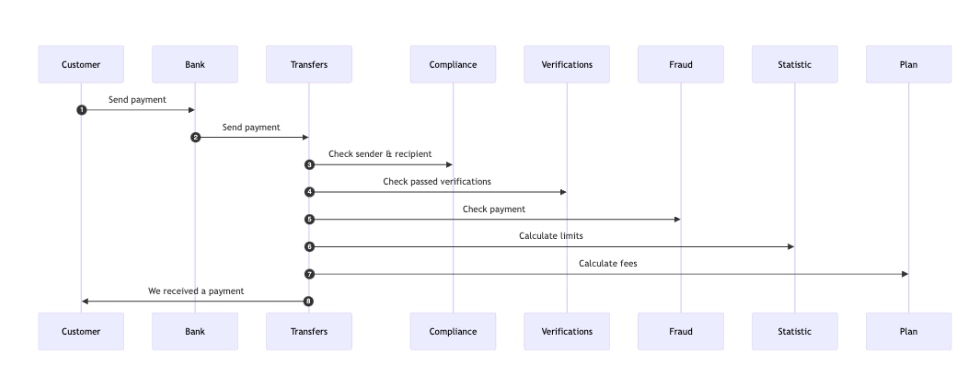

This course of could be visualized as follows:

While this sequence appears simple, real-world requirements introduce additional complexity:

- Payment verification:

  - The system must verify the origin of the inbound payment.

  - The payer's bank account must be valid.

  - The bank's BIC code must be authorized within the system.

  - If the payment originates from a non-bank payment system, additional checks are required.

- Recipient validation:

  - The recipient's bank account must be active.

- Customer validation:

  - The recipient must pass various checks, such as identity verification (e.g., a valid passport and a confirmed selfie ID).

- Source of funds and compliance:

  - Depending on the inbound transfer amount, the source of funds may need to be verified.

  - The fraud prevention system should review the inbound payment.

  - Neither the sender nor the recipient should appear on any sanctions list.

- Transaction limits and fees:

  - The system should calculate monthly and annual payment limits to determine applicable fees.

  - If the transaction involves currency conversion, the system must handle foreign exchange rates.

- Audit and compliance:

  - The system must log all transactions for auditing and compliance purposes.

These requirements add significant complexity to what initially seems like a straightforward process. Moreover, based on the results of these checks, the payment may require manual review, further extending the payment process.

Visualization of Data Flow and Potential Failure Points

In a financial transaction system, the data flow for handling inbound payments involves multiple steps and checks to ensure compliance, security, and accuracy. However, potential failure points exist throughout this process, particularly when external systems impose restrictions or when the system must dynamically decide on the course of action based on real-time data.

Standard Inbound Payment Flow

Here's a simplified visualization of the data flow when handling an inbound payment, including the sequence of interactions between the various components:

Explanation of the Flow

- Customer initiates payment: The customer sends a payment to their bank.

- Bank sends payment: The bank forwards the payment to the transfer system.

- Compliance check: The transfer system checks the sender and recipient against compliance regulations.

- Verification checks: The system verifies whether the sender and recipient have passed the necessary identity and document verifications.

- Fraud detection: A fraud check is performed to ensure the payment is not suspicious.

- Statistics calculation: The system calculates transaction limits and other relevant metrics.

- Fee calculation: Any applicable fees are calculated.

- Confirmation: The system confirms receipt of the payment to the customer.

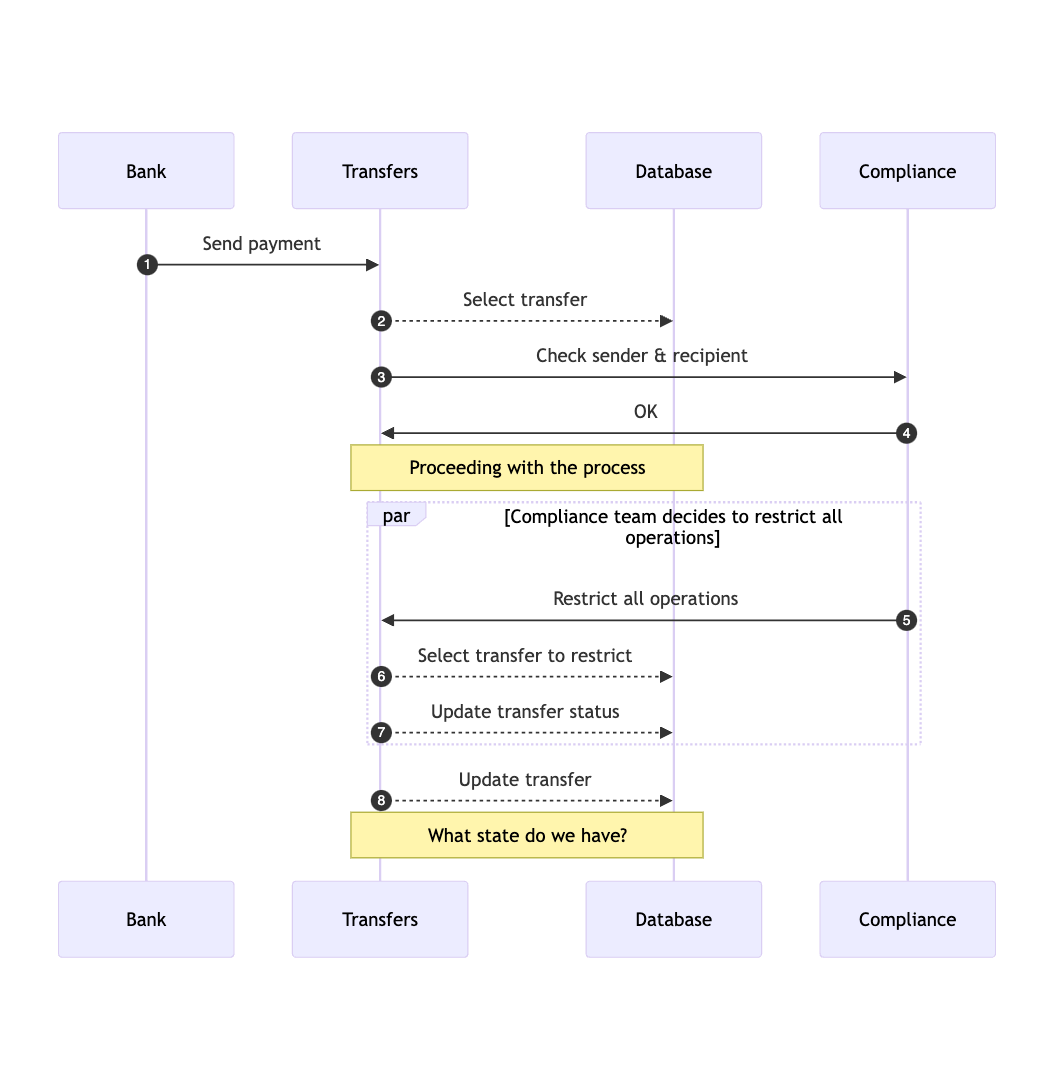

Potential Failure Points and Dynamic Restrictions

While the above flow seems straightforward, the process can become complicated due to dynamic changes, such as when an external system imposes restrictions on a customer's account.

Here's how the process might unfold, highlighting the potential failure points:

Explanation of the Potential Failure Points

- Dynamic restrictions:

  - During the process, the compliance team may decide to restrict all operations for a particular customer due to sanctions or other regulatory reasons. This introduces a potential failure point where the process could be halted or altered midway.

- Database state conflicts:

  - After compliance decides to restrict operations, the transfer system must update the state of the transfer in the database. The challenge here lies in managing state consistency, particularly if multiple operations occur simultaneously or if there are conflicting updates.

  - The system must ensure that the transfer's state is accurately reflected in the database, taking into account the restriction imposed. If not handled carefully, this could lead to inconsistent states or failed transactions.

- Decision points:

  - The system's ability to dynamically recalculate the state and decide whether to accept or reject an inbound payment is crucial. Any misstep in this decision-making process could result in unauthorized transactions, blocked funds, or legal violations.

Visualizing the data flow and identifying potential failure points in financial transaction systems reveals the complexity and risks involved in handling payments. By understanding these risks, system architects can design more robust mechanisms to manage state, handle dynamic changes, and ensure the integrity of the transaction process.

Traditional Approaches to Concurrency

There are several approaches to addressing concurrency challenges in financial transaction systems.

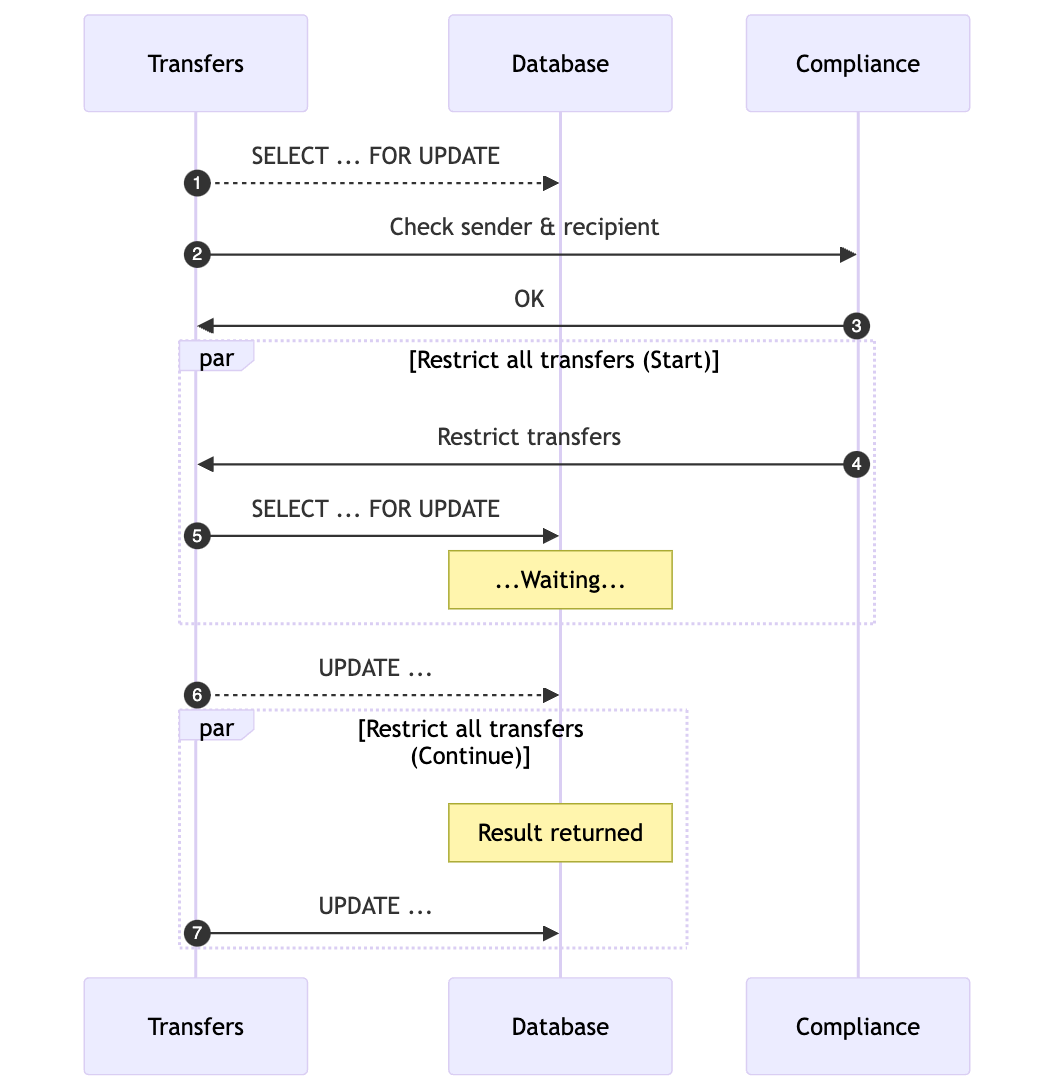

Database Transactions and Their Limitations

The most straightforward approach to managing concurrency is through database transactions. To start, let's define our context: the transfer system stores its data in a Postgres database. While the database topology can vary (spread across multiple instances, data centers, locations, or regions), our focus here is on a simple, single Postgres database instance handling both reads and writes.

To ensure that one transaction doesn't overwrite another's data, we can lock the row associated with the transfer:

SELECT * FROM transfers WHERE id = 'ABCD' FOR UPDATE;

This command locks the row at the start of the process and releases the lock once the transaction completes (on commit or rollback). The following diagram illustrates how this approach addresses the issue of lost updates:

While this approach can solve the problem of lost updates in simple scenarios, it becomes less effective as the system scales and the number of active transactions increases.

Scaling Issues and Resource Exhaustion

Let's consider the implications of scaling this approach. Assume that processing one payment takes 5 seconds, and the system handles 100 inbound payments every second. This results in 500 active transactions at any given time. Each of these transactions requires a database connection, which can quickly lead to resource exhaustion, increased latency, and degraded system performance, particularly under high load.
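The figure of 500 can be read as an application of Little's law, which relates the average number of in-flight items $L$ to the arrival rate $\lambda$ and the average time $W$ each item spends in the system. Using only the numbers from the text:

```latex
L = \lambda W
  = 100\ \tfrac{\text{payments}}{\text{s}} \times 5\ \text{s}
  = 500\ \text{concurrent transactions}
```

With one database connection held per open transaction, the same arithmetic gives roughly 500 connections in use on average, before accounting for bursts.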

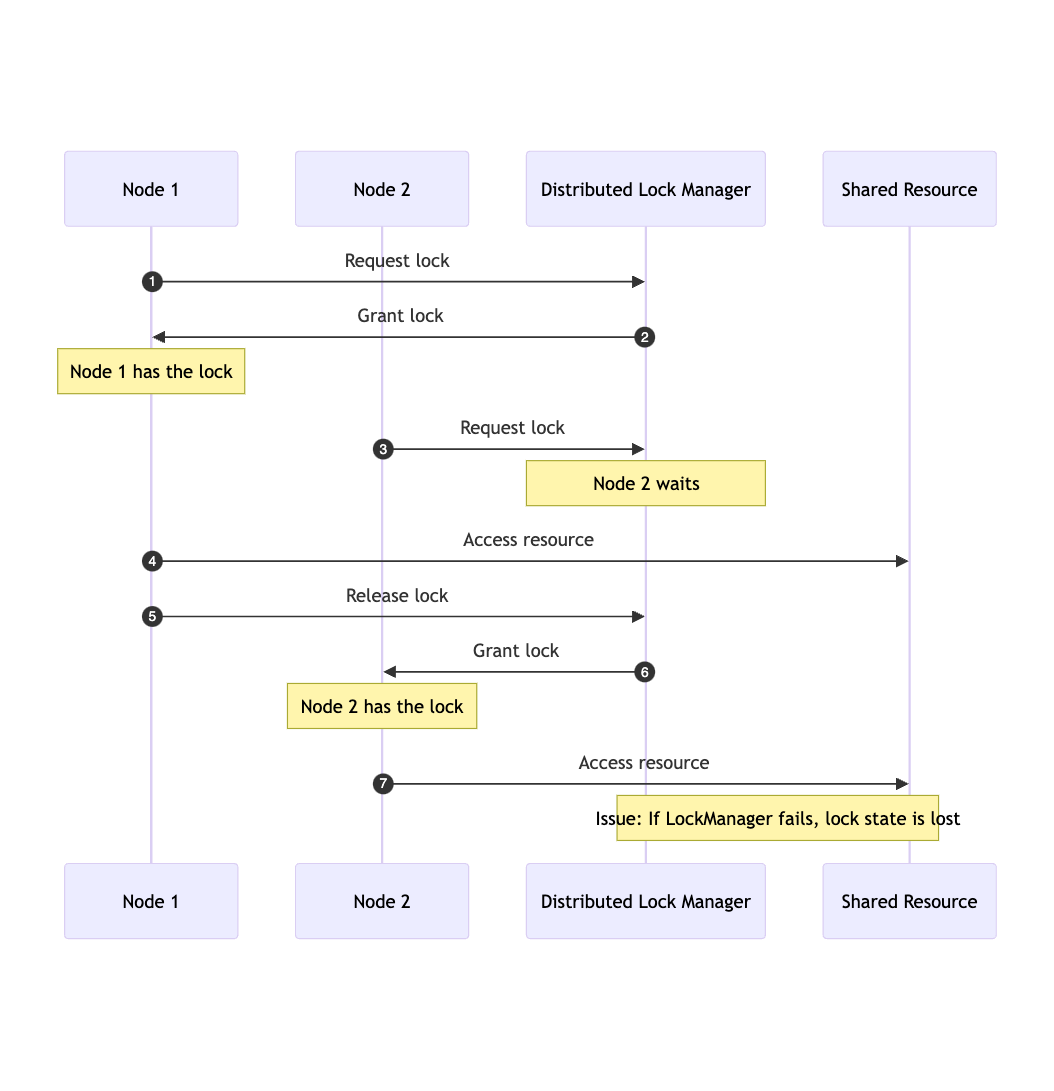

Locks: Local and Distributed

Local locks are another common method for managing concurrency within a single application instance. They ensure that critical sections of code are executed by only one thread at a time, preventing race conditions and ensuring data consistency. Implementing local locks is relatively simple using constructs like synchronized blocks or ReentrantLock in Java, which manage access to shared resources effectively within a single process.
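As a minimal sketch of a local lock, the class below guards a balance with a `ReentrantLock` so that the overdraft check and the debit happen as one critical section. `LockedAccount` and its methods are illustrative names, not part of the transfer system described here.

```java
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical account class used only to illustrate a local lock.
class LockedAccount {
    private final ReentrantLock lock = new ReentrantLock();
    private long balanceCents;

    LockedAccount(long initialCents) {
        this.balanceCents = initialCents;
    }

    // Checks and debits under the lock; returns false instead of overdrawing.
    boolean withdraw(long amountCents) {
        lock.lock();
        try {
            if (amountCents <= 0 || amountCents > balanceCents) {
                return false;
            }
            balanceCents -= amountCents;
            return true;
        } finally {
            lock.unlock(); // always release, even if the body throws
        }
    }

    long balanceCents() {
        lock.lock();
        try {
            return balanceCents;
        } finally {
            lock.unlock();
        }
    }
}
```

The lock only protects threads inside one JVM; a second application instance holding its own `LockedAccount` for the same customer would not be blocked by it.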

However, local locks fall short in distributed environments where multiple instances of an application need to coordinate their actions. In such scenarios, a local lock on one instance doesn't prevent conflicting actions on other instances. This is where distributed locks come into play. Distributed locks ensure that only one instance of an application can access a particular resource at any given time, regardless of which node in the cluster is executing the code.

Implementing distributed locks is inherently more complex, often requiring external systems like ZooKeeper, Consul, Hazelcast, or Redis to manage the lock state across multiple nodes. These systems must be highly available and consistent to prevent the distributed lock mechanism from becoming a single point of failure or a bottleneck.

The following diagram illustrates the typical flow of a distributed lock system:

The Problem of Ordering

In distributed systems, where multiple nodes may request locks simultaneously, ensuring fair processing and maintaining data consistency can be challenging. Achieving an ordered queue of lock requests across nodes involves several difficulties:

- Network latency: Varying latencies can make strict ordering difficult to maintain.

- Fault tolerance: The ordering mechanism must be fault-tolerant and must not become a single point of failure, which adds complexity to the system.

Waiting Lock Consumers and Deadlocks

When multiple nodes hold various resources and wait for each other to release locks, a deadlock can occur, halting system progress. To mitigate this, distributed locks often incorporate timeouts.

Timeouts

- Lock acquisition timeouts: Nodes specify a maximum wait time for a lock. If the lock is not granted within this time, the request times out, preventing indefinite waiting.

- Lock holding timeouts: Nodes holding a lock have a maximum duration to hold it. If the time is exceeded, the lock is automatically released to prevent resources from being held indefinitely.

- Timeout handling: When a timeout occurs, the system must handle it gracefully, whether by retrying, aborting, or triggering compensating actions.
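The acquisition-timeout idea can be sketched with a local `ReentrantLock` standing in for a distributed lock client. `TimedLockRunner` and `runWithTimeout` are hypothetical names, and a real distributed lock would also need a holding timeout (lease) enforced by the lock service, which this sketch does not model.

```java
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;
import java.util.function.Supplier;

// Hypothetical helper: a local lock used as a stand-in for a distributed one.
class TimedLockRunner {
    private final ReentrantLock lock = new ReentrantLock();

    // Runs the action under the lock, or returns the fallback if the lock
    // cannot be acquired within timeoutMillis.
    <T> T runWithTimeout(long timeoutMillis, Supplier<T> action, T fallback) {
        boolean acquired = false;
        try {
            acquired = lock.tryLock(timeoutMillis, TimeUnit.MILLISECONDS);
            if (!acquired) {
                return fallback; // acquisition timed out: caller may retry or abort
            }
            return action.get();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt flag
            return fallback;
        } finally {
            if (acquired) {
                lock.unlock();
            }
        }
    }
}
```

Returning a fallback keeps the timeout decision with the caller, which can then choose between retrying, aborting, or starting a compensating action.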

Considering these challenges, guaranteeing reliable payment processing in a system that relies on distributed locking is a complex endeavor. Balancing the need for concurrency control with the realities of distributed systems requires careful planning and robust design.

A Paradigm Shift: Simplifying Concurrency

Let's take a step back and review our transfer processing approach. By breaking the process into smaller steps, we can simplify each operation, making the entire system more manageable and reducing the risk of concurrency issues.

When a payment is received, it triggers a series of checks, each requiring computations from different systems. Once all the results are in, the system decides on the next course of action. These steps resemble transitions in a finite state machine (FSM).

Introducing a Message-Based Processing Model

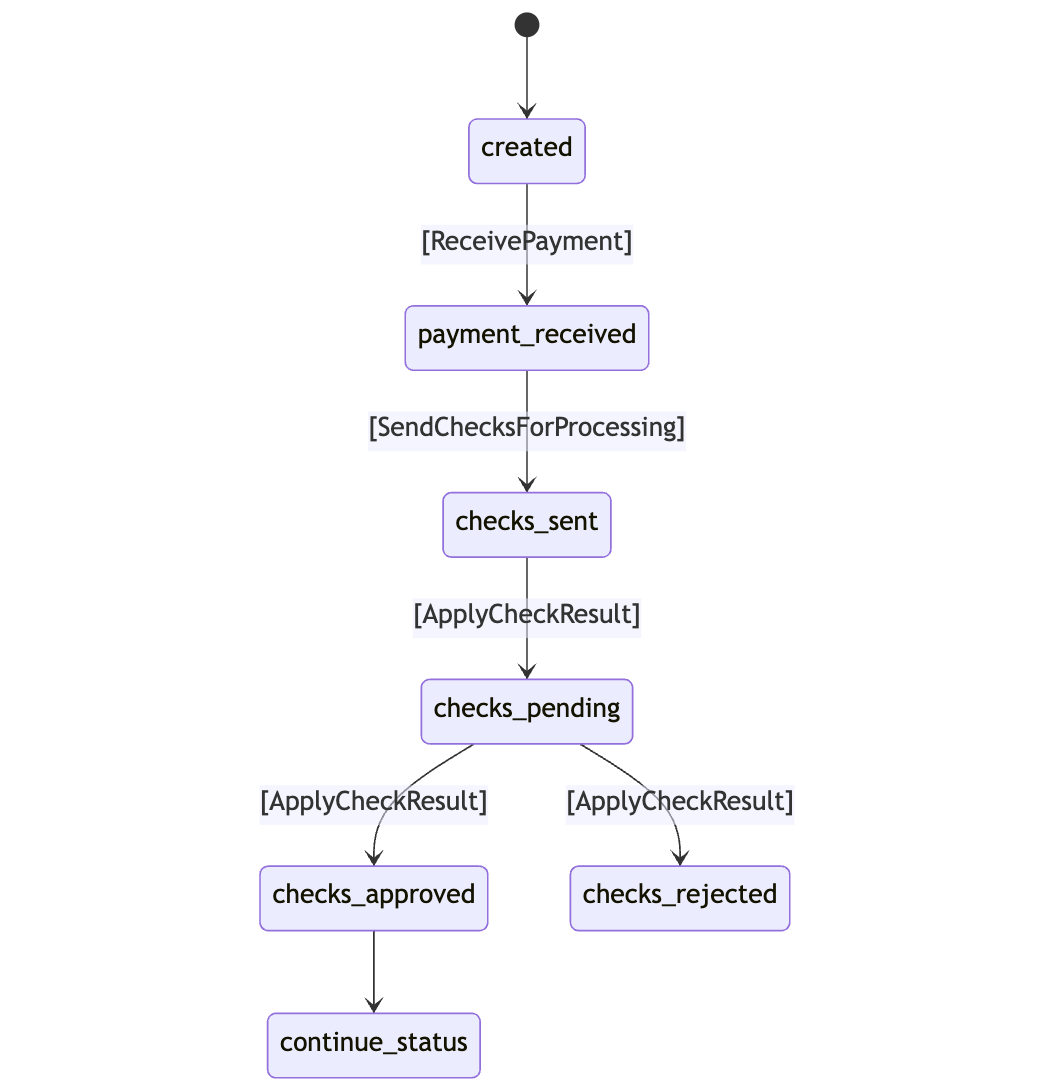

As shown in the diagram, payment processing involves a combination of commands and state transitions. For each command, the system identifies the initial state and the possible transition states.

For example, if the system receives the [ReceivePayment] command, it checks whether the transfer is in the created state. If not, it does nothing. For the [ApplyCheckResult] command, the system transitions the transfer to either checks_approved or checks_rejected based on the results of the checks.

These checks are designed to be granular and quick to process, as each check operates independently and doesn't modify the transfer state directly. A check only requires its input data to determine its result.

Here is how the code for such processing might look, showing how these components interact to send, receive, and process checks:

    import java.util.List;
    import java.util.UUID;

    // State, CheckType, and Data are assumed to be defined elsewhere.
    enum CheckStatus {
        NEW,
        ACCEPTED,
        REJECTED
    }

    record Check(UUID transferId, CheckType type, CheckStatus status, Data data) {}

    class CheckProcessor {
        void process(Check check) {
            // Run all required calculations
            // Send the result to `TransferProcessor`
        }
    }

    enum TransferStatus {
        CREATED,
        PAYMENT_RECEIVED,
        CHECKS_SENT,
        CHECKS_PENDING,
        CHECKS_APPROVED,
        CHECKS_REJECTED
    }

    record Transfer(UUID id, List<Check> checks) {}

    sealed interface Command permits
            ReceivePayment,
            SendChecks,
            ApplyCheckResult {}

    class TransferProcessor {
        State process(State state, Command command) {
            // (1) If status == CREATED and command is `ReceivePayment`
            //     (2) Write payment details to the state
            //     (3) Send command `SendChecks` to self
            //     (4) Set status = PAYMENT_RECEIVED
            // (5) If status == PAYMENT_RECEIVED and command is `SendChecks`
            //     (6) Calculate all required checks (without processing)
            //     (7) Send checks for processing to other processors
            //     (8) Set status = CHECKS_SENT
            // (9) If status == CHECKS_SENT or CHECKS_PENDING
            //     and command is `ApplyCheckResult`
            //     (10) Update `transfer.checks()`
            //     (11) Compute the overall status
            //     (12) If all checks are accepted, set status = CHECKS_APPROVED
            //     (13) If any check is rejected, set status = CHECKS_REJECTED
            //     (14) Otherwise, set status = CHECKS_PENDING
            // A command that does not match the current status leaves the state unchanged.
            return state;
        }
    }

This approach reduces processing latency by offloading check result calculations to separate processes, leading to fewer concurrent operations. However, it doesn't entirely solve the problem of ensuring atomic processing of commands.
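To make the transitions concrete, here is a small runnable sketch of the state machine as a pure function from (state, command) to new state. The type names (`TransferState`, `TransferCommand`, `CheckKind`, and so on) and the fixed set of three checks are assumptions made for illustration, and the "send command to self" step is left to the caller rather than modeled with messaging.

```java
import java.util.EnumMap;
import java.util.Map;

enum Status { CREATED, PAYMENT_RECEIVED, CHECKS_SENT, CHECKS_PENDING, CHECKS_APPROVED, CHECKS_REJECTED }
enum CheckKind { KYC, FRAUD, SANCTIONS }          // hypothetical set of checks
enum CheckOutcome { NEW, ACCEPTED, REJECTED }

sealed interface TransferCommand permits ReceivePayment, SendChecks, ApplyCheckResult {}
record ReceivePayment(long amountCents) implements TransferCommand {}
record SendChecks() implements TransferCommand {}
record ApplyCheckResult(CheckKind kind, CheckOutcome outcome) implements TransferCommand {}

record TransferState(Status status, Map<CheckKind, CheckOutcome> checks) {
    static TransferState created() {
        return new TransferState(Status.CREATED, Map.of());
    }
}

class TransferStateMachine {
    // Returns the next state; a command that does not fit the current status is ignored.
    TransferState apply(TransferState state, TransferCommand command) {
        if (command instanceof ReceivePayment && state.status() == Status.CREATED) {
            return new TransferState(Status.PAYMENT_RECEIVED, state.checks());
        }
        if (command instanceof SendChecks && state.status() == Status.PAYMENT_RECEIVED) {
            Map<CheckKind, CheckOutcome> checks = new EnumMap<>(CheckKind.class);
            for (CheckKind kind : CheckKind.values()) {
                checks.put(kind, CheckOutcome.NEW);
            }
            return new TransferState(Status.CHECKS_SENT, checks);
        }
        if (command instanceof ApplyCheckResult result
                && (state.status() == Status.CHECKS_SENT || state.status() == Status.CHECKS_PENDING)) {
            Map<CheckKind, CheckOutcome> checks = new EnumMap<>(CheckKind.class);
            checks.putAll(state.checks());
            checks.put(result.kind(), result.outcome());
            return new TransferState(overall(checks), checks);
        }
        return state;
    }

    private static Status overall(Map<CheckKind, CheckOutcome> checks) {
        if (checks.containsValue(CheckOutcome.REJECTED)) {
            return Status.CHECKS_REJECTED;
        }
        boolean allAccepted = checks.values().stream().allMatch(o -> o == CheckOutcome.ACCEPTED);
        return allAccepted ? Status.CHECKS_APPROVED : Status.CHECKS_PENDING;
    }
}
```

Because `apply` has no side effects, the same command replayed against the same state always yields the same result, which is what makes one-at-a-time processing of commands sufficient for correctness.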

Communication By means of Messages

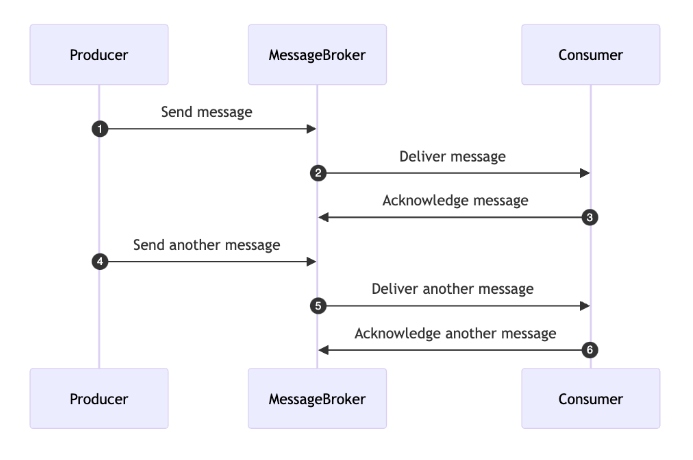

On this mannequin, communication between completely different elements of the system happens by messages. This strategy allows asynchronous communication, decoupling parts and enhancing flexibility and scalability. Messages are managed by queues and message brokers, which guarantee orderly transmission and reception of messages.

The diagram under illustrates this course of:

One-at-a-Time Message Handling

To ensure correct and consistent command processing, it is crucial to order and linearize all messages for a single transfer. This means messages should be processed in the order they were sent, and no two messages for the same transfer should be processed concurrently. Sequential processing ensures that each step in the transaction lifecycle occurs in the correct sequence, preventing race conditions, data corruption, or inconsistent states.

Here's how it works:

- Message queue: A dedicated queue is maintained for each transfer to ensure that messages are processed in the order they are received.

- Consumer: The consumer fetches messages from the queue, processes them, and acknowledges successful processing.

- Sequential processing: The consumer processes each message one at a time, ensuring that no two messages for the same transfer are processed concurrently.
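A local analogue of this scheme can be sketched with a fixed set of single-threaded executors, where each key is always routed to the same "lane". `KeyedSerialExecutor` is a hypothetical class for illustration; it gives per-key ordering inside one JVM only and has none of the durability a message broker provides.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Hypothetical helper: tasks with the same key run one at a time, in submission order.
class KeyedSerialExecutor {
    private final ExecutorService[] lanes;

    KeyedSerialExecutor(int laneCount) {
        lanes = new ExecutorService[laneCount];
        for (int i = 0; i < laneCount; i++) {
            // Daemon threads so an idle executor does not keep the JVM alive.
            lanes[i] = Executors.newSingleThreadExecutor(r -> {
                Thread t = new Thread(r);
                t.setDaemon(true);
                return t;
            });
        }
    }

    // The same key always maps to the same single-threaded lane.
    CompletableFuture<Void> submit(String key, Runnable task) {
        int lane = Math.floorMod(key.hashCode(), lanes.length);
        return CompletableFuture.runAsync(task, lanes[lane]);
    }

    void shutdown() {
        for (ExecutorService lane : lanes) {
            lane.shutdown();
        }
    }
}
```

Different transfers may share a lane and therefore wait on each other, but two commands for the same transfer can never overlap. This is the same trade-off a partitioned topic makes.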

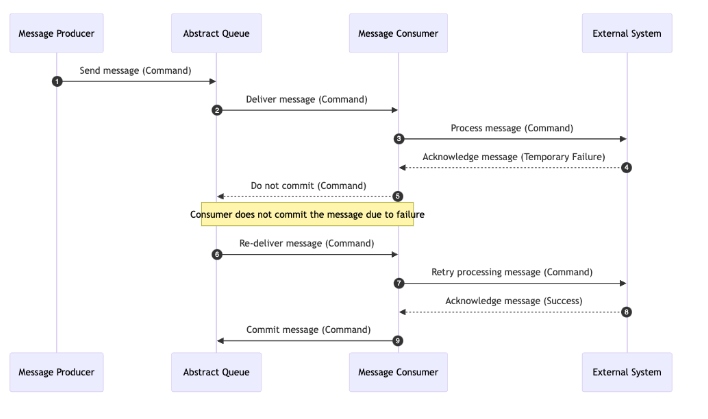

Durable Message Storage

Ensuring message durability is crucial in financial transaction systems because it allows the system to replay a message if the processor fails to handle the command due to issues like external payment failures, storage failures, or network problems.

Imagine a scenario where a payment processing command fails due to a temporary network outage or a database error. Without durable message storage, this command could be lost, leading to incomplete transactions or other inconsistencies. By storing messages durably, we ensure that every command and transaction step is persistently recorded. If a failure occurs, the system can recover and replay the message once the issue is resolved, ensuring the transaction completes successfully.

Durable message storage is also invaluable for dealing with external payment systems. If an external system fails to confirm a payment, we can replay the message to retry the operation without losing critical data, maintaining the integrity and consistency of our transactions.

Additionally, durable message storage is essential for auditing and compliance, providing a reliable log of all transactions and actions taken by the system, and making it easier to track and verify operations when needed.

The following diagram illustrates how durable message storage works:

By using durable message storage, the system becomes more reliable and resilient, ensuring that failures are handled gracefully without compromising data integrity or customer trust.

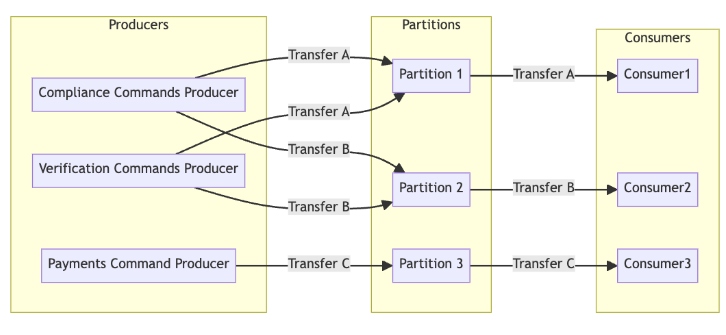

Kafka as a Messaging Backbone

Apache Kafka is a distributed streaming platform designed for high-throughput, low-latency message handling. It is widely used as a messaging backbone in complex systems due to its ability to handle real-time data feeds efficiently. Let's explore Kafka's core components, including producers, topics, partitions, and message routing, to understand how it operates within a distributed system.

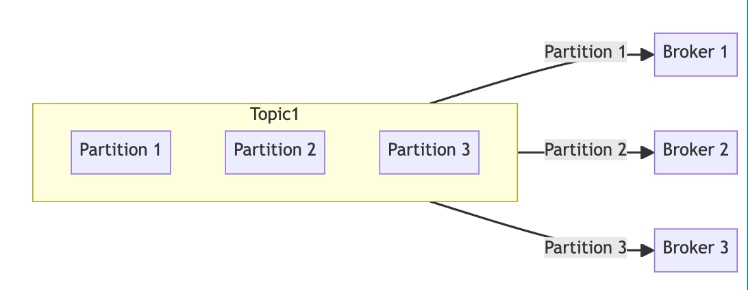

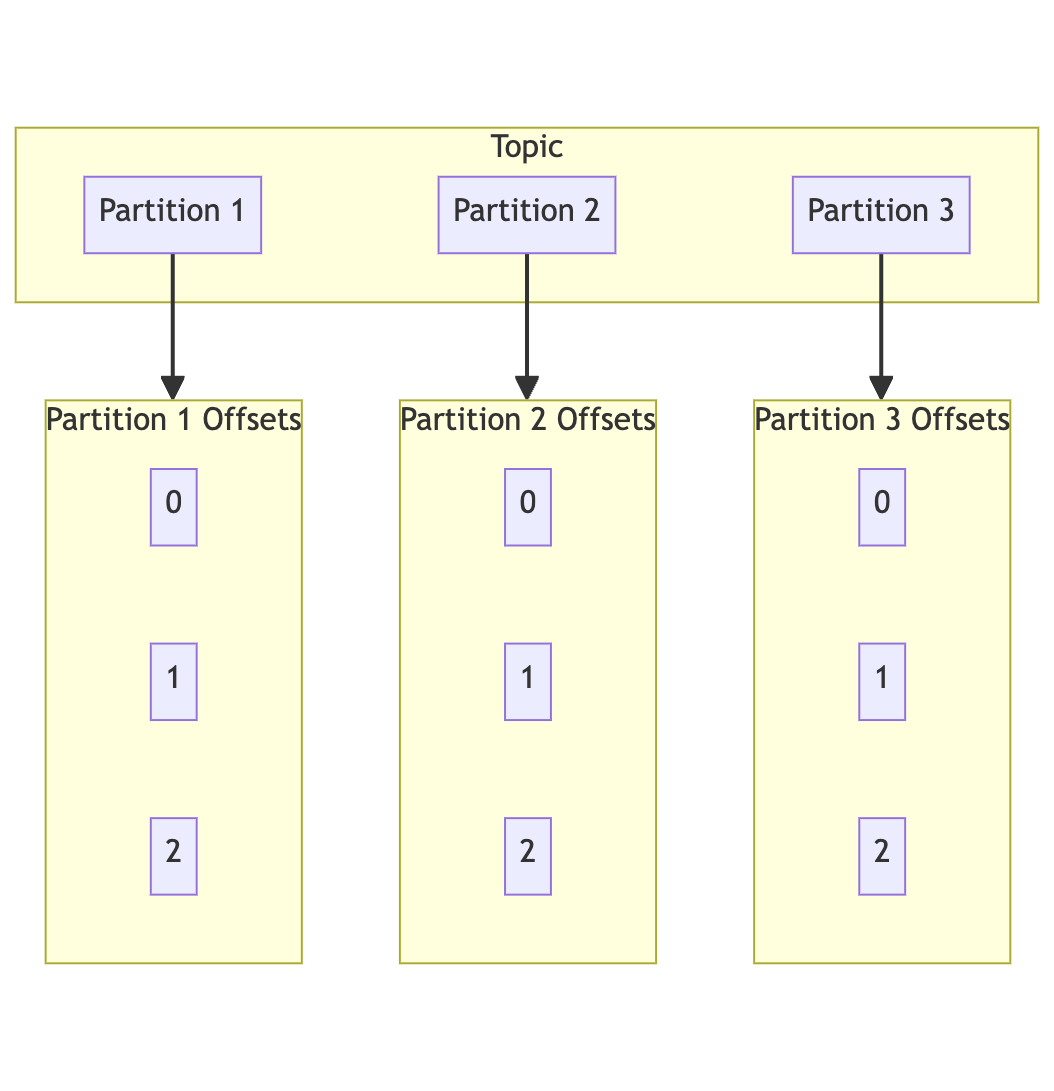

Topics and Partitions

Topics

In Kafka, a topic is a category or feed name under which records are stored and published. Topics are divided into partitions to facilitate parallel processing and scalability.

Partitions

Each topic can be divided into multiple partitions, which are the fundamental units of parallelism in Kafka. Partitions are ordered, immutable sequences of records that are continually appended to a structured commit log. Kafka stores data in these partitions across a distributed cluster of brokers. Each partition is replicated across multiple brokers to ensure fault tolerance and high availability. The replication factor determines the number of copies of the data, and Kafka automatically manages the replication process to ensure data consistency and reliability.

Each record within a partition has a unique offset, serving as the identifier for the record's position within the partition. This offset allows consumers to keep track of their position and continue processing from where they left off in case of a failure.

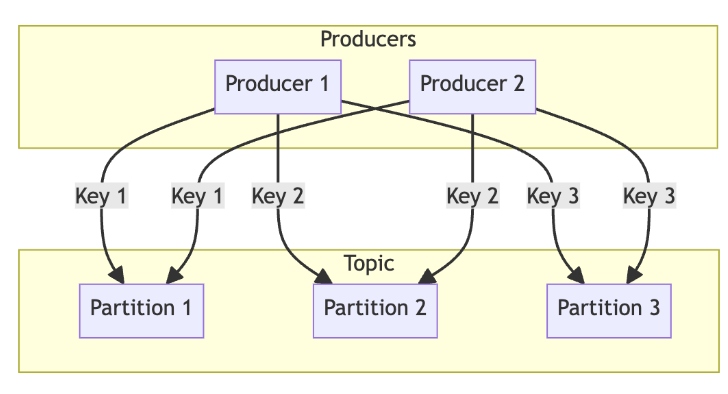

Message Routing

Kafka's message routing is a key mechanism that determines how messages are distributed across the partitions of a topic. There are several methods for routing messages:

- Round-robin: The default behavior for messages without a key, where messages are spread evenly across all available partitions to ensure a balanced load and efficient use of resources.

- Key-based routing: Messages with the same key are routed to the same partition, which is useful for maintaining the order of related messages and ensuring they are processed sequentially. For example, all transactions for a particular account can be routed to the same partition by using the account ID as the key.

- Custom partitioners: Kafka allows custom partitioning logic to define how messages should be routed based on specific criteria. This is useful for complex routing requirements not covered by the default methods.

This routing mechanism optimizes performance, maintains message order when needed, and supports scalability and fault tolerance.
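The core of key-based routing is "hash the key, take it modulo the partition count". The sketch below shows that idea with a hypothetical `KeyPartitioner` class; it uses `Arrays.hashCode` purely as a stand-in hash, so the partition numbers it produces will not match those chosen by Kafka's built-in partitioner, which uses a different hash function over the serialized key.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Hypothetical illustration of key-based routing; not Kafka's actual partitioner.
class KeyPartitioner {
    private final int partitionCount;

    KeyPartitioner(int partitionCount) {
        if (partitionCount <= 0) {
            throw new IllegalArgumentException("partitionCount must be positive");
        }
        this.partitionCount = partitionCount;
    }

    // The same key always yields the same partition in [0, partitionCount).
    int partitionFor(String key) {
        int hash = Arrays.hashCode(key.getBytes(StandardCharsets.UTF_8));
        return Math.floorMod(hash, partitionCount); // floorMod avoids negative results
    }
}
```

One consequence worth noting: changing the partition count changes the mapping, so keys that used to share a partition may be split apart, and ordering across that change is not preserved.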

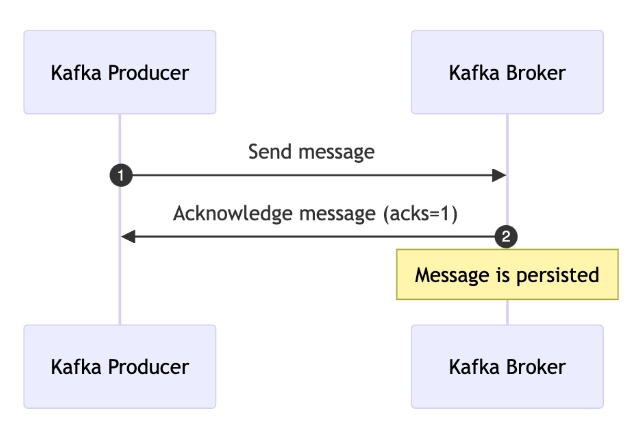

Producers

Kafka producers are responsible for publishing records to topics. They can specify acknowledgment settings to control when a message is considered successfully sent:

- acks=0: No acknowledgment is required, providing the lowest latency but no delivery guarantees.

- acks=1: The leader broker acknowledges the message, ensuring it has been written to the leader's log.

- acks=all: All in-sync replicas must acknowledge the message, providing the highest level of durability and fault tolerance.

These configurations allow Kafka producers to meet various application requirements for message delivery and persistence, ensuring that data is reliably stored and available for consumers.

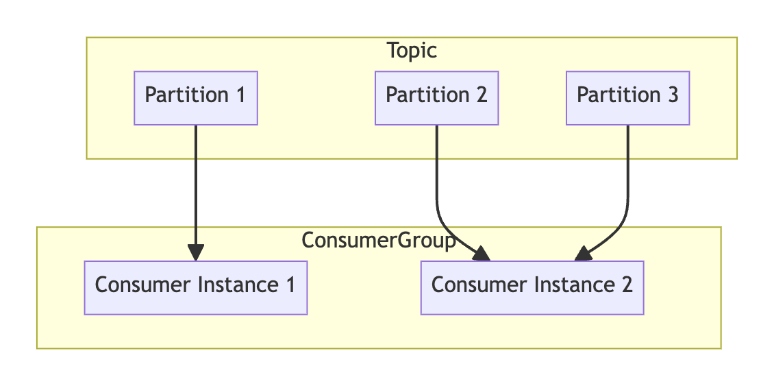

Consumers

Kafka consumers read data from Kafka topics. A key concept in Kafka's consumer model is the consumer group. A consumer group consists of multiple consumers working together to read data from a topic. Each consumer in the group reads from different partitions of the topic, allowing for parallel processing and increased throughput.

When a consumer fails or leaves the group, Kafka automatically reassigns its partitions to the remaining consumers, ensuring fault tolerance and high availability. This dynamic rebalancing of partition assignments keeps the workload distributed among the consumers in the group, optimizing resource utilization and processing efficiency.

Kafka's ability to manage high volumes of data, ensure fault tolerance, and maintain message order within a partition makes it an ideal choice for serving as a messaging backbone in distributed systems, particularly in environments requiring real-time data processing and robust concurrency management.

Messaging System Using Kafka

Incorporating Apache Kafka as the messaging backbone of our system allows us to address various challenges associated with message handling, durability, and scalability. Let's explore how Kafka aligns with our requirements and facilitates the implementation of an Actor model-based system.

One-at-a-Time Message Handling

To ensure that messages for a particular transfer are handled sequentially and without overlap, we can create a Kafka topic named transfer.commands with multiple partitions. Each message's key will be the transferId, ensuring that all commands related to a particular transfer are routed to the same partition. Since, within a consumer group, a partition is consumed by only one consumer at a time, this setup ensures one-at-a-time message handling for each transfer.

Durable Message Store

Kafka's architecture is designed to ensure message durability by persisting messages across its distributed brokers. Here are some key Kafka configurations that enhance message durability and reliability:

- retention.ms: Specifies how long Kafka retains a record before it is deleted. This is the topic-level setting; the broker-level default is log.retention.ms. For example, setting log.retention.ms=604800000 retains messages for 7 days.

- log.segment.bytes: Controls the size of each log segment. For instance, setting log.segment.bytes=1073741824 creates a new segment after 1 GB.

- min.insync.replicas: Defines the minimum number of replicas that must acknowledge a write before it is considered successful. Setting min.insync.replicas=2 ensures that at least two replicas confirm the write.

- acks: A producer setting that specifies the number of acknowledgments required. Setting acks=all ensures that all in-sync replicas must acknowledge the message, providing high durability.

Example configurations for ensuring message durability:

    # Example 1: Retention policy (broker settings)
    # Retain messages for 7 days
    log.retention.ms=604800000
    # 1 GB segment size
    log.segment.bytes=1073741824

    # Example 2: Replication and acknowledgment
    # At least 2 replicas must acknowledge a write (broker or topic setting)
    min.insync.replicas=2
    # Producer requires acknowledgment from all in-sync replicas
    acks=all

    # Example 3: Producer configuration
    # Ensures high durability
    acks=all
    # Number of retries in case of transient failures
    retries=5

Revealing the Model: The Actor Pattern

In our system, the processor we previously discussed will now be called an Actor. The Actor model is well-suited for managing state and handling commands asynchronously, making it a natural fit for our Kafka-based system.

Core Concepts of the Actor Model

- Actors as fundamental units: Every Actor is responsible for receiving messages, processing them, and modifying its internal state. This aligns with our use of processors to handle commands for each transfer.

- Asynchronous message passing: Communication between Actors happens through Kafka topics, allowing for decoupled, asynchronous interactions.

- State isolation: Each Actor maintains its own state, which can only be modified by sending a command to the Actor. This ensures that state changes are controlled and sequential.

- Sequential message processing: Kafka ensures that messages within a partition are processed in order, which supports the Actor model's need for sequential handling of commands.

- Location transparency: Actors can be distributed across different machines or locations, enhancing scalability and fault tolerance.

- Fault tolerance: Kafka's built-in fault-tolerance mechanisms, combined with the Actor model's distributed nature, ensure that the system can handle failures gracefully.

- Scalability: The system's scalability is determined by the number of Kafka partitions. For instance, with 64 partitions, the system can process up to 64 commands concurrently. Kafka's architecture allows us to scale by adding more partitions and consumers as needed.

Implementing the Actor Model in the System

We start by defining a simple interface for managing the state:

    // K = key type, S = state type
    interface StateStorage<K, S> {
        S newState();
        S get(K key);
        void put(K key, S state);
    }

Next, we define the Actor interface:

    // S = state type, C = command type
    interface Actor<S, C> {
        S receive(S state, C command);
    }

To integrate Kafka, we need helper interfaces to read the key and value from Kafka records:

    interface KafkaMessageKeyReader<K> {
        K readKey(byte[] key);
    }

    interface KafkaMessageValueReader<V> {
        V readValue(byte[] value);
    }

Finally, we implement the KafkaActorConsumer, which manages the interaction between Kafka and our Actor system:

    import java.util.function.Supplier;
    import org.apache.kafka.clients.consumer.ConsumerRecord;

    class KafkaActorConsumer<K, S, C> {
        private final Supplier<Actor<S, C>> actorFactory;
        private final StateStorage<K, S> storage;
        private final KafkaMessageKeyReader<K> keyReader;
        private final KafkaMessageValueReader<C> valueReader;

        public KafkaActorConsumer(Supplier<Actor<S, C>> actorFactory, StateStorage<K, S> storage,
                KafkaMessageKeyReader<K> keyReader, KafkaMessageValueReader<C> valueReader) {
            this.actorFactory = actorFactory;
            this.storage = storage;
            this.keyReader = keyReader;
            this.valueReader = valueReader;
        }

        public void consume(ConsumerRecord<byte[], byte[]> record) {
            // (1) Read the key and value from the record
            K messageKey = keyReader.readKey(record.key());
            C messageValue = valueReader.readValue(record.value());

            // (2) Get the current state from the storage
            S state = storage.get(messageKey);
            if (state == null) {
                state = storage.newState();
            }

            // (3) Get the actor instance
            Actor<S, C> actor = actorFactory.get();

            // (4) Process the message
            S newState = actor.receive(state, messageValue);

            // (5) Save the new state
            storage.put(messageKey, newState);
        }
    }

This implementation handles the consumption of messages from Kafka, processes them using an Actor, and updates the state accordingly. Additional considerations like error handling, logging, and tracing can be added to enhance the robustness of this system.
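The load, receive, and store cycle can be exercised without a broker. The sketch below repeats the two interfaces under different names (`StateStore`, `SimpleActor`) so that it is self-contained, and adds a hypothetical in-memory store and runner; all of these names are assumptions for illustration, and a `HashMap` is of course not durable storage.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

interface StateStore<K, S> {
    S newState();
    S get(K key);
    void put(K key, S state);
}

interface SimpleActor<S, C> {
    S receive(S state, C command);
}

// Hypothetical in-memory store, useful for tests only.
class InMemoryStateStore<K, S> implements StateStore<K, S> {
    private final Map<K, S> states = new HashMap<>();
    private final Supplier<S> initialState;

    InMemoryStateStore(Supplier<S> initialState) {
        this.initialState = initialState;
    }

    public S newState() { return initialState.get(); }
    public S get(K key) { return states.get(key); }
    public void put(K key, S state) { states.put(key, state); }
}

// Hypothetical runner that performs the same steps as the Kafka consumer,
// minus deserialization: load state, let the actor handle the command, store the result.
class LocalActorRunner<K, S, C> {
    private final StateStore<K, S> store;
    private final SimpleActor<S, C> actor;

    LocalActorRunner(StateStore<K, S> store, SimpleActor<S, C> actor) {
        this.store = store;
        this.actor = actor;
    }

    void handle(K key, C command) {
        S state = store.get(key);
        if (state == null) {
            state = store.newState();
        }
        store.put(key, actor.receive(state, command));
    }
}
```

Since the actor is just a function of state and command, it can be unit-tested in isolation, and the storage implementation can be swapped without touching the business logic.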

By combining Kafka's powerful messaging capabilities with the Actor model's structured approach to state management and concurrency, we can build a highly scalable, resilient, and efficient system for handling financial transactions. This setup ensures that each command is processed correctly, sequentially, and with full durability guarantees.

Advanced Topics

Outbox Pattern

The Outbox Pattern is a critical design pattern for ensuring reliable message delivery in distributed systems, particularly when integrating PostgreSQL with Kafka. The primary issue it addresses is the risk of inconsistencies where a transaction might be committed in PostgreSQL, but the corresponding message fails to be delivered to Kafka due to a network issue or system failure. This can lead to a situation where the database state and the message stream are out of sync.

The Outbox Pattern solves this problem by storing messages in a local outbox table within the same PostgreSQL transaction as the state change. This ensures that a message is sent to Kafka only after the transaction is successfully committed. Because the relay retries until the broker acknowledges the message, delivery is at-least-once; paired with idempotent consumers, this prevents message loss and keeps the database and the message stream consistent.

Implementing the Outbox Pattern

With the Outbox Pattern in place, the KafkaActorConsumer and Actor implementations can be adjusted to accommodate this pattern:

    record OutboxMessage(UUID id, String topic, byte[] key, Map<String, byte[]> headers, byte[] payload) {}

    record ActorReceiveResult<S>(S newState, List<OutboxMessage> messages) {}

    interface Actor<S, C> {
        ActorReceiveResult<S> receive(S state, C command);
    }

    class KafkaActorConsumer<K, S, C> {
        public void consume(ConsumerRecord<byte[], byte[]> record) {
            // ... other steps

            // (5) Process the message
            var result = actor.receive(state, messageValue);

            // (6) Save the new state and the outbox messages atomically
            persist(messageKey, result.newState(), result.messages());
        }

        // With proxy-based transaction management, this method may need to live
        // in a separate bean for the annotation to take effect.
        @Transactional
        public void persist(K stateKey, S state, List<OutboxMessage> messages) {
            // (7) Persist the new state
            storage.put(stateKey, state);

            // (8) Persist the outbox messages
            for (OutboxMessage message : messages) {
                outboxTable.save(message);
            }
        }
    }

In this implementation:

- The Actor now returns an ActorReceiveResult containing the new state and a list of outbox messages that need to be sent to Kafka.

- The KafkaActorConsumer processes these messages and persists both the state and the messages in the outbox table within the same transaction.

- After the transaction is committed, an external process (e.g., Debezium) reads from the outbox table and sends the messages to Kafka, ensuring at-least-once delivery.
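The relay half of the pattern can be sketched in memory. The hypothetical `OutboxRelay` below keeps a list as a stand-in for the outbox table and marks a row as sent only after the publisher returns without throwing; it is a simplified polling relay for illustration and does not reflect how Debezium reads the database log.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical in-memory stand-in for an outbox table plus a polling relay.
class OutboxRelay {
    private static final class Row {
        final String payload;
        boolean sent;

        Row(String payload) {
            this.payload = payload;
        }
    }

    private final List<Row> table = new ArrayList<>();

    // In a real system this insert shares a transaction with the state update.
    void save(String payload) {
        table.add(new Row(payload));
    }

    // Publishes unsent rows in insertion order and returns how many were published.
    // Stops at the first failure so that later rows are not sent out of order.
    int relayOnce(Consumer<String> publisher) {
        int published = 0;
        for (Row row : table) {
            if (row.sent) {
                continue;
            }
            try {
                publisher.accept(row.payload);
            } catch (RuntimeException e) {
                break; // row stays unsent; the next run retries it
            }
            row.sent = true;
            published++;
        }
        return published;
    }
}
```

If the relay crashed after publishing but before marking the row as sent, the next run would publish the same message again, which is why consumers of an outbox-fed topic are usually written to tolerate duplicates.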

Toxic Messages and Dead-Letters

In distributed systems, some messages might be malformed or cause errors that prevent successful processing. These problematic messages are often referred to as "toxic messages." To handle such scenarios, we can implement a dead-letter queue (DLQ). A DLQ is a special queue where unprocessable messages are sent for further investigation. This approach ensures that these messages don't block the processing of other messages and allows the root cause to be addressed without losing data.

Here's a basic implementation for handling toxic messages:

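    class ToxicMessage extends Exception {}

    class LogicException extends ToxicMessage {}

    class SerializationException extends ToxicMessage {}

    interface ExceptionDecider {
        boolean isToxic(Throwable e);
    }

    class DefaultExceptionDecider implements ExceptionDecider {
        public boolean isToxic(Throwable e) {
            return e instanceof ToxicMessage;
        }
    }

    interface DeadLetterProducer {
        void send(ConsumerRecord<?, ?> record, Throwable e);
    }

    class Consumer {
        private final ExceptionDecider exceptionDecider;
        private final DeadLetterProducer deadLetterProducer;

        Consumer(ExceptionDecider exceptionDecider, DeadLetterProducer deadLetterProducer) {
            this.exceptionDecider = exceptionDecider;
            this.deadLetterProducer = deadLetterProducer;
        }

        void consume(ConsumerRecord<?, ?> record) throws Exception {
            try {
                // process record
            } catch (Exception e) {
                if (exceptionDecider.isToxic(e)) {
                    // retrying cannot help: park the record in the DLQ
                    deadLetterProducer.send(record, e);
                } else {
                    // rethrow so the caller retries the operation
                    throw e;
                }
            }
        }
    }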

In this implementation:

- ToxicMessage: A base exception class for any errors deemed "toxic," meaning they shouldn't be retried but rather sent to the DLQ.

- DefaultExceptionDecider: Decides whether an exception is toxic and should trigger sending the message to the DLQ.

- DeadLetterProducer: Responsible for sending messages to the DLQ.

- Consumer: Processes messages and uses the ExceptionDecider and DeadLetterProducer to handle errors appropriately.
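The routing decision can also be tried out without Kafka. The sketch below is a hypothetical in-memory variant: toxic failures go straight to a dead-letter list, while other failures are retried. The bounded retry count is an assumption added here; the listing above simply rethrows and leaves the retry policy to the caller.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical marker for failures that retrying cannot fix (e.g. a malformed payload).
class ToxicMessageException extends RuntimeException {
    ToxicMessageException(String message) {
        super(message);
    }
}

class DeadLetterRouter {
    private final int maxAttempts;
    private final List<String> deadLetters = new ArrayList<>(); // stand-in for a DLQ topic

    DeadLetterRouter(int maxAttempts) {
        this.maxAttempts = maxAttempts;
    }

    // Returns true if the message was processed, false if it was dead-lettered.
    boolean handle(String message, Consumer<String> processor) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                processor.accept(message);
                return true;
            } catch (ToxicMessageException e) {
                break; // retrying cannot help: go straight to the DLQ
            } catch (RuntimeException e) {
                // assumed transient: loop around and try again
            }
        }
        deadLetters.add(message);
        return false;
    }

    List<String> deadLetters() {
        return List.copyOf(deadLetters);
    }
}
```

Sending a message to the dead-letter list after the retry budget is exhausted keeps one stubborn message from blocking everything queued behind it, at the cost of needing a separate process to inspect and replay dead letters.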

Conclusion

By leveraging Kafka as the messaging backbone and implementing the Actor model, we can build a robust, scalable, and fault-tolerant financial transaction system. The Actor model offers a straightforward approach to managing state and concurrency, while Kafka provides the tools necessary for reliable message handling, durability, and partitioning.

The Actor model is not a specialized or complex framework but rather a set of simple abstractions that can significantly improve the scalability and reliability of our system. Kafka's built-in features, such as message durability, ordering, and fault tolerance, naturally align with the principles of the Actor model, enabling us to implement these concepts efficiently and effectively without requiring additional frameworks.

Incorporating advanced patterns like the Outbox Pattern and handling toxic messages with DLQs further enhances the system's reliability, ensuring that messages are processed consistently and that errors are managed gracefully. This comprehensive approach ensures that our financial transaction system remains reliable, scalable, and capable of handling complex workflows.