In the last article, I laid out the structure of a basic RAG app. If you missed it, I recommend reading that article first. It sets the foundation from which we can improve our RAG system. Also in that last article, I listed some common pitfalls that RAG applications tend to fail on. We will be tackling some of them with advanced techniques in this article.

To recap, a basic RAG app uses a separate knowledge base that helps the LLM answer the user's questions by providing it with additional context. This is also called a retrieve-then-read approach.

The Problem

To answer the user's question, our RAG app retrieves appropriate context based on the query itself. It finds chunks of text in the vector DB whose content is similar to whatever the user is asking. The same applies to other knowledge bases, such as search engines. The problem is that the chunk of information where the answer lies might not be similar to what the user is asking. The question could be badly written, or phrased differently from what we expect. And if our RAG app can't find the information needed to answer the question, it won't answer correctly.

There are many ways to solve this problem, but in this article we'll look at query rewriting.

What Is Query Rewriting?

Simply put, query rewriting means we rewrite the user query in our own words so that our RAG app knows how best to answer it. Instead of just doing retrieve-then-read, our app follows a rewrite-retrieve-read approach.
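To make the three steps concrete, here is a minimal sketch of a rewrite-retrieve-read loop. It is not the notebook's code: the word-overlap `retrieve` function is a toy stand-in for a vector DB lookup, and `rewrite` and `read` are hypothetical callables you would back with LLM calls.

```python
import re
from typing import Callable


def tokenize(text: str) -> set[str]:
    # Lowercased word tokens: a crude stand-in for a real embedding model.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    # Rank chunks by the number of tokens they share with the query.
    q = tokenize(query)
    return sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)[:k]


def rewrite_retrieve_read(
    query: str,
    chunks: list[str],
    rewrite: Callable[[str], str],
    read: Callable[[str, list[str]], str],
    k: int = 1,
) -> str:
    rewritten = rewrite(query)                # 1. rewrite the user query
    context = retrieve(rewritten, chunks, k)  # 2. retrieve with the rewritten query
    return read(query, context)               # 3. answer the original question


# Demo with stubbed model calls and hypothetical data.
chunks = [
    "Benchmarks such as MMLU measure general knowledge.",
    "Open-source tools can automate the evaluation of LLM applications.",
]
answer = rewrite_retrieve_read(
    "hey, what do people use to check their llm stuff?",
    chunks,
    rewrite=lambda q: "tools to evaluate LLM applications",
    read=lambda q, ctx: f"Based on: {ctx[0]}",
)
```

Note that the reader still receives the user's original question; only retrieval uses the rewritten one. That is one reasonable design choice, not the only one.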

We use a generative AI model to rewrite the question. This can be a large model, like (or the same as) the one we use to answer the question in the final step. It can also be a smaller model, specifically trained to perform this task.

Query rewriting can also take many different forms depending on the needs of the app. Most of the time, basic query rewriting will be enough. But depending on the complexity of the questions we need to answer, we might need more advanced techniques like HyDE, multi-querying, or step-back questions. These are covered in more detail later in this article.

Why Does It Work?

Query rewriting usually improves performance in any knowledge-intensive RAG app. This is because RAG applications are sensitive to the phrasing and specific keywords of the query. Paraphrasing the query helps in the following scenarios:

- It restructures oddly written questions so they can be better understood by our system.

- It removes context given by the user that is irrelevant to the query.

- It can introduce common keywords, which give it a better chance of matching the correct context.

- It can split complex questions into separate sub-questions, which are easier to answer individually, each with its own corresponding context.

- It can handle questions that require multiple levels of reasoning by generating a step-back question, a higher-level, more conceptual version of the user's question. It then uses both the original and the step-back questions to retrieve context.

- It can use more advanced query rewriting techniques like HyDE to generate hypothetical documents that answer the question. These hypothetical documents better capture the intent of the question and are more likely to match the embeddings of the chunks that contain the answer in the vector DB.
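The sensitivity to phrasing is easy to see even with a toy similarity measure. The sketch below scores a rambling question and a rewritten one against the same chunk using bag-of-words cosine similarity. Word counts are an assumption made to keep the example dependency-free; dense embedding models are far less literal, but the idea of rewriting a query to better match the stored text is the same.

```python
import math
import re
from collections import Counter


def bow(text: str) -> Counter:
    # Bag-of-words term counts: a toy stand-in for a dense embedding.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Counter returns 0 for missing keys, so absent terms contribute nothing.
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical chunk and queries.
chunk = "Tools for evaluating LLM applications include open-source evaluation frameworks."
raw_query = "my boss keeps asking me stuff, how do I even check if the llm thing works??"
rewritten_query = "What tools are used for evaluating LLM applications?"

raw_score = cosine(bow(raw_query), bow(chunk))
rewritten_score = cosine(bow(rewritten_query), bow(chunk))
```

The rewritten query shares several content words with the chunk (tools, evaluating, LLM, applications), while the raw query shares only one, so its score is much higher.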

How To Implement Query Rewriting

We have established that there are different methods for query rewriting depending on the complexity of the questions. We will briefly discuss how to implement each of them. Afterwards, we'll look at a real example comparing the results of a basic RAG app and a RAG app with query rewriting. You can also follow all the examples in the article's Google Colab notebook.

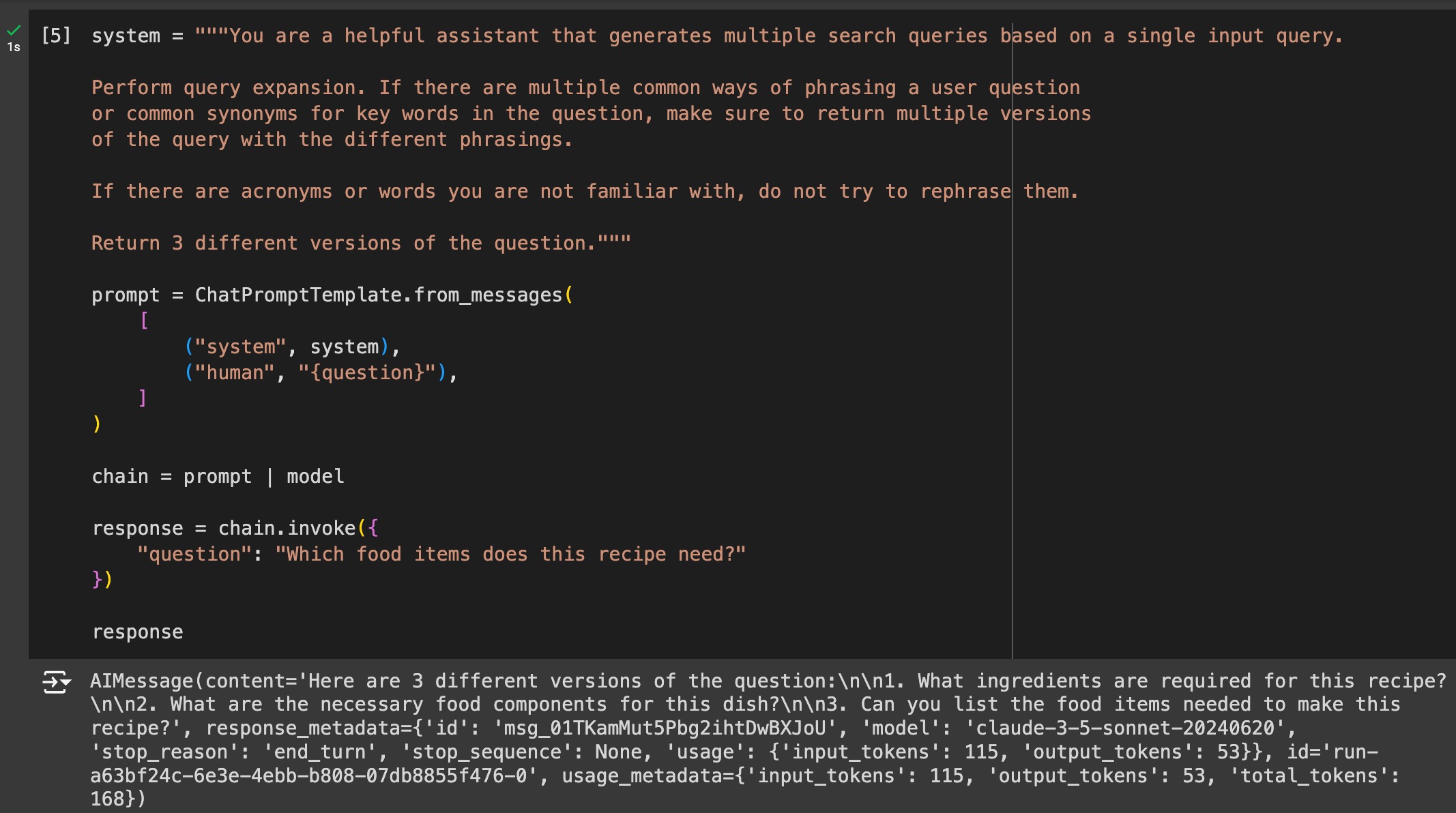

Zero-Shot Query Rewriting

This is the simplest form of query rewriting. "Zero-shot" refers to prompting the LLM to perform a task without giving it any examples of that task.
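A zero-shot rewrite boils down to a single instruction plus the user's question. The prompt wording below is a hypothetical example (the notebook's exact prompt may differ), and `llm` is any callable that takes a prompt string and returns the model's text.

```python
from typing import Callable

# Hypothetical prompt wording: an instruction and the question, no examples.
ZERO_SHOT_TEMPLATE = (
    "Rewrite the following user question so that it is clear, specific and "
    "well suited for searching a knowledge base. Return only the rewritten question.\n\n"
    "Question: {question}\n"
    "Rewritten question:"
)


def zero_shot_rewrite(question: str, llm: Callable[[str], str]) -> str:
    # No worked examples are included in the prompt: that is what makes it zero-shot.
    prompt = ZERO_SHOT_TEMPLATE.format(question=question)
    return llm(prompt).strip()


# Demo with a stubbed LLM that records the prompt it receives.
captured: list[str] = []


def fake_llm(prompt: str) -> str:
    captured.append(prompt)
    return "  What tools exist for evaluating LLM applications?  "


rewritten = zero_shot_rewrite("how do i check my llm app??", fake_llm)
```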

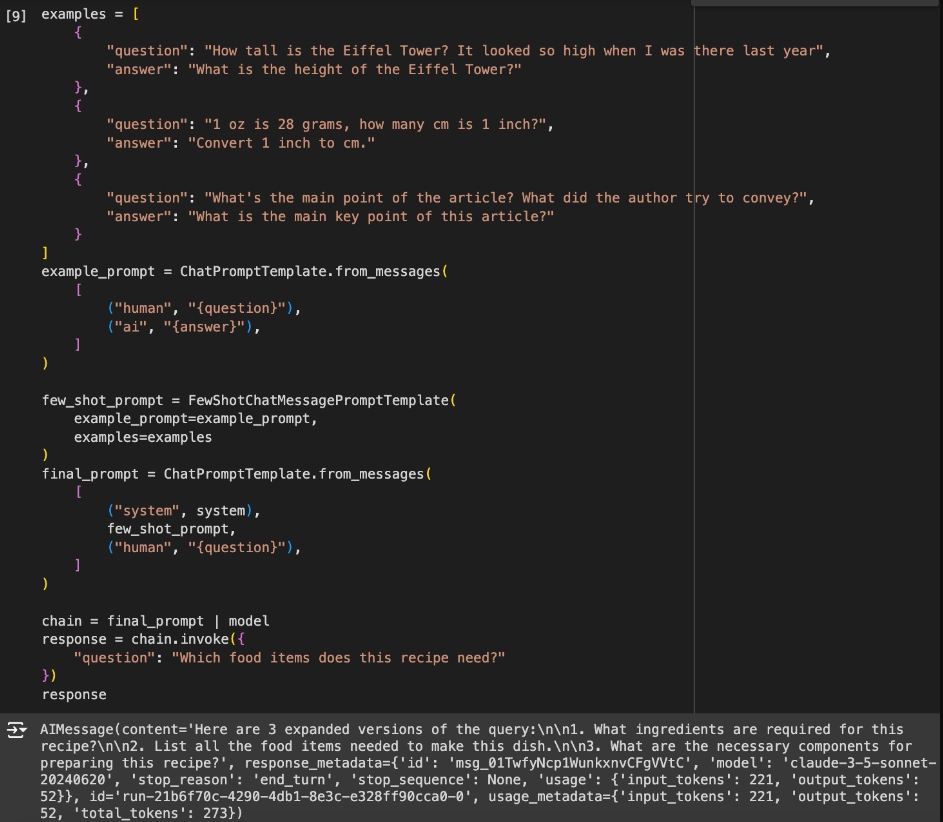

Few-Shot Query Rewriting

For a slightly better result, at the cost of a few more tokens per rewrite, we can give the model some examples of how we want the rewrite to be done.
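A few-shot prompt prepends worked examples before the real question. The example pairs and wording below are hypothetical; in practice you would pick pairs that resemble your own users' queries. The extra tokens mentioned above are exactly these example lines.

```python
from typing import Callable

# Hypothetical (original, rewritten) example pairs.
EXAMPLES = [
    ("whats the deal w/ vector dbs", "What is a vector database and how is it used?"),
    ("my model makes stuff up, fix?", "How can LLM hallucinations be reduced?"),
]


def build_few_shot_prompt(question: str, examples: list[tuple[str, str]]) -> str:
    lines = ["Rewrite each user question so it is clear and specific for document search.", ""]
    for original, rewritten in examples:
        lines.append(f"Question: {original}")
        lines.append(f"Rewritten question: {rewritten}")
        lines.append("")
    # The real question goes last, with the answer slot left open for the model.
    lines.append(f"Question: {question}")
    lines.append("Rewritten question:")
    return "\n".join(lines)


def few_shot_rewrite(question: str, llm: Callable[[str], str]) -> str:
    return llm(build_few_shot_prompt(question, EXAMPLES)).strip()


prompt = build_few_shot_prompt("how do i check my llm app??", EXAMPLES)
```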

Trainable Rewriter

We can fine-tune a pre-trained model to perform the query rewriting task. Instead of relying on examples, we can teach it how query rewriting should be done to achieve the best results in context retrieval. We can also further train it using reinforcement learning so it learns to recognize problematic queries and avoid toxic and harmful phrasing. Alternatively, we can use an open-source model that someone else has already trained for query rewriting.
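One way to turn "best results in context retrieval" into a training signal is to reward a rewrite when it retrieves the chunk known to contain the answer. The sketch below assumes a labelled set of (question, gold chunk) pairs and uses a toy word-overlap retriever; `retrieval_reward` is a hypothetical helper that only computes the reward, and the reinforcement learning update itself is out of scope.

```python
import re


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int) -> list[str]:
    # Toy lexical retriever standing in for the real vector DB.
    q = tokenize(query)
    return sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)[:k]


def retrieval_reward(rewritten: str, gold_chunk: str, chunks: list[str], k: int = 1) -> float:
    """Hypothetical reward: 1.0 if the rewrite retrieves the gold chunk in the top k, else 0.0."""
    return 1.0 if gold_chunk in retrieve(rewritten, chunks, k) else 0.0


# Hypothetical corpus; the second chunk is the one that answers the question.
chunks = [
    "Benchmarks such as MMLU measure general knowledge.",
    "Open-source tools can automate the evaluation of LLM applications.",
]
good = retrieval_reward("tools to evaluate LLM applications", chunks[1], chunks)
bad = retrieval_reward("general knowledge benchmarks", chunks[1], chunks)
```

A reward like this only checks retrieval; a fuller setup could also score the final answer produced by the reader.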

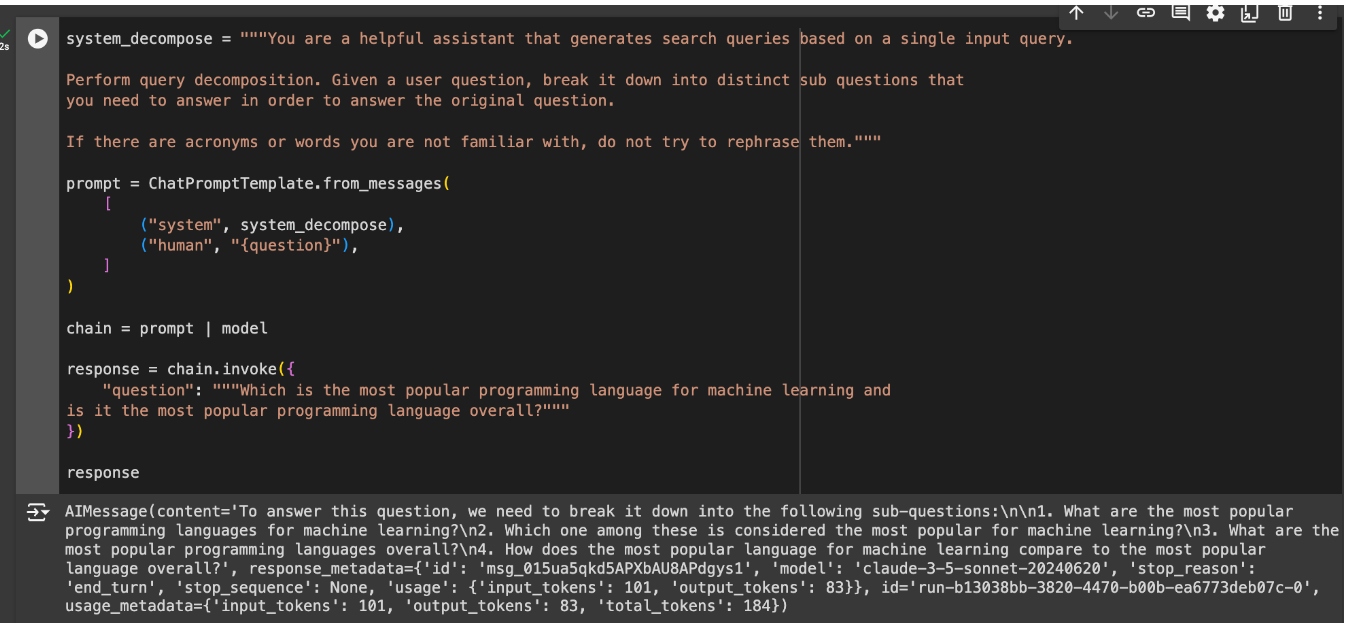

Sub-Queries

If the user query contains multiple questions, context retrieval becomes difficult. Each question probably needs different information, and we are unlikely to get all of it by using all the questions together as the basis for retrieval. To solve this problem, we can decompose the input into multiple sub-queries and perform retrieval for each sub-query.
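The decomposition step can be sketched as: ask the LLM for one sub-question per line, strip list markers, retrieve for each sub-question, and merge the results without duplicates. The prompt wording, the line-based output format, and the toy word-overlap retriever are all assumptions for illustration.

```python
import re
from typing import Callable


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    # Toy lexical retriever standing in for the vector DB.
    q = tokenize(query)
    return sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)[:k]


# Hypothetical decomposition prompt.
DECOMPOSE_PROMPT = (
    "Break the following question into independent sub-questions, one per line. "
    "If it is already a single question, return it unchanged.\n\nQuestion: {question}"
)


def decompose(question: str, llm: Callable[[str], str]) -> list[str]:
    raw = llm(DECOMPOSE_PROMPT.format(question=question))
    # Strip list markers such as "1.", "2)" or "-" and drop empty lines.
    subs = [re.sub(r"^\s*(?:\d+[.)]|[-*])\s*", "", line).strip() for line in raw.splitlines()]
    return [s for s in subs if s]


def retrieve_for_sub_queries(
    question: str, chunks: list[str], llm: Callable[[str], str], k: int = 1
) -> list[str]:
    context: list[str] = []
    for sub in decompose(question, llm):
        for chunk in retrieve(sub, chunks, k):
            if chunk not in context:  # de-duplicate chunks shared by sub-queries
                context.append(chunk)
    return context


chunks = [
    "Benchmarks such as MMLU measure general knowledge.",
    "Open-source tools can automate the evaluation of LLM applications.",
]
fake_llm = lambda prompt: (
    "1. Which benchmarks measure general knowledge?\n2. Which tools automate LLM evaluation?"
)
context = retrieve_for_sub_queries("Which benchmarks and tools evaluate LLMs?", chunks, fake_llm)
```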

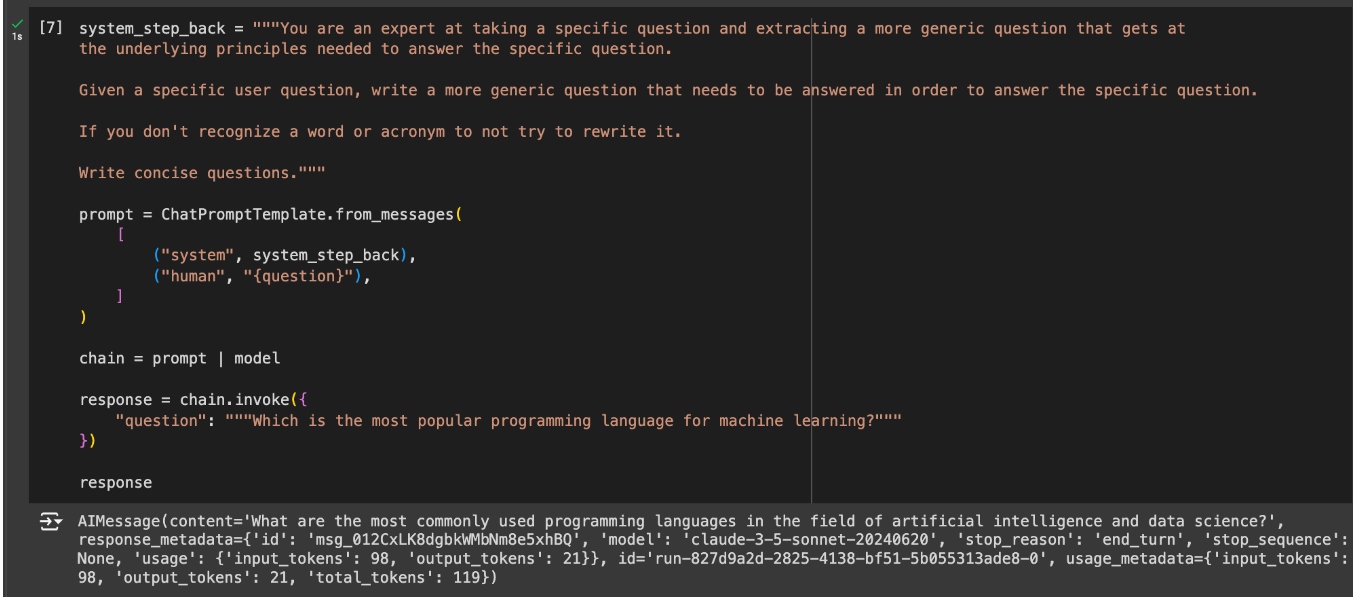

Step-Back Prompt

Some questions can be too complex for the RAG pipeline's retrieval step to capture the multiple levels of information needed to answer them. In these cases, it can help to generate one or more additional queries to use for retrieval. These queries are more generic than the original query, which enables the RAG pipeline to retrieve relevant information at multiple levels.
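Here is a sketch of the step-back pattern: generate one more generic question, then retrieve with both the original and the step-back question and merge the results. The prompt wording, the stubbed LLM, and the word-overlap retriever are assumptions for illustration.

```python
import re
from typing import Callable


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    # Toy lexical retriever standing in for the vector DB.
    q = tokenize(query)
    return sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)[:k]


# Hypothetical step-back prompt.
STEP_BACK_PROMPT = (
    "Take a step back from the specific question below and write one more generic, "
    "higher-level question that would help answer it.\n\n"
    "Question: {question}\nStep-back question:"
)


def step_back_retrieve(
    question: str, chunks: list[str], llm: Callable[[str], str], k: int = 1
) -> list[str]:
    step_back = llm(STEP_BACK_PROMPT.format(question=question)).strip()
    context: list[str] = []
    for q in (question, step_back):  # retrieve with the original AND the generic query
        for chunk in retrieve(q, chunks, k):
            if chunk not in context:
                context.append(chunk)
    return context


chunks = [
    "Retrieval quality in RAG apps is measured with precision and recall of the retrieved chunks.",
    "LLM applications are evaluated with benchmarks, human review and automated tools.",
]
question = "Which metrics check the retrieval step of my RAG app?"
fake_llm = lambda prompt: "How are LLM applications evaluated?"
context = step_back_retrieve(question, chunks, fake_llm)
```

Here the original question alone only retrieves the specific chunk about retrieval metrics; the step-back question adds the broader chunk about evaluating LLM applications.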

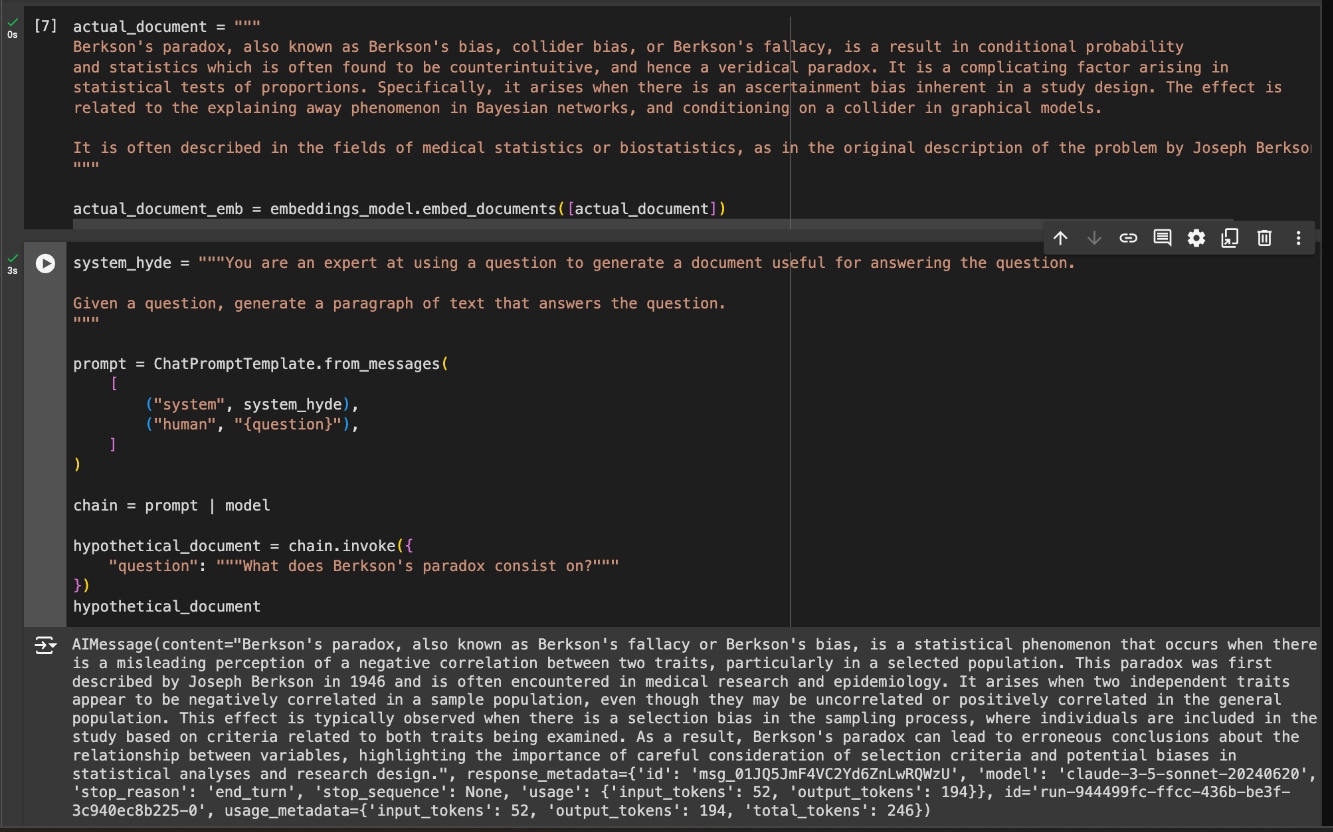

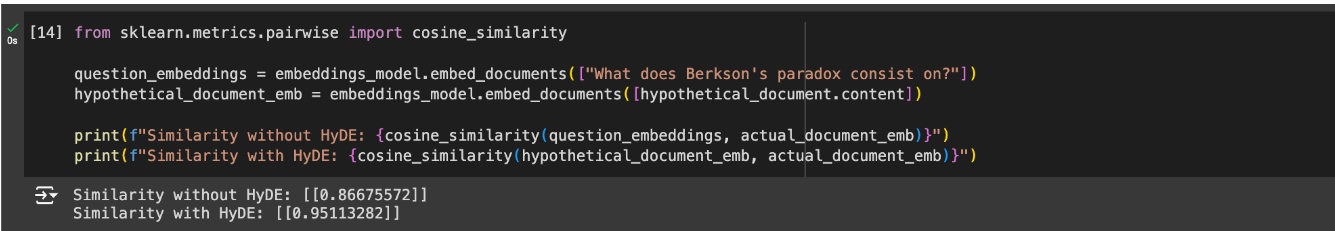

HyDE

Another method to improve how queries are matched with context chunks is Hypothetical Document Embeddings, or HyDE. Sometimes questions and answers are not very semantically similar, which can cause the RAG pipeline to miss important context chunks in the retrieval stage. However, even if the query is semantically different from its answer, a response to the query should be semantically similar to another response to the same query. The HyDE method consists of generating hypothetical context chunks that answer the query and using them to match the real context that will help the LLM answer.
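A minimal HyDE sketch, assuming a stubbed LLM and bag-of-words vectors in place of a real embedding model. The hypothetical passages are combined into one query vector; summing count vectors is equivalent to averaging them for cosine similarity, since cosine ignores scale. The generated passage's facts may be wrong; only its wording is used for matching.

```python
import math
import re
from collections import Counter
from typing import Callable


def embed(text: str) -> Counter:
    # Toy "embedding": bag-of-words counts. A real setup would use an embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical HyDE prompt.
HYDE_PROMPT = "Write a short passage that answers the question.\n\nQuestion: {question}\nPassage:"


def hyde_retrieve(
    question: str, chunks: list[str], llm: Callable[[str], str], n_docs: int = 1, k: int = 1
) -> list[str]:
    # 1. Generate hypothetical answer passages.
    fake_docs = [llm(HYDE_PROMPT.format(question=question)) for _ in range(n_docs)]
    # 2. Combine them into a single query vector.
    query_vec = sum((embed(d) for d in fake_docs), Counter())
    # 3. Match real chunks against the hypothetical answer, not the question.
    return sorted(chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True)[:k]


chunks = [
    "Hallucination happens when a language model generates fluent text that is not grounded in its sources.",
    "A chatbot interface lets users send messages and read replies.",
]
question = "Why does my chatbot confidently state wrong facts?"
fake_llm = lambda prompt: (
    "This is called hallucination: the language model generates fluent text "
    "that is not grounded in real sources."
)
plain = sorted(chunks, key=lambda c: cosine(embed(question), embed(c)), reverse=True)[:1]
hyde = hyde_retrieve(question, chunks, fake_llm)
```

Matching on the question alone picks the chunk that merely mentions "chatbot", while the hypothetical answer shares vocabulary with the chunk that actually explains the problem.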

Example: RAG With vs Without Query Rewriting

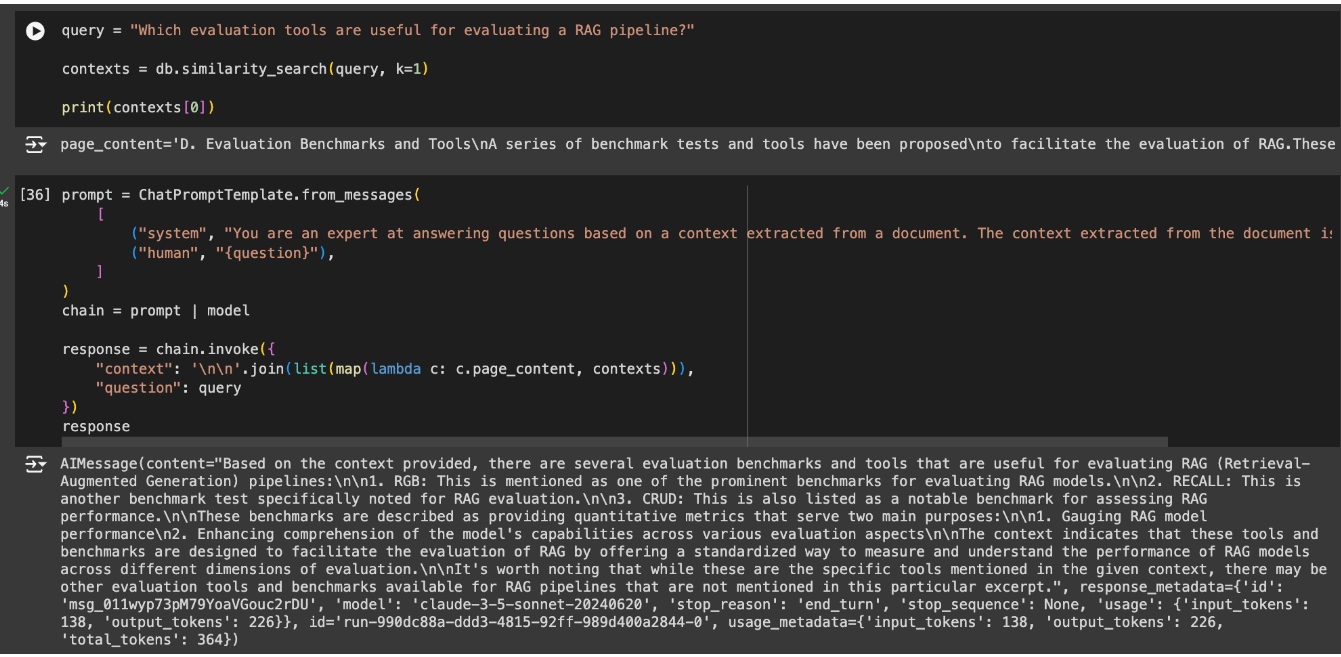

Taking the RAG pipeline from the last article, “How To Build a Basic RAG App,” we'll add query rewriting to it. We'll ask it a slightly more advanced question than last time and observe whether the response improves with query rewriting compared to without it. First, let's build the same RAG pipeline. Only this time, I'll use just the top document returned from the vector database, so the pipeline is less forgiving of missed documents.

The response is good and based on the context, but it got caught up in my asking about evaluation and missed that I was specifically asking for tools. As a result, the context used does have information on some benchmarks, but it misses the next chunk of information, which talks about tools.

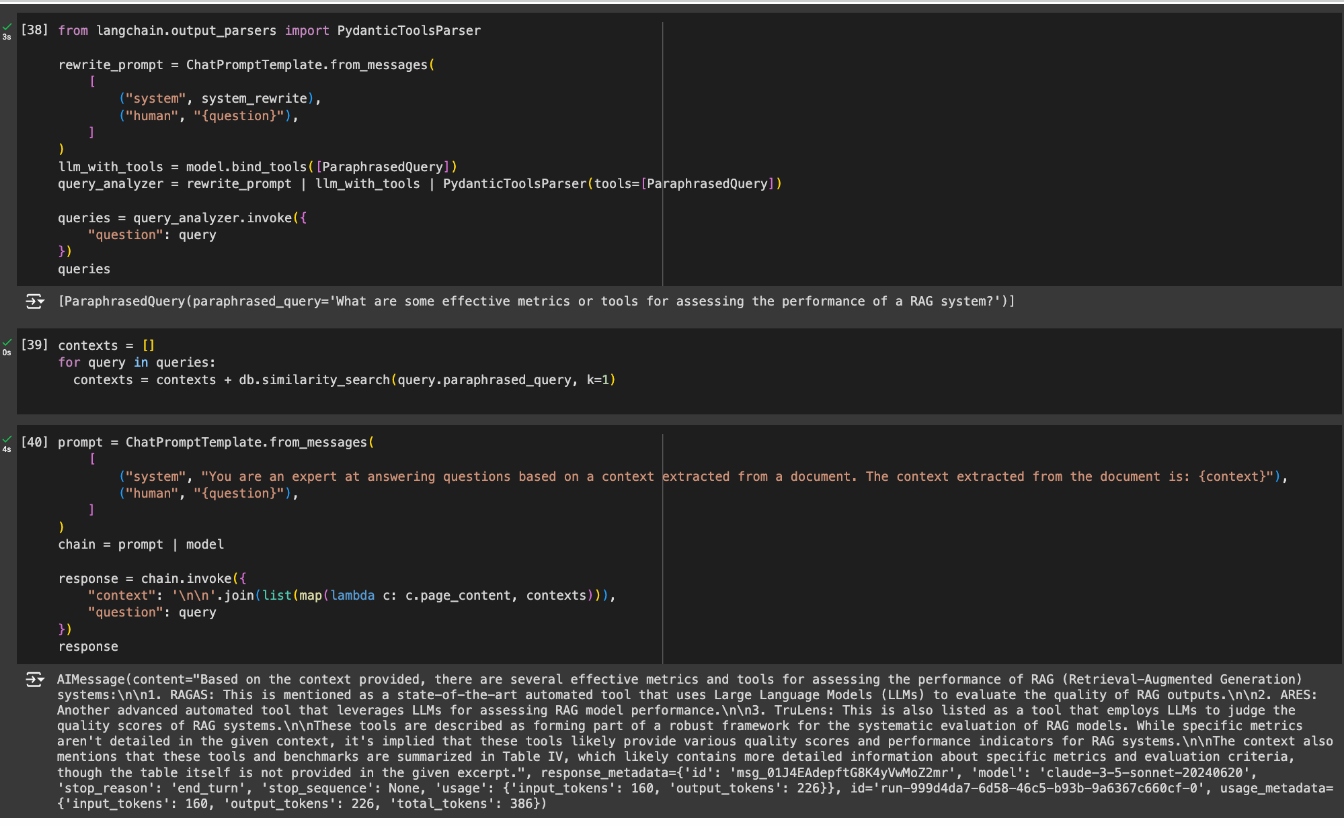

Now, let's implement the same RAG pipeline, but this time with query rewriting. Besides the query rewriting prompts we have already seen in the previous examples, I'll be using a Pydantic parser to extract and iterate over the generated alternative queries.
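The notebook uses a Pydantic parser for this step. To keep the sketch dependency-free, the version below swaps in a standard-library stand-in (`json` plus a `dataclass`) that plays the same role: validate the LLM's structured output, then iterate over the alternative queries and retrieve for each. The JSON shape, the class and function names, and the word-overlap retriever are assumptions, not the notebook's code.

```python
import json
import re
from dataclasses import dataclass


@dataclass
class AlternativeQueries:
    queries: list[str]


# Hypothetical format instructions appended to the rewriting prompt.
FORMAT_INSTRUCTIONS = 'Return JSON like {"queries": ["first query", "second query"]} and nothing else.'


def parse_alternative_queries(llm_output: str) -> AlternativeQueries:
    data = json.loads(llm_output)
    queries = data.get("queries")
    # Reject output that does not match the expected schema.
    if not isinstance(queries, list) or not all(isinstance(q, str) for q in queries):
        raise ValueError("expected a 'queries' list of strings")
    return AlternativeQueries(queries=[q.strip() for q in queries if q.strip()])


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    # Toy lexical retriever standing in for the vector DB.
    q = tokenize(query)
    return sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)[:k]


def multi_query_retrieve(queries: list[str], chunks: list[str], k: int = 1) -> list[str]:
    context: list[str] = []
    for query in queries:
        for chunk in retrieve(query, chunks, k):
            if chunk not in context:
                context.append(chunk)
    return context


chunks = [
    "Benchmarks such as MMLU measure general knowledge.",
    "Open-source tools can automate the evaluation of LLM applications.",
]
llm_output = '{"queries": ["tools for evaluating LLM applications", " benchmarks for LLMs "]}'
parsed = parse_alternative_queries(llm_output)
context = multi_query_retrieve(parsed.queries, chunks)
```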

The new query now matches the chunk of information I wanted the answer to come from, giving the LLM a much better chance of answering my question well.

Conclusion

We have taken our first step beyond basic RAG pipelines and into Advanced RAG. Query rewriting is a very simple Advanced RAG technique, but a powerful one for improving the results of a RAG pipeline. We have gone over different ways to implement it depending on the kind of questions we need to handle better. In future articles, we'll go over other Advanced RAG techniques that tackle RAG issues different from the ones covered in this article.