As a practical example, this article shows how to build an AI Product Assistant: an AI chatbot capable of answering product-related questions using data from multiple sources. With the AI Product Assistant, you can ask questions across all of your company’s data in one place.

You can use the Snowflake Cortex destination to load vector data into Snowflake, and then use Snowflake’s Cortex functions to perform Retrieval-Augmented Generation (RAG). Cortex offers general-purpose ML functions and LLM-specific functions, along with vector functions for semantic search, each backed by multiple models, all without the need to manage or host the underlying models or infrastructure.

With Airbyte’s newly launched Snowflake Cortex destination, users can create their own dedicated vector store directly within Snowflake, with no coding required. Creating a new pipeline with Snowflake Cortex takes only a few minutes, allowing you to load any of the 300-plus data sources into your new Snowflake-backed vector store.

We’ll use the following three data sources specific to Airbyte:

- Airbyte’s official documentation for the Snowflake Cortex destination

- GitHub issues, documenting planned features, known bugs, and work in progress

- Zendesk tickets comprising customer details and inquiries/requests

RAG Overview

LLMs are general-purpose tools trained on a vast corpus of data, allowing them to answer questions on almost any topic. However, if you want to extract information from your proprietary data, LLMs fall short, leading to potential inaccuracies and hallucinations. This is where RAG becomes relevant. RAG addresses this limitation by enabling LLMs to access relevant and up-to-date data from any data source. The LLM can then use this information to answer questions about your proprietary data.

Prerequisites

To follow the whole process, you will need the following accounts. You can also work with your own custom sources or with a single source:

- Airbyte Cloud instance

- Snowflake account with Cortex functions enabled

- OpenAI

- Source-specific: Google Drive, GitHub, and Zendesk

Step 1: Set Up Snowflake Cortex Destination

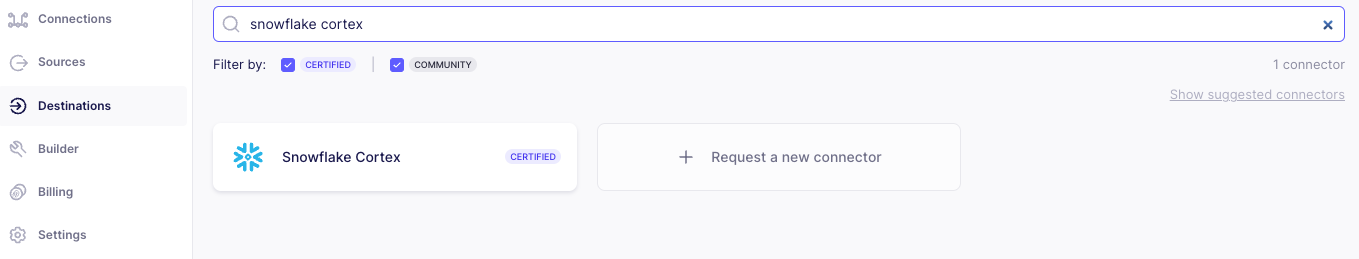

To select a destination in Airbyte Cloud, click on the Destinations tab on the left, select “New Destination” and filter for the destination you’re looking for.

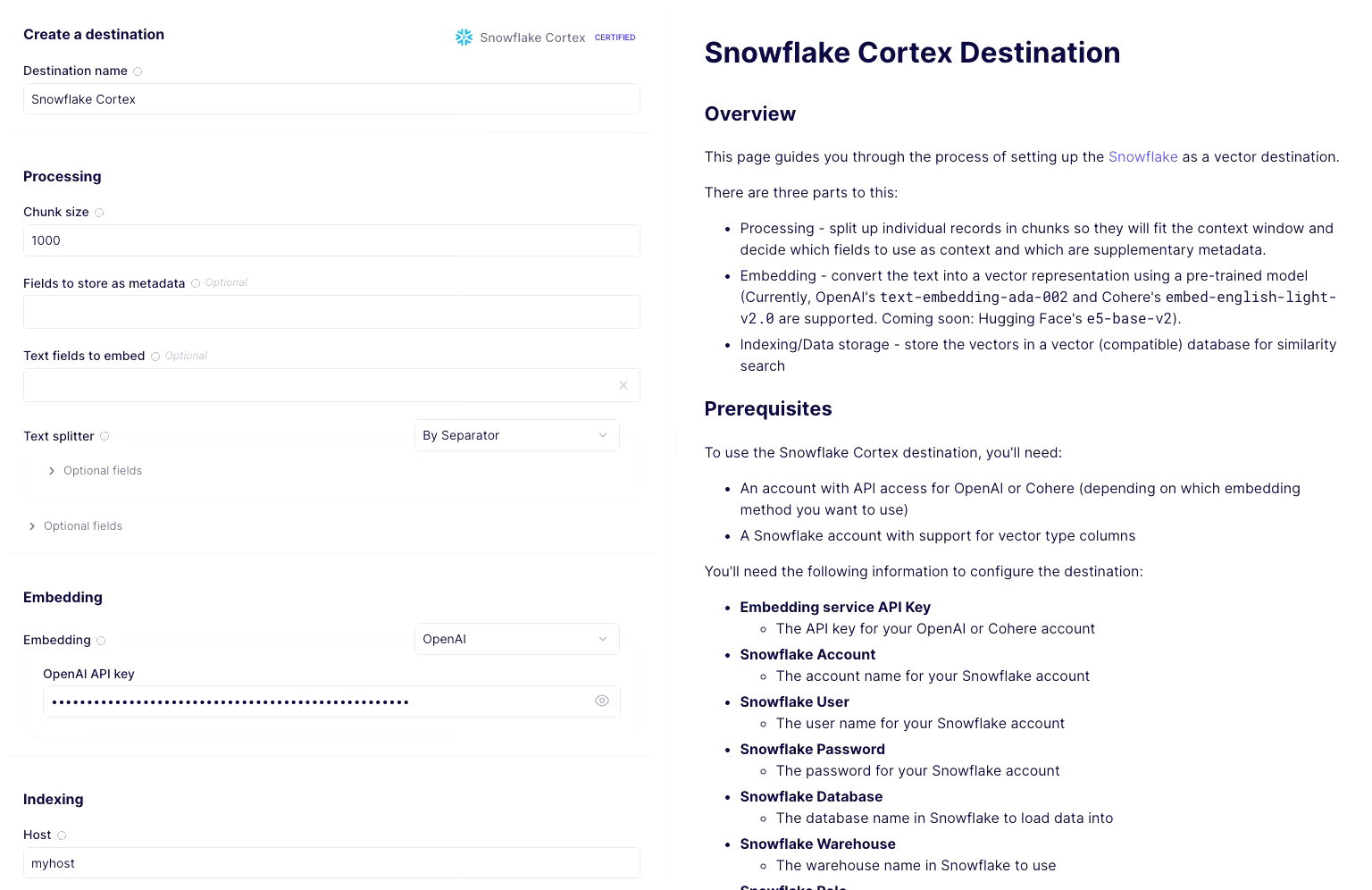

Configure the Snowflake Cortex destination in Airbyte:

- Destination name: Provide a friendly name.

- Processing: Set the chunk size to 1000. The size refers to the number of tokens, not characters, so this is roughly 4 KB of text. The best chunk size depends on the data you’re dealing with. You can leave the other options empty. You would usually set the metadata and text fields to limit the reading of records to specific columns in a given stream.

- Embedding: Select OpenAI from the dropdown and provide your OpenAI API key for powering the embedding service. You can also use any of the other available models. Just remember to embed the questions you ask the product assistant with the same model.

- Indexing: Provide credentials for connecting to your Snowflake instance.
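To make the chunk size setting more concrete, here is a minimal sketch of fixed-size chunking. It approximates tokens with whitespace-separated words, which is an assumption made purely for illustration; the splitter and tokenizer the destination actually uses are not shown in this article and may behave differently. The function name `chunk_text` is hypothetical.

```python
from typing import List


def chunk_text(text: str, chunk_size: int = 1000) -> List[str]:
    """Split text into chunks of at most `chunk_size` tokens.

    Assumption: a "token" is a whitespace-separated word here, which only
    approximates how a real tokenizer counts tokens.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    words = text.split()
    # Step through the word list in strides of chunk_size.
    return [
        " ".join(words[start:start + chunk_size])
        for start in range(0, len(words), chunk_size)
    ]
```

A smaller chunk size produces more, shorter chunks (more precise matches, less context per match); a larger one does the opposite, which is why the best value depends on your data.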

Step 2: Set Up Google Drive, GitHub, and Zendesk Sources

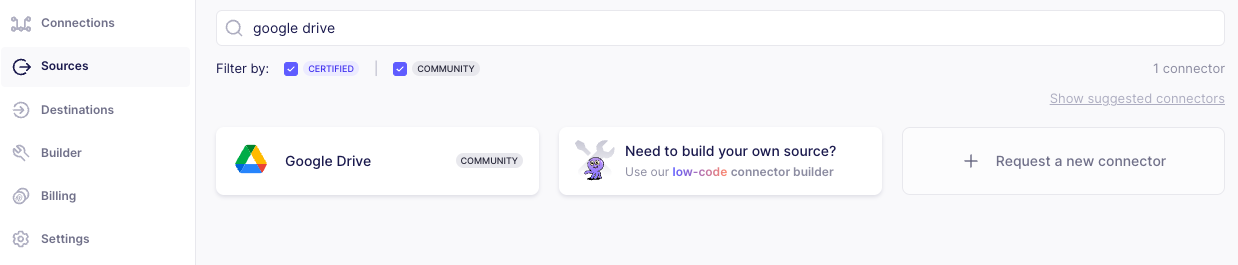

To select a source, click on the Sources tab on the left, select “New Source” and filter for the source you’re looking for.

Google Drive

Select Google Drive on the sources page and configure the Google Drive source. You will need to authenticate and provide the following:

- Folder URL: Provide a link to your Google Drive folder. For this tutorial, you can move the snowflake-cortex.md file from here over to your Google Drive. Alternatively, you can use your own file. Refer to the accompanying documentation to learn about valid file formats.

- The list of streams to sync

- Format: Set the format dropdown to “Document File Type Format”

- Name: Provide a stream name.

GitHub

Select GitHub on the sources page and configure the GitHub source. You will need to authenticate with your GitHub account and provide the following:

- GitHub repositories: You can use the repo `airbytehq/PyAirbyte` or provide your own.

Zendesk

Optional: Select Zendesk on the sources page and configure the Zendesk source. You can authenticate using OAuth or an API token, and provide the following:

- Sub-domain name: If your Zendesk account URL is https://MY_SUBDOMAIN.zendesk.com/, then `MY_SUBDOMAIN` is your subdomain.

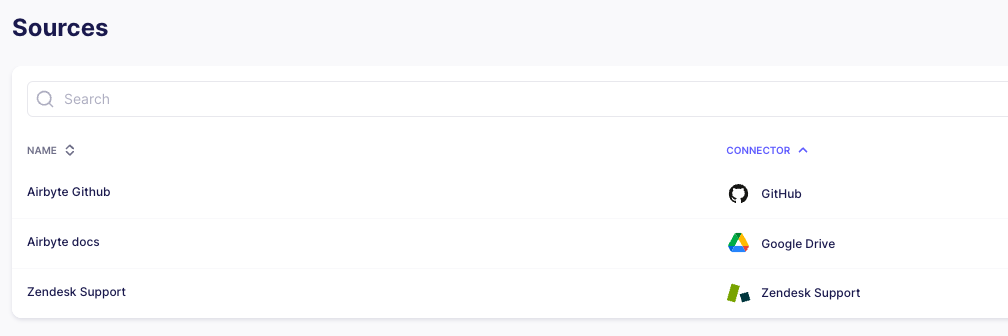

After you have set up all the sources, this is what your source screen will look like:

Step 3: Set Up All Connections

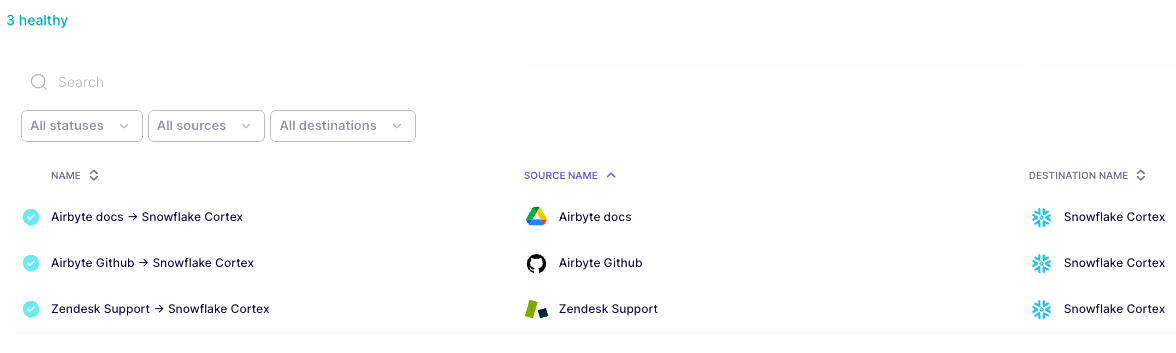

Next, we’ll set up the following connections:

- Google Drive → Snowflake Cortex

- GitHub → Snowflake Cortex

- Zendesk → Snowflake Cortex

Google Drive → Snowflake Cortex

Select the Connections tab on the left navigation in Airbyte Cloud, then click on “New connection” on the top right.

- In the configuration flow, select the existing Google Drive source and Snowflake Cortex as the destination.

- On the select streams screen, select `Full Refresh | Overwrite` as the sync mode.

- On the configure connection screen, set the Schedule type to every 24 hours. Optionally provide a stream prefix for your table. In our case, we prefix `airbyte_` to all streams.

- Click on Finish and Sync.

- If everything went well, there should now be a connection syncing data from Google Drive to the Snowflake Cortex destination. Give the sync a few minutes to run. Once the first run has completed, you can check Snowflake to confirm the data sync. The data should be synced into a table named “[optional prefix][stream name]”.

GitHub → Snowflake Cortex

Select the Connections tab on the left navigation in Airbyte Cloud, then click on “New connection” on the top right.

- In the configuration flow, select the existing GitHub source and Snowflake Cortex as the destination.

- On the select streams screen, make sure all streams except `issues` are unchecked. Also, set the sync mode to `Full Refresh | Overwrite`.

- On the configure connection screen, set the Schedule type to every 24 hours. Optionally provide a stream prefix for your table. In our case, we prefix `airbyte_` to all streams.

- Click on Finish and Sync.

- If everything goes well, there should now be a connection syncing data from GitHub to the Snowflake Cortex destination. Give the sync a few minutes to complete. Once the first run has completed, you can check Snowflake to confirm the data sync. The data should be synced into a table named “[optional prefix]issues”.

Zendesk → Snowflake Cortex

Select the Connections tab on the left navigation in Airbyte Cloud, then click on “New connection” on the top right.

- In the configuration flow, select the existing Zendesk source and Snowflake Cortex as the destination.

- On the select streams screen, make sure all streams except `tickets` and `users` are unchecked. Also, set the sync mode to `Full Refresh | Overwrite`.

- On the configure connection screen, set the Schedule type to every 24 hours. Optionally provide a stream prefix for your table. In our case, we prefix `airbyte_` to all streams.

- Click on Finish and Sync.

- If everything goes well, there should now be a connection syncing data from Zendesk to the Snowflake Cortex destination. Give the sync a few minutes to complete. Once the first run has completed, you can check Snowflake to confirm the data sync. The data should be synced into tables named “[optional prefix]tickets” and “[optional prefix]users”.

Once all three connections are set up, the Connections screen should look like this.

Step 4: Explore Data in Snowflake

You should see the following tables in Snowflake. Depending on your stream prefix, your table names might be different.

- Google Drive related: `airbyte_docs`

- GitHub related: `airbyte_github_issues`

- Zendesk related: `airbyte_zendesk_tickets`, `airbyte_zendesk_users`

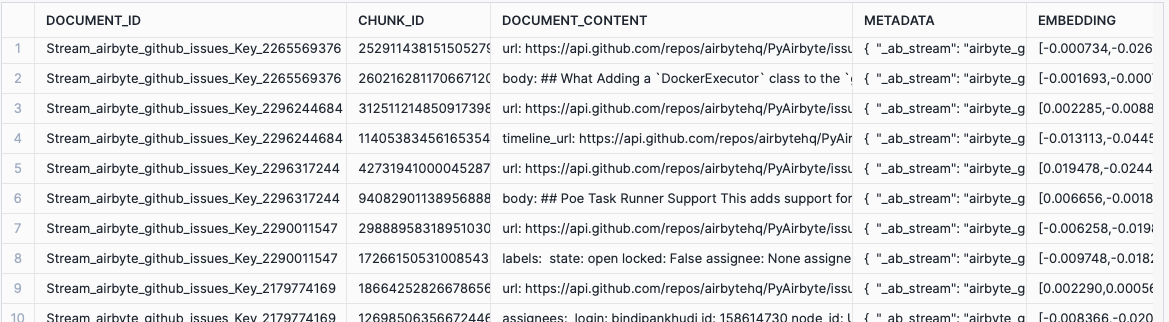

All tables have the following columns:

- `DOCUMENT_ID`: Unique, based on the primary key

- `CHUNK_ID`: Randomly generated UUID

- `DOCUMENT_CONTENT`: Text content from the source

- `METADATA`: Metadata from the source

- `EMBEDDING`: Vector representation of the document content
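One way to confirm that a sync produced these columns is to preview a few rows. The sketch below assumes a DB-API style cursor, such as the one returned by `snowflake.connector.connect(...).cursor()` from the Snowflake Python Connector. The helper names `build_preview_query` and `preview_table` are hypothetical, and the `EMBEDDING` column is left out only to keep the output readable.

```python
from typing import Any, List, Tuple

# Column names taken from the table layout described above.
PREVIEW_COLUMNS = ("DOCUMENT_ID", "CHUNK_ID", "DOCUMENT_CONTENT", "METADATA")


def build_preview_query(table: str, limit: int = 5) -> str:
    """Build a SELECT that previews a few chunks from one synced table."""
    # Table names cannot be bound as query parameters, so validate the
    # name before interpolating it (unqualified names only in this sketch).
    if not table.replace("_", "").isalnum():
        raise ValueError(f"unexpected table name: {table!r}")
    return f"SELECT {', '.join(PREVIEW_COLUMNS)} FROM {table} LIMIT {int(limit)}"


def preview_table(cursor: Any, table: str, limit: int = 5) -> List[Tuple]:
    """Run the preview query on the given cursor and return the rows."""
    cursor.execute(build_preview_query(table, limit))
    return cursor.fetchall()
```

For example, `preview_table(cursor, "airbyte_docs")` would return up to five rows from the Google Drive table, assuming you used the `airbyte_` prefix and a stream named `docs`.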

Results from one of these tables should look like the following:

Step 5: RAG Building Blocks

In this section, we’ll go over the essential building blocks of a Retrieval-Augmented Generation (RAG) system. This involves embedding the user’s question, retrieving matching document chunks, and using a language model to generate the final answer. The first step is done using OpenAI, and the second and third steps are done using Snowflake’s Cortex functions. Let’s break down these steps:

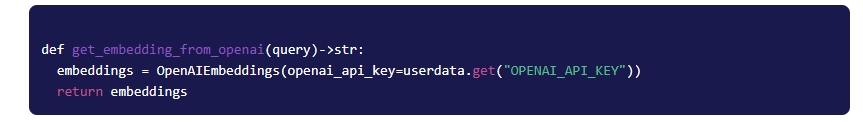

5.1 Embedding User’s Question

When a user asks a question, it is essential to transform the question into a vector representation using the same model that generated the vectors in the vector database. In this example, we use LangChain to access OpenAI’s embedding model. This step ensures that the question is represented in a format that can be compared directly with the document vectors.
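A minimal sketch of this step, assuming the `langchain-openai` package is installed. The helper names are hypothetical, and the model name `text-embedding-ada-002` is an assumption: use whichever embedding model you selected when configuring the destination.

```python
from typing import Any, List


def make_openai_embedder(api_key: str, model: str = "text-embedding-ada-002") -> Any:
    """Create a LangChain OpenAI embedder (needs `pip install langchain-openai`).

    Assumption: the default model name is a placeholder; it must match the
    model used to embed the documents.
    """
    # Imported lazily so the rest of this sketch works without the package.
    from langchain_openai import OpenAIEmbeddings

    return OpenAIEmbeddings(openai_api_key=api_key, model=model)


def embed_question(question: str, embedder: Any) -> List[float]:
    """Turn the user's question into a vector with the given embedder."""
    question = question.strip()
    if not question:
        raise ValueError("question must not be empty")
    # `embed_query` is the LangChain method for embedding a single text.
    return [float(x) for x in embedder.embed_query(question)]
```

Passing the embedder in as an argument keeps the question path and the document path on the same model, and makes the function easy to exercise with a stub in tests.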

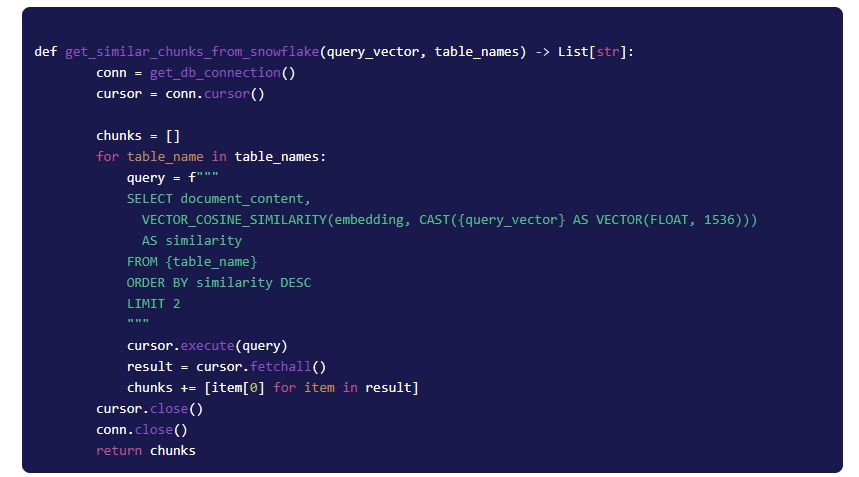

5.2 Retrieving Matching Chunks

The embedded question is then sent to Snowflake, where document chunks with similar vector representations are retrieved. All relevant chunks are returned.
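The retrieval step could look roughly like the sketch below. It ranks chunks with Snowflake’s `VECTOR_COSINE_SIMILARITY` function and keeps the closest `top_k`. Both the choice of similarity function and the fixed `top_k` cutoff are assumptions for illustration, as are the helper names. The cursor is expected to be a DB-API style cursor such as the one provided by the Snowflake Python Connector.

```python
from typing import Any, List, Sequence


def build_similarity_query(table: str, embedding: Sequence[float], top_k: int = 5) -> str:
    """Build a query returning the chunks closest to the question embedding."""
    if not table.replace("_", "").isalnum():
        raise ValueError(f"unexpected table name: {table!r}")
    if not embedding:
        raise ValueError("embedding must not be empty")
    # Render the vector as a literal and cast it to Snowflake's VECTOR type;
    # its dimension must match the EMBEDDING column.
    literal = "[" + ", ".join(repr(float(x)) for x in embedding) + "]"
    return (
        f"SELECT DOCUMENT_CONTENT FROM {table} "
        f"ORDER BY VECTOR_COSINE_SIMILARITY(EMBEDDING, "
        f"{literal}::VECTOR(FLOAT, {len(embedding)})) DESC "
        f"LIMIT {int(top_k)}"
    )


def retrieve_chunks(cursor: Any, table: str, embedding: Sequence[float], top_k: int = 5) -> List[str]:
    """Run the similarity query and return the matching chunk texts."""
    cursor.execute(build_similarity_query(table, embedding, top_k))
    return [row[0] for row in cursor.fetchall()]
```

Sorting in descending order puts the most similar chunks first, since a higher cosine similarity means the vectors point in closer directions.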

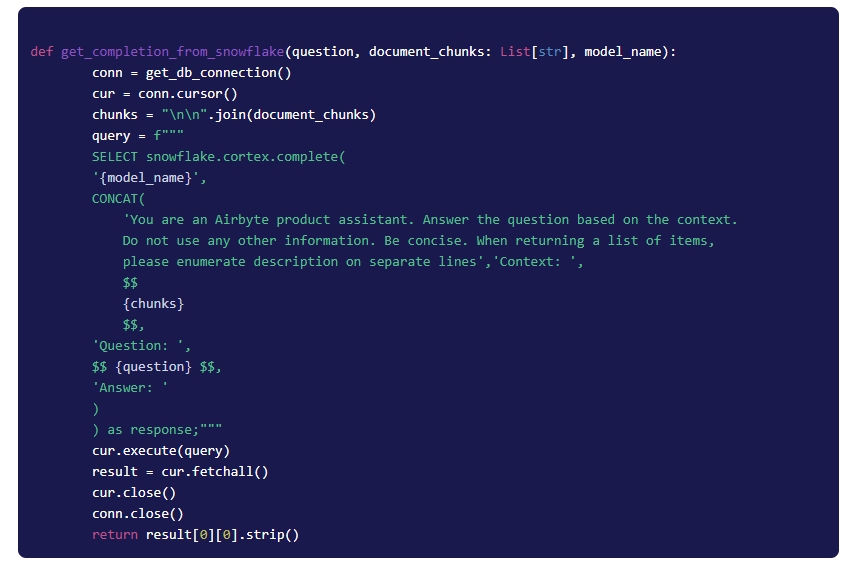

5.3 Passing Chunks to LLM for a Final Answer

All the chunks are concatenated into a single string and sent to Snowflake (using the Snowflake Python Connector) along with a prompt (a set of instructions) and the user’s question.
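As a rough sketch of this step, the code below joins the chunks into a prompt and calls Snowflake’s `SNOWFLAKE.CORTEX.COMPLETE` function through a DB-API style cursor. The prompt wording, the helper names, and the default model name `mistral-large` are assumptions; model availability depends on your Snowflake account and region.

```python
from typing import Any, Sequence


def build_prompt(question: str, chunks: Sequence[str]) -> str:
    """Concatenate the chunks into one context string and add instructions."""
    context = "\n\n".join(chunks)
    return (
        "Answer the question using only the context below. "
        "If the context is not sufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


def complete(cursor: Any, question: str, chunks: Sequence[str], model: str = "mistral-large") -> str:
    """Ask a Cortex-hosted LLM for the final answer."""
    prompt = build_prompt(question, chunks)
    # Bind the model and prompt as parameters so that quotes in the text
    # cannot break the SQL statement.
    cursor.execute("SELECT SNOWFLAKE.CORTEX.COMPLETE(%s, %s)", (model, prompt))
    return cursor.fetchone()[0]
```

Keeping the prompt construction separate from the database call makes it easy to adjust the instructions without touching the query.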

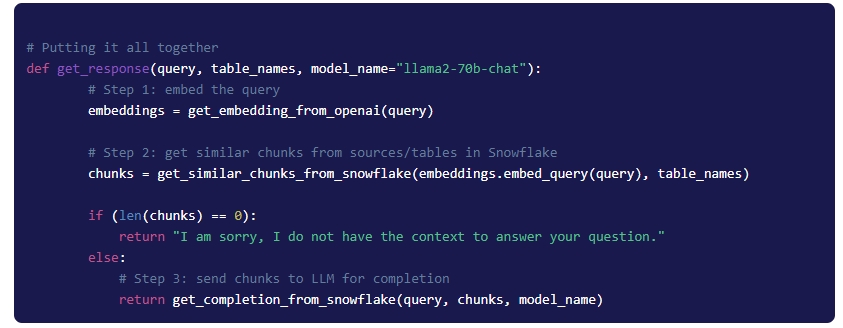

Step 6: Putting It All Together

Google Colab

For convenience, we have integrated everything into a Google Colab notebook. Explore the fully functional RAG code in Google Colab.

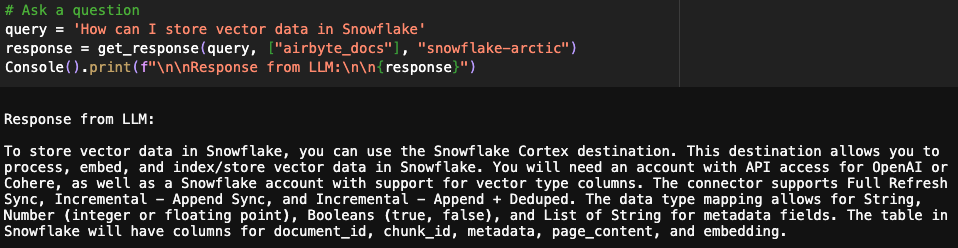

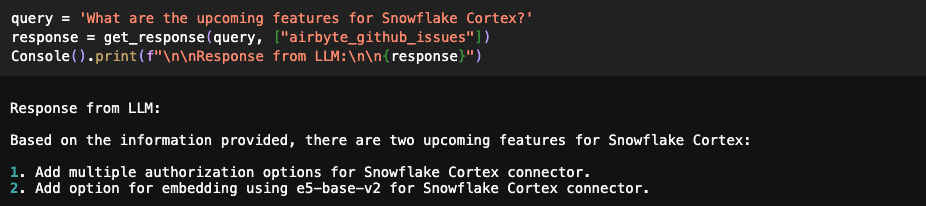

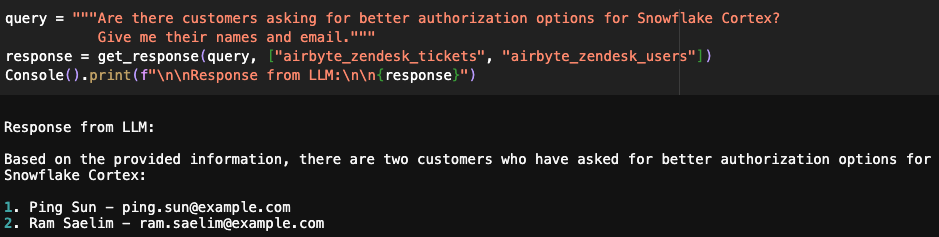

Each time a question is asked, we pass the list of relevant tables and models. For the first question below, the answer is retrieved from Airbyte docs only, whereas for the second question, the answer is retrieved from GitHub issues.

You can also provide multiple tables as input, and the resulting response will be a combination of data from both sources.
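The flow described in this step can be sketched as a single function that embeds the question once, retrieves chunks from every requested table, and generates one answer from the combined context. The name `answer_question` and its callable parameters are hypothetical and are not taken from the notebook; they stand in for whatever embedding, retrieval, and completion helpers you use.

```python
from typing import Callable, List, Sequence


def answer_question(
    question: str,
    tables: Sequence[str],
    embed: Callable[[str], List[float]],
    retrieve: Callable[[str, List[float]], List[str]],
    generate: Callable[[str, List[str]], str],
) -> str:
    """Embed once, retrieve from every table, then generate one answer."""
    if not tables:
        raise ValueError("at least one table is required")
    embedding = embed(question)
    chunks: List[str] = []
    for table in tables:
        # Chunks from all tables end up in one shared context.
        chunks.extend(retrieve(table, embedding))
    return generate(question, chunks)
```

Passing a single table restricts the answer to that source, while passing several lets the model draw on all of them at once.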

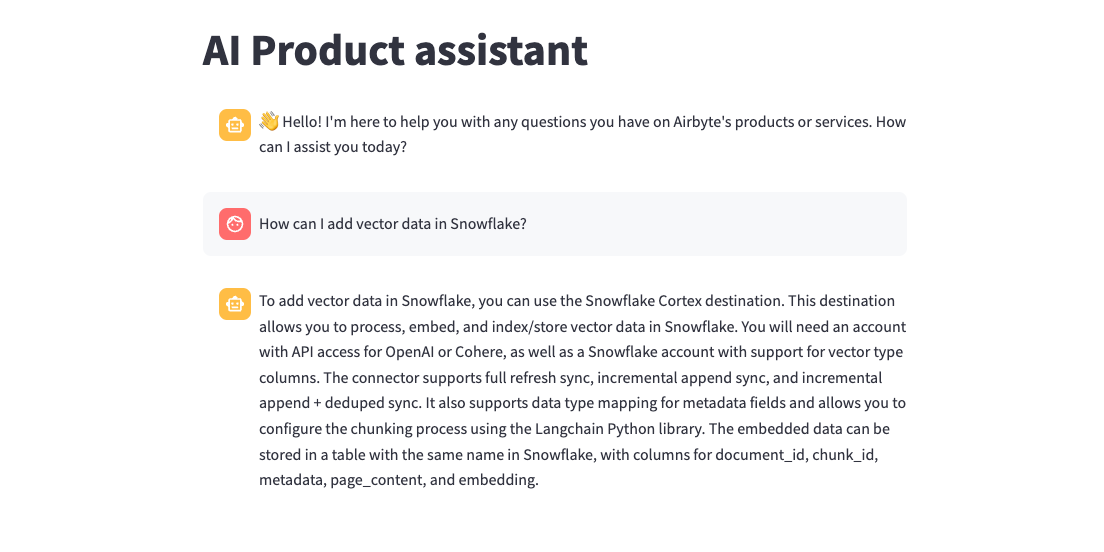

Streamlit App

We have also integrated the above RAG functionality into a Streamlit chat app. Explore the fully functional code on GitHub.

Conclusion

Using the LLM capabilities of Snowflake Cortex together with Airbyte’s catalog of 300-plus connectors, you can build an insightful AI assistant to answer questions across a number of data sources. We have kept things simple here, but as your number of sources increases, consider integrating an AI agent to interpret user intent and retrieve answers from the appropriate source.