Having worked with over 50 Snowflake customers across Europe and the Middle East, I've analyzed hundreds of Query Profiles and identified many issues, including problems with performance and cost.

In this article, I'll explain:

- What the Snowflake Query Profile is, and how to read and understand its components

- How the Query Profile reveals how Snowflake executes queries and provides insights into Snowflake and potential query tuning

- What to look out for in a Query Profile and how to identify and resolve SQL issues

By the end of this article, you should have a much better understanding and appreciation of this feature and know how to identify and resolve query performance issues.

What Is a Snowflake Query Profile?

The Snowflake Query Profile is a visual diagram explaining how Snowflake has executed your query. It shows the steps taken, the data volumes processed, and a breakdown of the most important statistics.

Query Profile: A Simple Example

To demonstrate how to read the query profile, let's consider this relatively simple Snowflake SQL:

select o_orderstatus,

sum(o_totalprice)

from orders

where year(o_orderdate) = 1994

group by all

order by 1;

The above query was executed against a copy of the Snowflake sample data in the snowflake_sample_data.tpch_sf100.orders table, which holds 150M rows or about 4.6GB of data.

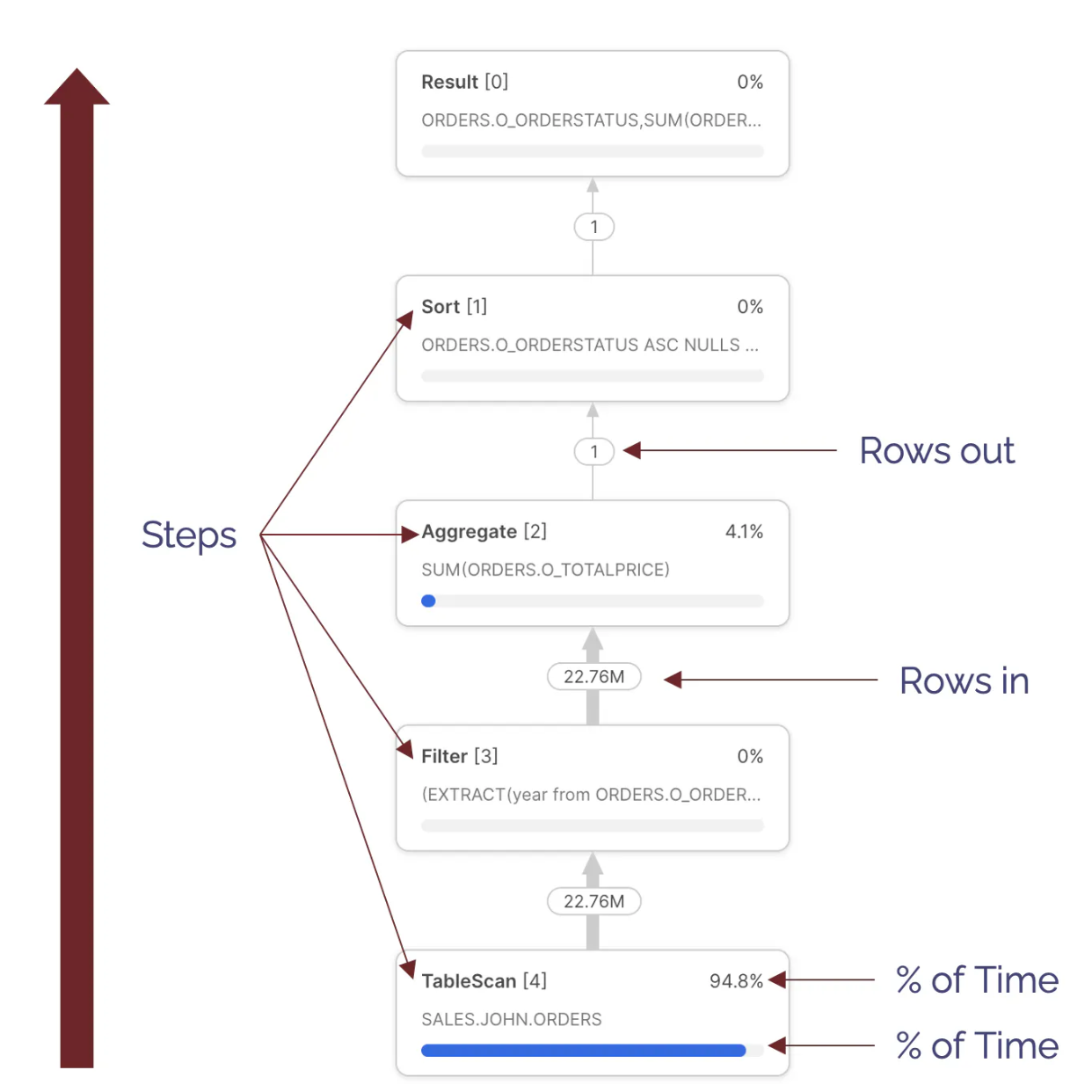

Here is the query profile it produced. We'll explain the components below.

Query Profile: Steps

The diagram below illustrates the Query Steps. These are executed from the bottom up, and each step represents an independently executing process that processes a batch of a few thousand rows of data in parallel.

There are many step types available, but the most common include:

- TableScan [4] – Indicates data being read from a table. Notice this step took 94.8% of the overall execution time, which means the query spent most of its time scanning data. Notice we cannot tell from this whether the data was read from the virtual warehouse cache or remote storage.

- Filter [3] – This attempts to reduce the number of rows processed by filtering out data. Notice the Filter step takes in 22.76M rows and outputs the same number. This raises the question of whether the where clause filtered any results.

- Aggregate [2] – This indicates a step summarizing results. In this case, it produced the sum(orders.totalprice). Notice that this step received 22.76M rows and output just one row.

- Sort [1] – Represents the order by orderstatus. This sorts the results before returning them to the Result Set.

Note: Each step also includes a sequence number to help identify the sequence of operation. Read these from highest to lowest.
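The same per-step figures can also be read as rows rather than as a diagram. The following is a minimal sketch, assuming it is run in the same session immediately after the query being inspected, and assuming the JSON field names shown here (input_rows, output_rows, overall_percentage) match those returned by the GET_QUERY_OPERATOR_STATS table function in your Snowflake version. The column aliases are illustrative.

```sql
-- Sketch: list each step of the most recent query in this session,
-- highest operator number first, mirroring how the profile is read.
select operator_id,
       operator_type,
       operator_statistics:input_rows::number             as input_rows,
       operator_statistics:output_rows::number            as output_rows,
       execution_time_breakdown:overall_percentage::float as share_of_execution_time
from table(get_query_operator_stats(last_query_id()))
order by operator_id desc;
```

A Filter row whose input_rows equals its output_rows corresponds to the situation described for Filter [3] above.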

Query Profile: Overview and Statistics

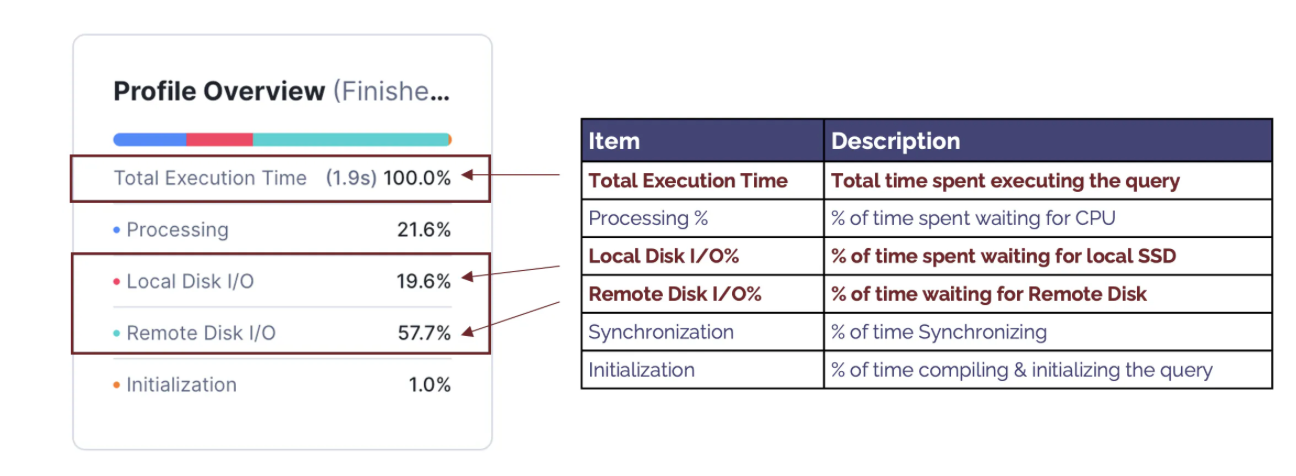

Query Profile: Overview

The diagram below summarises the components of the Profile Overview, highlighting the most important components.

The components include:

- Total Execution Time: This indicates the actual time in seconds the query took to complete. Note: The elapsed time is usually slightly longer as it includes other factors, including compilation time and time spent queuing for resources.

- Processing %: Indicates the percentage of time the query spends waiting for the CPU. When this is a high percentage of total execution time, it indicates the query is CPU-bound, performing complex processing.

- Local Disk I/O %: Indicates the percentage of time spent waiting for SSD.

- Remote Disk I/O %: This indicates the percentage of time spent waiting for Remote Disk I/O. A high percentage indicates the query was I/O bound. This suggests that performance can be best improved by reducing the time spent reading from the disk.

- Synchronizing %: This is seldom useful and indicates the percentage of time spent synchronizing between processes. This tends to be higher as a result of sort operations.

- Initialization %: Tends to be a low percentage of overall execution time and indicates time spent compiling the query. A high percentage normally indicates a potentially over-complex query with many sub-queries but a short execution time. This suggests the query is best improved by simplifying the query design to reduce complexity and, therefore, compilation time.
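To see how elapsed time splits into compilation, queuing, and execution for a given query, the account usage history can be queried. This is a sketch under assumptions: it assumes access to the SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY view (which can lag behind real time), and '<query_id>' is a placeholder to be replaced with a real query ID. The timing columns are reported in milliseconds.

```sql
-- Sketch: break elapsed time into its parts for one query.
-- '<query_id>' is a placeholder, not a real identifier.
select query_id,
       total_elapsed_time / 1000       as elapsed_seconds,
       compilation_time / 1000         as compilation_seconds,
       execution_time / 1000           as execution_seconds,
       (queued_provisioning_time
        + queued_repair_time
        + queued_overload_time) / 1000 as queued_seconds
from snowflake.account_usage.query_history
where query_id = '<query_id>';
```

A compilation time that is large relative to execution time points to the over-complex query pattern described under Initialization %.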

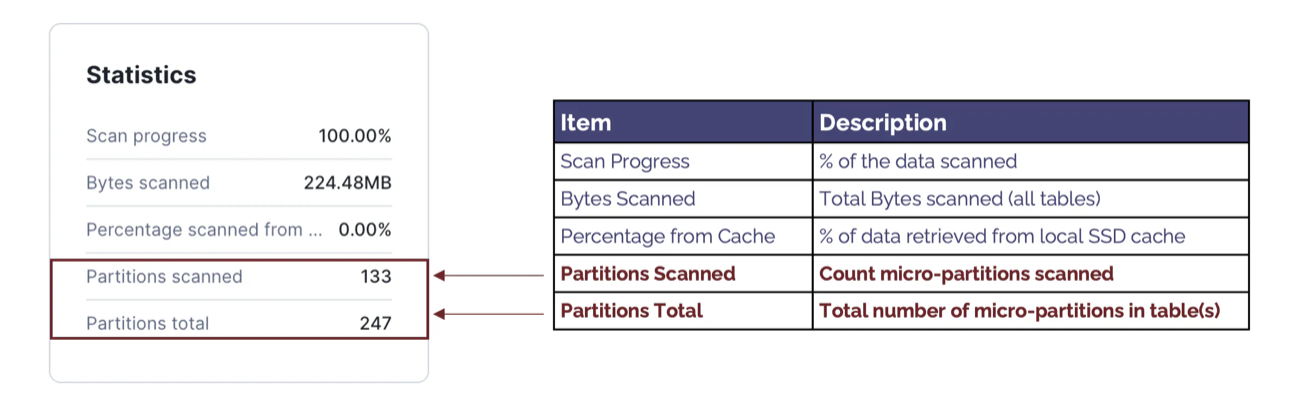

Query Profile Statistics

The diagram below summarises the components of the Profile Statistics, highlighting the most important components.

The components include:

- Scan Progress: This indicates the percentage of data scanned. When the query is still executing, this can be used to estimate the percentage of time remaining.

- Bytes Scanned: This indicates the number of bytes scanned. Unlike row-based databases, Snowflake fetches only the columns needed, and this indicates the data volume fetched from Local and Remote storage.

- Percentage Scanned from Cache: This is often mistaken for a critical statistic to monitor. However, when considering the performance of a specific SQL statement, Percentage Scanned from Cache is a poor indicator of good or bad query performance and should be largely ignored.

- Partitions Scanned: This indicates the number of micro partitions scanned and tends to be a critical determinant of query performance. It also indicates the volume of data fetched from remote storage and the extent to which Snowflake could partition eliminate (skip over partitions), as explained below.

- Partitions Total: Shows the total number of partitions in all tables read. This is best read in conjunction with Partitions Scanned and indicates the efficiency of partition elimination. For example, this query fetched 133 of 247 micro partitions and scanned just under 54% of the data. A lower percentage indicates a higher rate of partition elimination, which will significantly improve queries that are I/O bound.
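The ratio of Partitions Scanned to Partitions Total can be computed across many queries at once to find those with poor partition elimination. The sketch below assumes access to the SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY view. The seven-day window and the 20-row limit are arbitrary choices for illustration.

```sql
-- Sketch: recent queries ranked by the share of micro partitions they scanned.
-- For the example above, 133 of 247 partitions gives about 53.8%.
select query_id,
       partitions_scanned,
       partitions_total,
       round(100 * partitions_scanned / nullif(partitions_total, 0), 1) as pct_partitions_scanned,
       bytes_scanned
from snowflake.account_usage.query_history
where start_time >= dateadd('day', -7, current_timestamp())
  and partitions_total > 0
order by pct_partitions_scanned desc, partitions_total desc
limit 20;
```

Queries near 100% on large tables are candidates for the WHERE clause checks discussed later in this article.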

A Join Query Profile

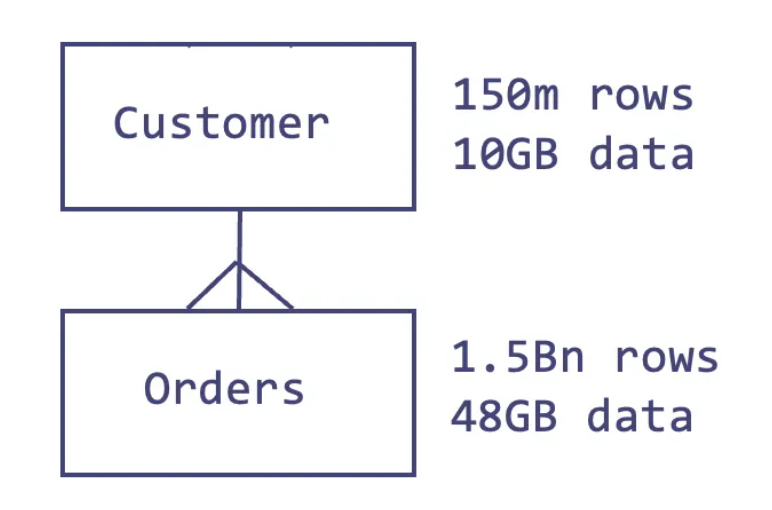

While the simple example above illustrates how to read a query profile, we need to know how Snowflake handles JOIN operations between tables to fully understand how Snowflake works.

The SQL query below includes a join of the customer and orders tables:

select c_mktsegment

, count(*)

, sum(o_totalprice)

, count(*)

from customer

, orders

where c_custkey = o_custkey

and o_orderdate between ('01-JAN-1992') and ('31-JAN-1992')

group by 1

order by 1;

The diagram below illustrates the relationship between these tables in the Snowflake sample data in the snowflake_sample_data.tpch_sf100 schema.

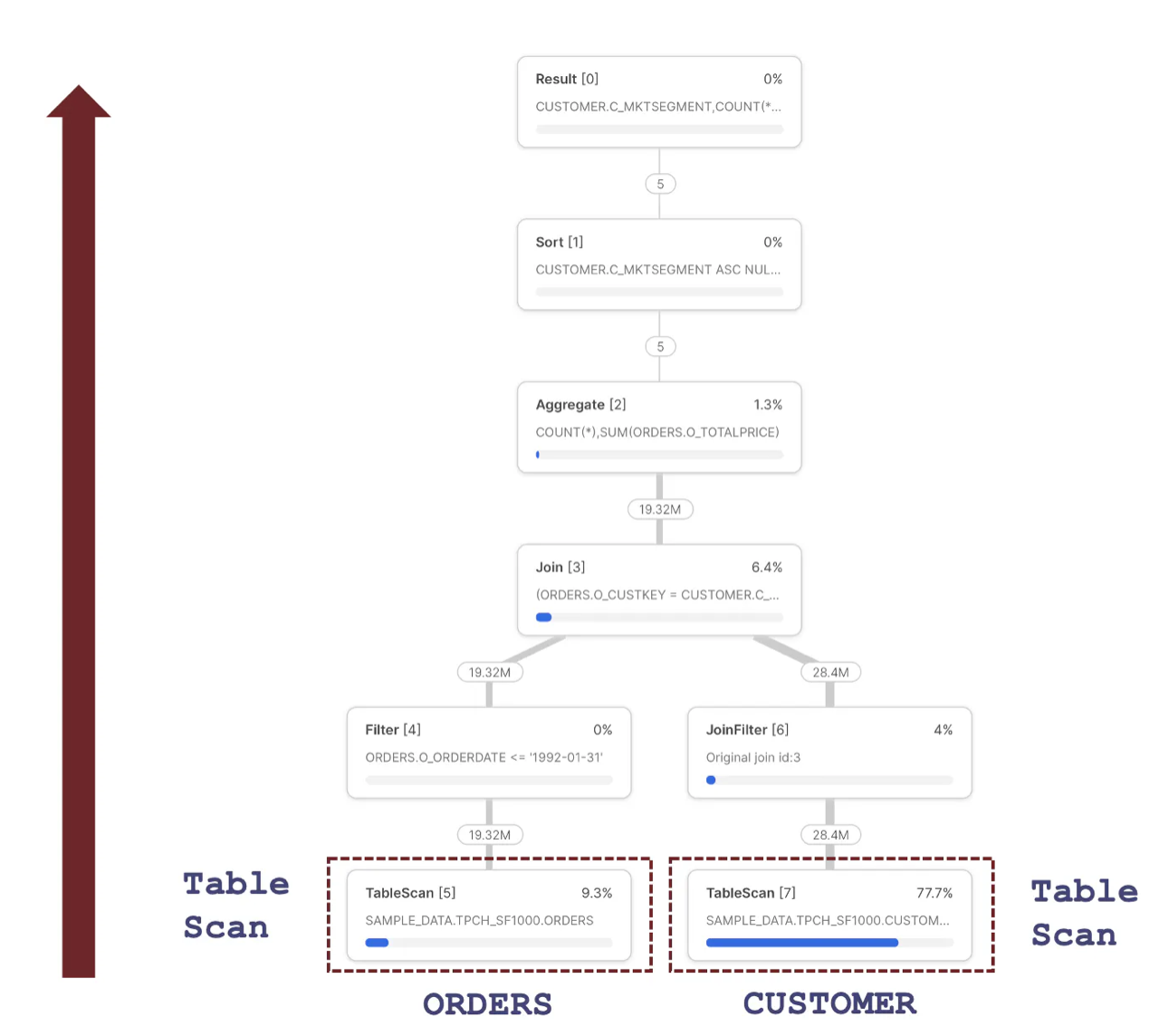

The diagram below illustrates the Snowflake Query Plan used to execute this query, highlighting the initial steps that involve fetching data from storage.

One of the most important insights about the Query Profile above is that each step represents an independently operating parallel process that runs concurrently. This uses advanced vectorized processing to fetch and process a few thousand rows at a time, passing them to the next step to process in parallel.

Snowflake can use this architecture to break down complex query pipelines, executing individual steps in parallel across all CPUs in a Virtual Warehouse. It also means Snowflake can read the ORDERS and CUSTOMER data in parallel using the TableScan operations.

How Does Snowflake Execute a JOIN Operation?

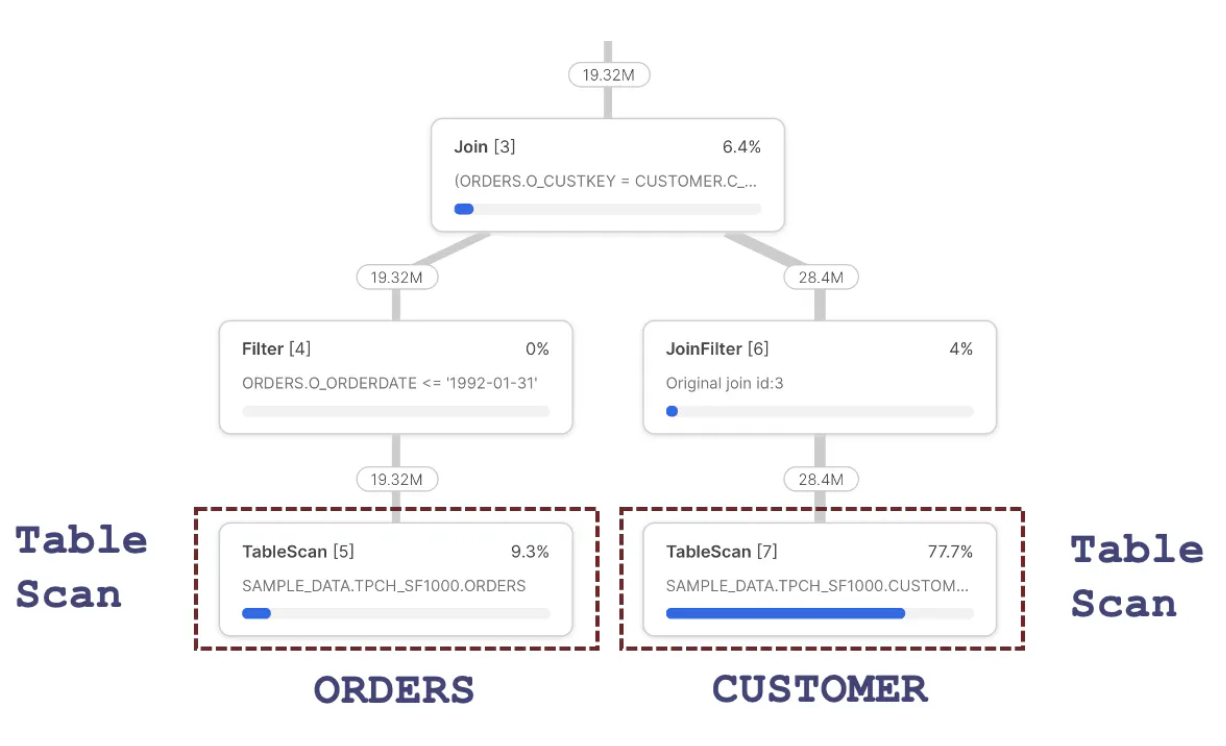

The diagram below illustrates the processing sequence of a Snowflake JOIN operation. To read the sequence correctly, always start from the Join step and take the left leg, in this case, down to the TableScan of the ORDERS table, step 5.

The diagram above indicates the steps were as follows:

- TableScan [5]: This fetches data from the ORDERS table, which returns 19.32M rows out of 150M rows. This reduction is explained by Snowflake's ability to automatically partition eliminate – to skip over micro-partitions, as described in the article on Snowflake Cluster Keys. Notice that the query spent 9.3% of the time processing this step.

- Filter [4]: Receives 19.32M rows and logically represents the following line in the above query:

and o_orderdate between ('01-JAN-1992') and ('31-JAN-1992')

This step represents filtering rows from the ORDERS table before passing them to the Join [3] step above. Surprisingly, this step appears to do no actual work as it receives and emits 19.32M rows. However, Snowflake uses Predicate Pushdown, which filters the rows in the TableScan [5] step before reading them into memory. The output of this step is passed to the Join step.

- Join [3]: Receives ORDERS rows but needs to fetch the corresponding CUSTOMER entries. We therefore need to skip down to the TableScan [7] step.

- TableScan [7]: Fetches data from the CUSTOMER table. Notice this step takes 77.7% of the overall execution time and, therefore, has the most significant potential benefit from query performance tuning. This step fetches 28.4M rows, although Snowflake automatically tunes this step, as there are 1.5Bn rows in the CUSTOMER table.

- JoinFilter [6]: This step represents an automatic Snowflake performance tuning operation that uses a Bloom Filter to avoid scanning micro-partitions on the right-hand side of a Join operation. In summary, as Snowflake has already fetched the ORDERS entries, it only needs to fetch the CUSTOMER rows that match them. This explains the fact that TableScan [7] returns only 28M of the 1.5Bn possible entries. It's worth noting this performance tuning is automatically applied, although it could be improved using a Cluster Key on the join column of the table being scanned (here, CUSTOMER).

- Join [3]: This represents the actual join of data in the CUSTOMER and ORDERS tables. It's important to understand that every Snowflake Join operation is implemented as a Hash Join.

What Is a Snowflake Hash Join?

While it may appear we're disappearing into the Snowflake internals, bear with me. Understanding how Snowflake executes JOIN operations highlights a critical performance-tuning opportunity.

The diagram below highlights the essential statistics to watch out for in any Snowflake Join operation.

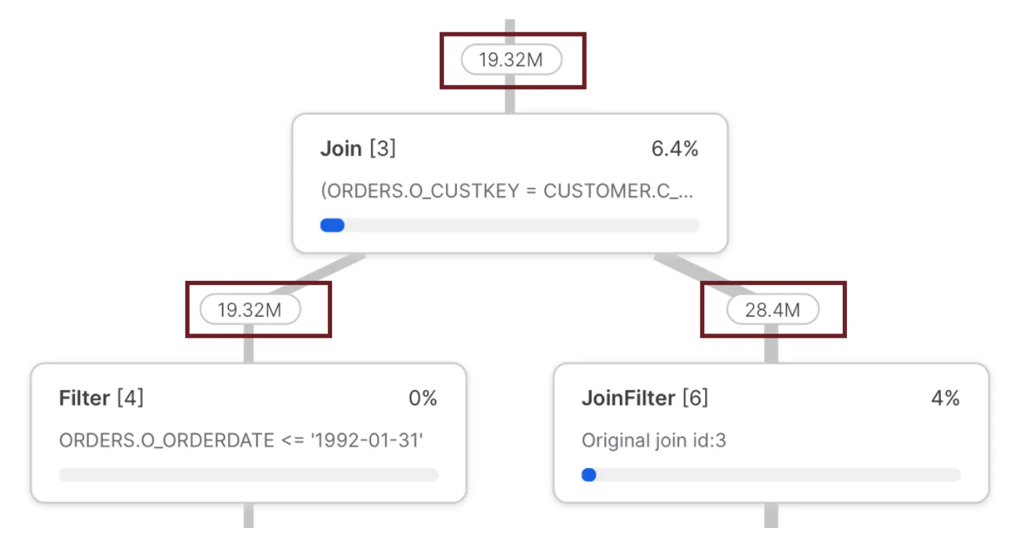

The diagram above shows the number of rows fed into the JOIN and the total rows returned. In particular, the left leg delivered fewer rows (19.32M) than the right leg (28.4M). This is important because it highlights an infrequent but critical performance pattern: the number of rows fed into the left leg of a JOIN must always be fewer than the number fed into the right.

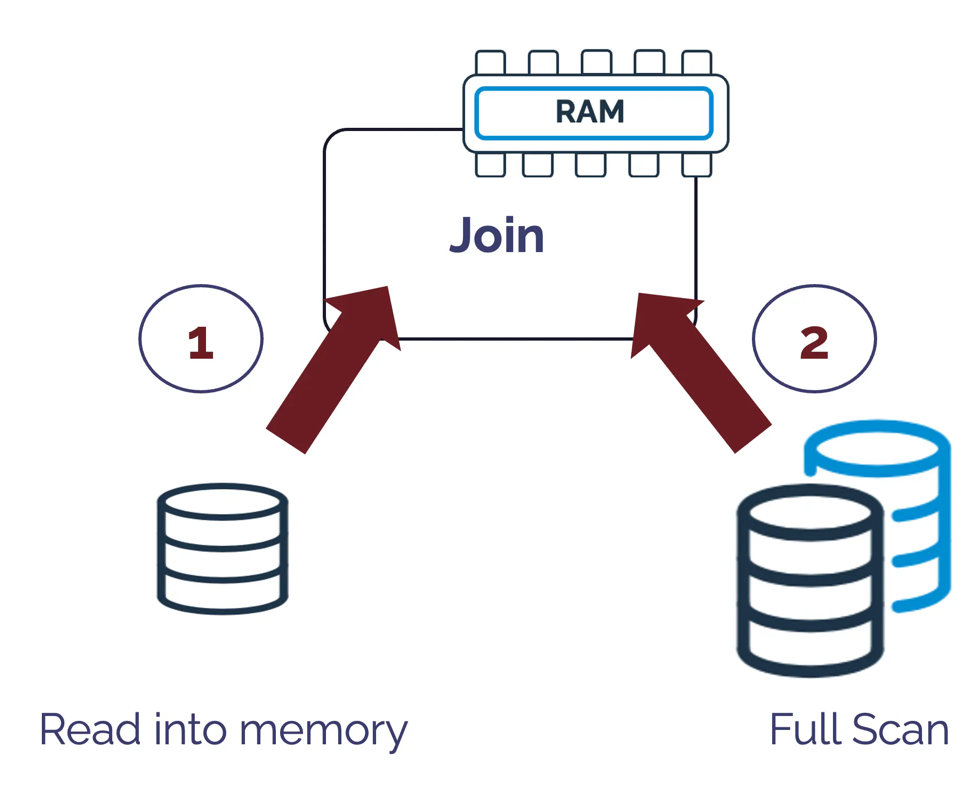

The reason for this critical rule is revealed in the way Snowflake executes a Hash Join, which is illustrated in the diagram below:

The above diagram illustrates how a Hash Join operation works by reading an entire table into memory and generating a hash key for each row. It then performs a full scan of the other table, looking up each row against the in-memory hash keys to join the two data sets.

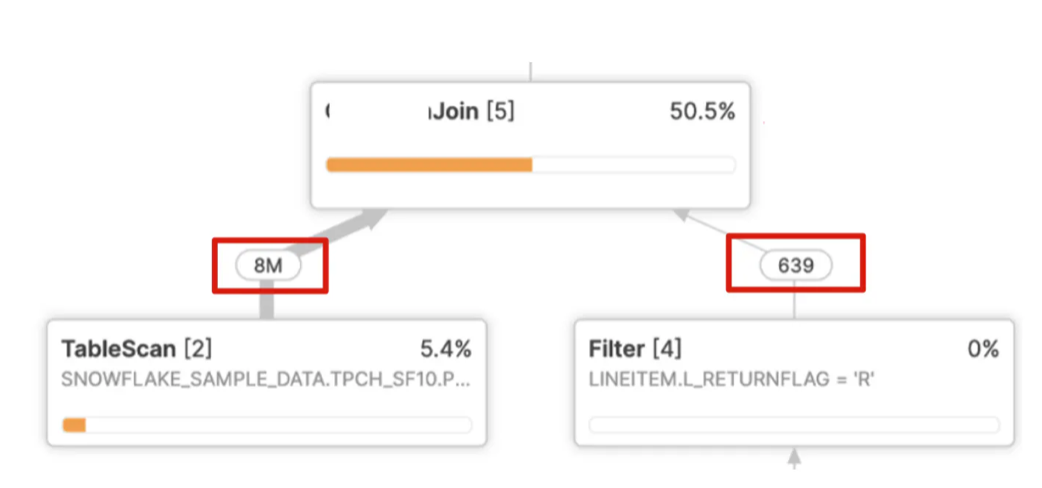

Therefore, it's essential to correctly identify the smaller of the two data sets and read it into memory while scanning the larger of the two, but sometimes Snowflake gets it wrong. The screenshot below illustrates the situation:

In the above example, Snowflake needs to read eight million entries into memory, create a hash key for each row, and perform a full scan of just 639 rows. This leads to very poor query performance and a join that should take seconds but often takes hours.

As I've explained previously in an article on Snowflake Performance Tuning, this is often the result of multiple nested joins and group by operations, which make it difficult for Snowflake to identify the cardinality correctly.

While this happens infrequently, it can lead to extreme performance degradation, and the best practice approach is to simplify the query, perhaps breaking it down into multiple steps using transient or temporary tables.
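As an illustration of breaking a query into steps, the sketch below materializes the filtered orders from the earlier join example into a temporary table before joining. The table name orders_jan_1992 and the column aliases are hypothetical, and whether this changes the join order for a particular query is an assumption that should be checked in the Query Profile.

```sql
-- Step 1 (sketch): materialize the filtered rows. The temporary table name is hypothetical.
create or replace temporary table orders_jan_1992 as
select o_custkey,
       o_totalprice
from orders
where o_orderdate between '1992-01-01'::date and '1992-01-31'::date;

-- Step 2: join against the materialized result, whose row count is now known.
select c_mktsegment,
       count(*)          as order_count,
       sum(o_totalprice) as total_price
from customer
join orders_jan_1992
  on c_custkey = o_custkey
group by 1
order by 1;
```

The idea is that Snowflake no longer has to estimate the cardinality of a nested sub-query, because the intermediate result is a table in its own right.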

Identifying Issues Using the Query Profile

Query Profile Join Explosion

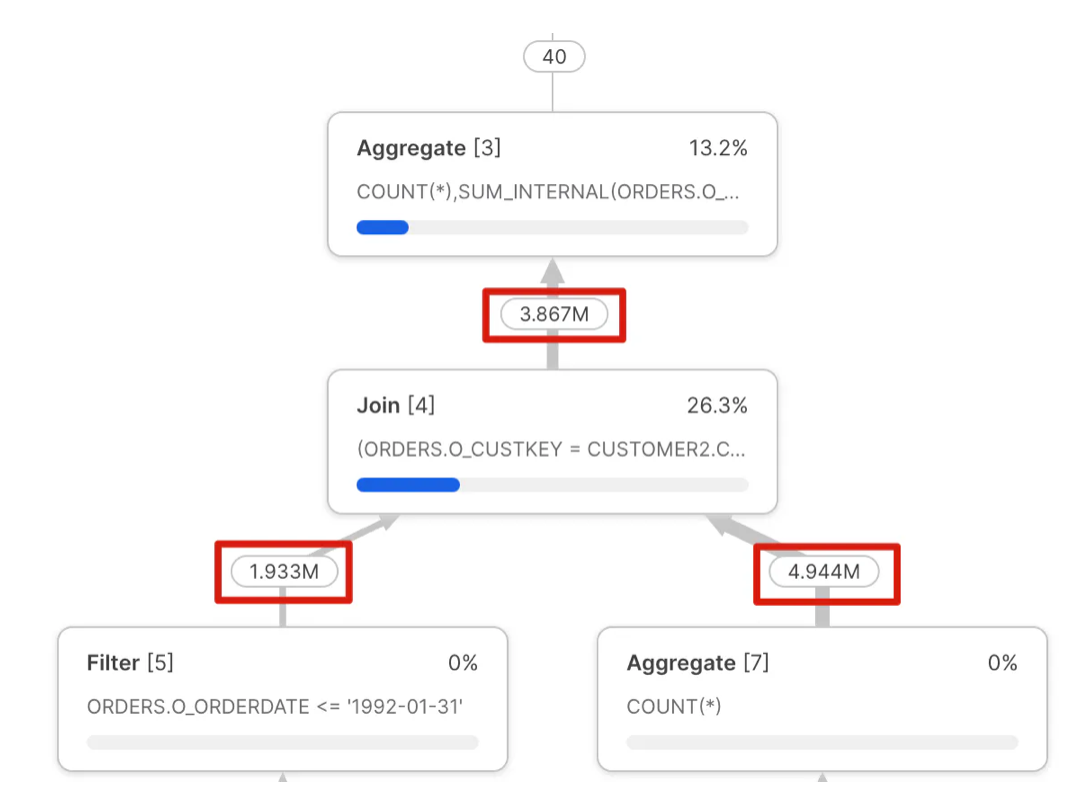

The screenshot below illustrates a common issue that often leads to both poor query performance and (more importantly) incorrect results.

Notice the output of the Join [4] step doesn't match the values input on the left or right leg, despite the fact that the query join clause is a simple join by CUSTKEY.

This issue is often called a "Join Explosion" and is usually caused by duplicate values in one of the tables. As indicated above, this frequently leads to poor query performance and should be investigated and fixed.

Note: Snowflake provides the GET_QUERY_OPERATOR_STATS table function, which allows programmatic access to the query profile.
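A Join Explosion can be spotted programmatically by comparing the rows entering and leaving each Join step. The sketch below uses the GET_QUERY_OPERATOR_STATS table function and assumes it is run in the same session right after the suspect query. The second statement is a hypothetical duplicate check that assumes the join key is c_custkey on a customer table.

```sql
-- Sketch: a row_multiple well above 1 suggests the join is multiplying rows.
select operator_id,
       operator_attributes,
       operator_statistics:output_rows::number
         / nullif(operator_statistics:input_rows::number, 0) as row_multiple
from table(get_query_operator_stats(last_query_id()))
where operator_type = 'Join'
order by step_id, operator_id;

-- Hypothetical follow-up: look for duplicate join keys in one of the tables.
select c_custkey,
       count(*) as copies
from customer
group by c_custkey
having count(*) > 1
order by copies desc
limit 10;
```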

Unintended Cartesian Join

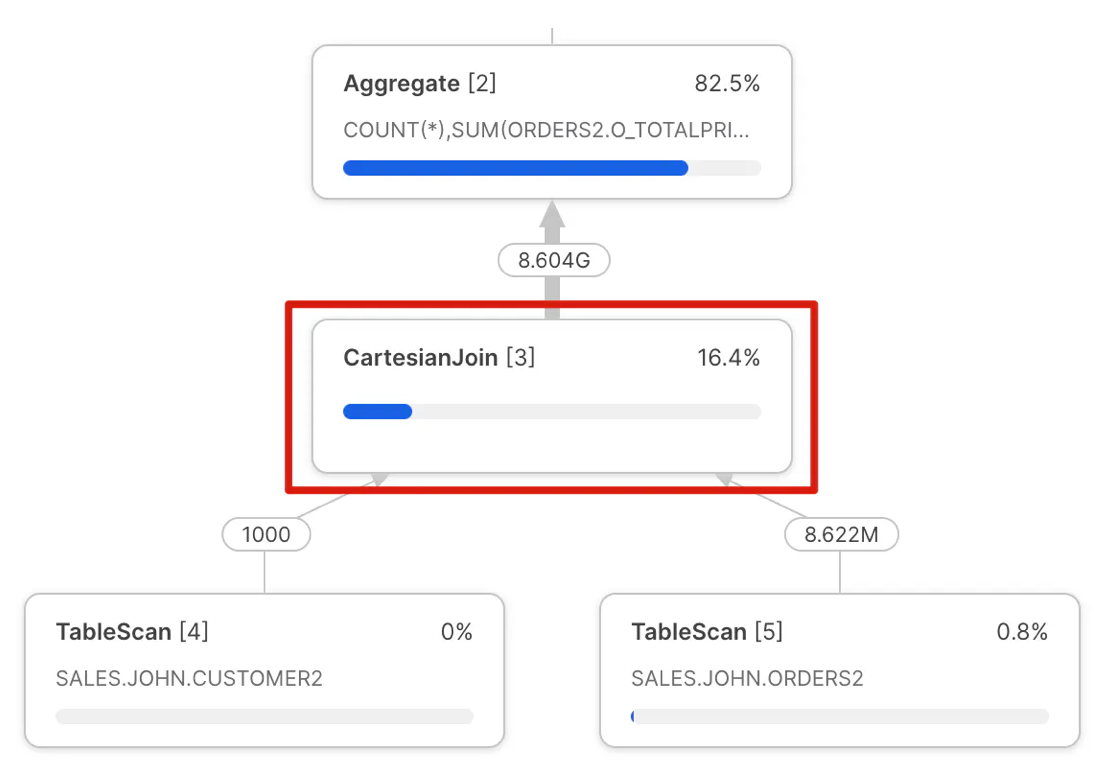

The screenshot below illustrates another common issue easily identified in the Snowflake query profile: a Cartesian join operation.

Similar to the Join Explosion above, this query profile is produced by a mistake in the SQL query. This mistake produces an output whose row count is the product of the sizes of both inputs. Again, this is easy to spot in a query profile, and although it may, in some cases, be intentional, if not, it can lead to very poor query performance.
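The most common way to produce this by accident is to leave out the join predicate. The following sketch reuses the customer and orders tables from the earlier example purely for illustration. The first statement is shown only to demonstrate the mistake and should not be run against large tables.

```sql
-- Mistake (do not run on large tables): no join predicate, so every
-- customer row is paired with every orders row.
select c_mktsegment,
       count(*)
from customer,
     orders
group by 1;

-- Intended query: the explicit JOIN ... ON syntax makes a missing predicate harder to overlook.
select c_mktsegment,
       count(*)
from customer
join orders
  on c_custkey = o_custkey
group by 1;
```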

Disjunctive OR Question

Disjunctive database queries are queries that embody an OR within the question WHEREclause. That is an instance of a legitimate use of the Cartesian Be part of, however one which could be simply prevented.

Take, for instance, the next question:

choose distinct l_linenumber

from snowflake_sample_data.tpch_sf1.lineitem,

snowflake_sample_data.tpch_sf1.partsupp

the place (l_partkey = ps_partkey)

or

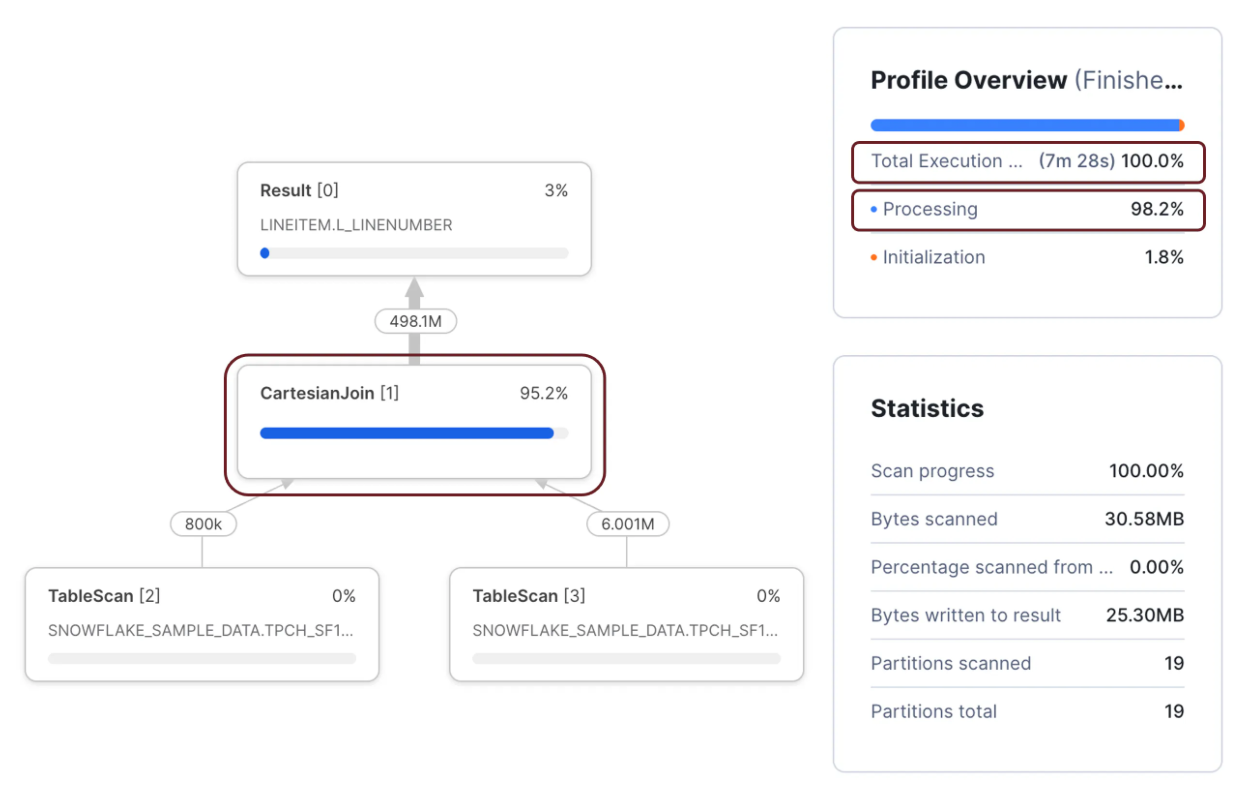

(l_suppkey = ps_suppkey);The above question produced the next Snowflake Question Profile and took 7m 28s to finish on an XSMALL warehouse regardless of scanning solely 28 micro partitions.

However, when the same query was rewritten (below) to use a UNION statement, it took just 3.4 seconds to complete, a 132 times performance improvement for very little effort.

select l_linenumber

from snowflake_sample_data.tpch_sf1.lineitem

join snowflake_sample_data.tpch_sf1.partsupp

on l_partkey = ps_partkey

union

select l_linenumber

from snowflake_sample_data.tpch_sf1.lineitem

join snowflake_sample_data.tpch_sf1.partsupp

on l_suppkey = ps_suppkey;

Notice that in the profile of the original OR query, the Cartesian Join operation accounted for 95.8% of the execution time. Also, the Profile Overview indicates that the query spent 98.9% of the time processing. This is worth noting as it demonstrates a CPU-bound query.

Wrapping Columns in the WHERE Clause

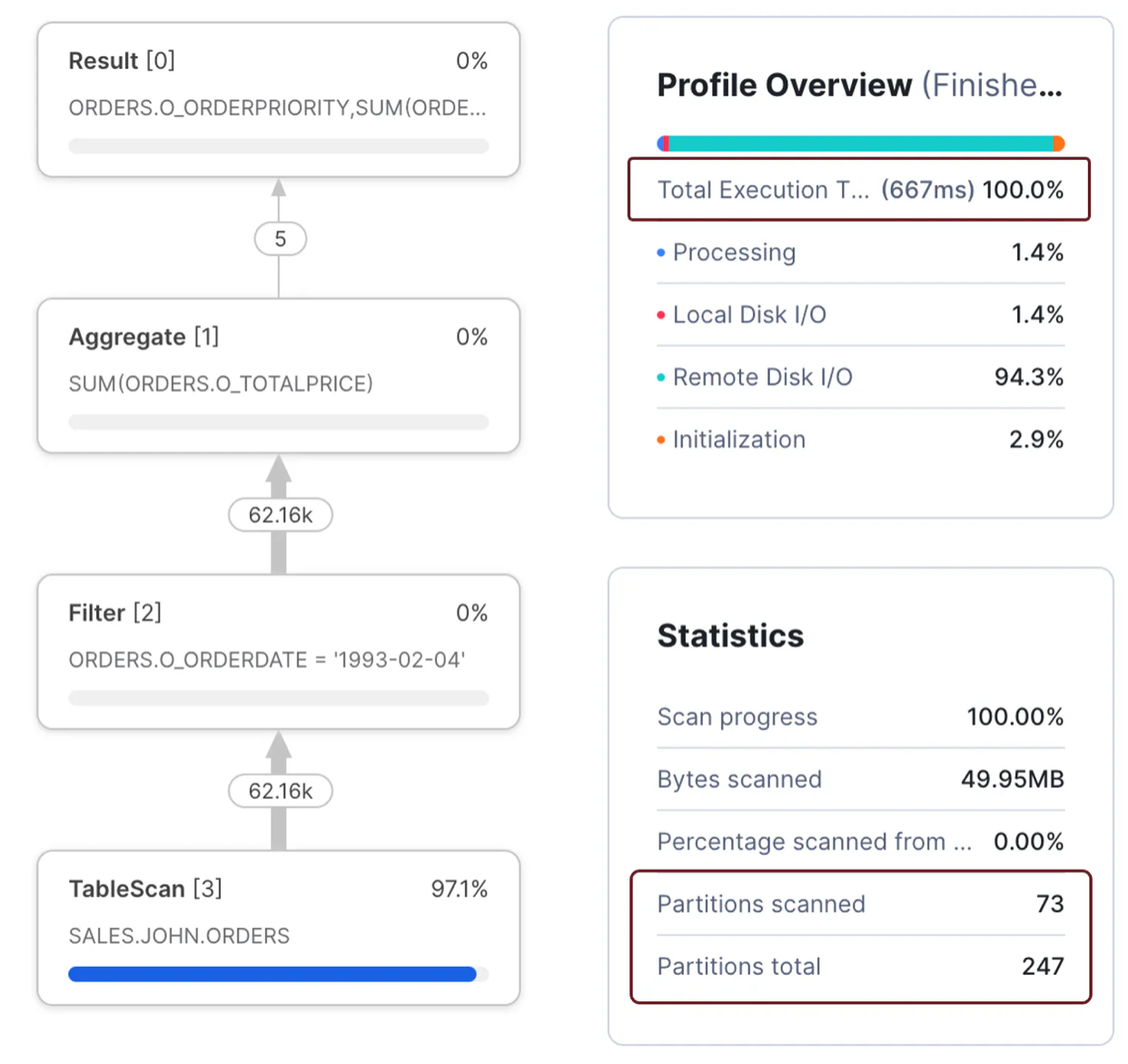

While this issue is harder to identify from the query profile alone, it illustrates one of the most important statistics available, the Partitions Scanned compared to Partitions Total.

Take the following SQL query for example:

select o_orderpriority,

sum(o_totalprice)

from orders

where o_orderdate = to_date('1993-02-04','YYYY-MM-DD')

group by all;

The above query was completed in 667 milliseconds on an XSMALL warehouse and produced the following profile.

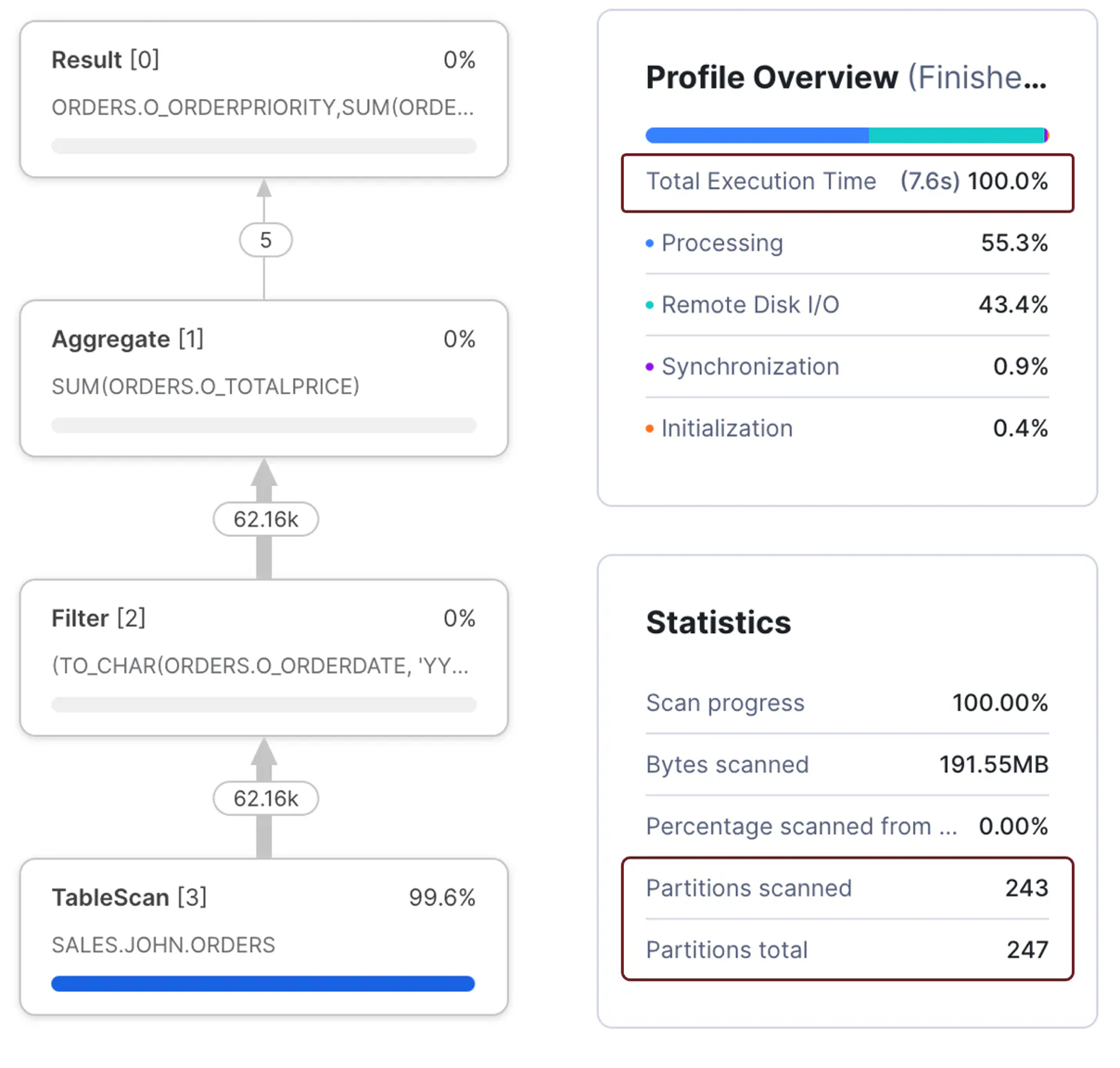

Notice the sub-second execution time and that the query only scanned 73 of 247 micro partitions. Compare the above situation to the following query, which took 7.6 seconds to complete – 11 times slower than the previous query to produce the same results.

select o_orderpriority,

sum(o_totalprice)

from orders

where to_char(o_orderdate, 'YYYY-MM-DD') = '1993-02-04'

group by all;

The screenshot above shows the second query was 11 times slower because it needed to scan 243 micro-partitions. The reason lies in the WHERE clause.

In the first query, the WHERE clause compares the ORDERDATE to a fixed literal. This meant that Snowflake was able to perform partition elimination by date.

where o_orderdate = to_date('1993-02-04','YYYY-MM-DD')

In the second query, the WHERE clause converted the ORDERDATE field to a character string, which reduced Snowflake's ability to filter out micro-partitions. This meant more data needed to be processed, which took longer to complete.

where to_char(o_orderdate, 'YYYY-MM-DD') = '1993-02-04'

Therefore, the best practice is to avoid wrapping database columns with functions, especially user-defined functions, which severely impact query performance.
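The same principle can be applied to the first example in this article, which filters on year(o_orderdate) = 1994. The sketch below rewrites that predicate as a range on the bare column. It is an illustration rather than a measured result: whether it changes the number of partitions scanned depends on how the table is clustered and on what Snowflake can already prune for that function, so compare Partitions Scanned for both versions.

```sql
-- Sketch: compare the bare column to a literal date range instead of
-- wrapping it in year().
select o_orderstatus,
       sum(o_totalprice)
from orders
where o_orderdate >= '1994-01-01'::date
  and o_orderdate <  '1995-01-01'::date
group by all
order by 1;
```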

Identifying Spilling to Storage in the Snowflake Query Profile

As discussed in my article on improving query performance by avoiding spilling to storage, this tends to be an issue that is easy to identify and potentially easy to resolve.

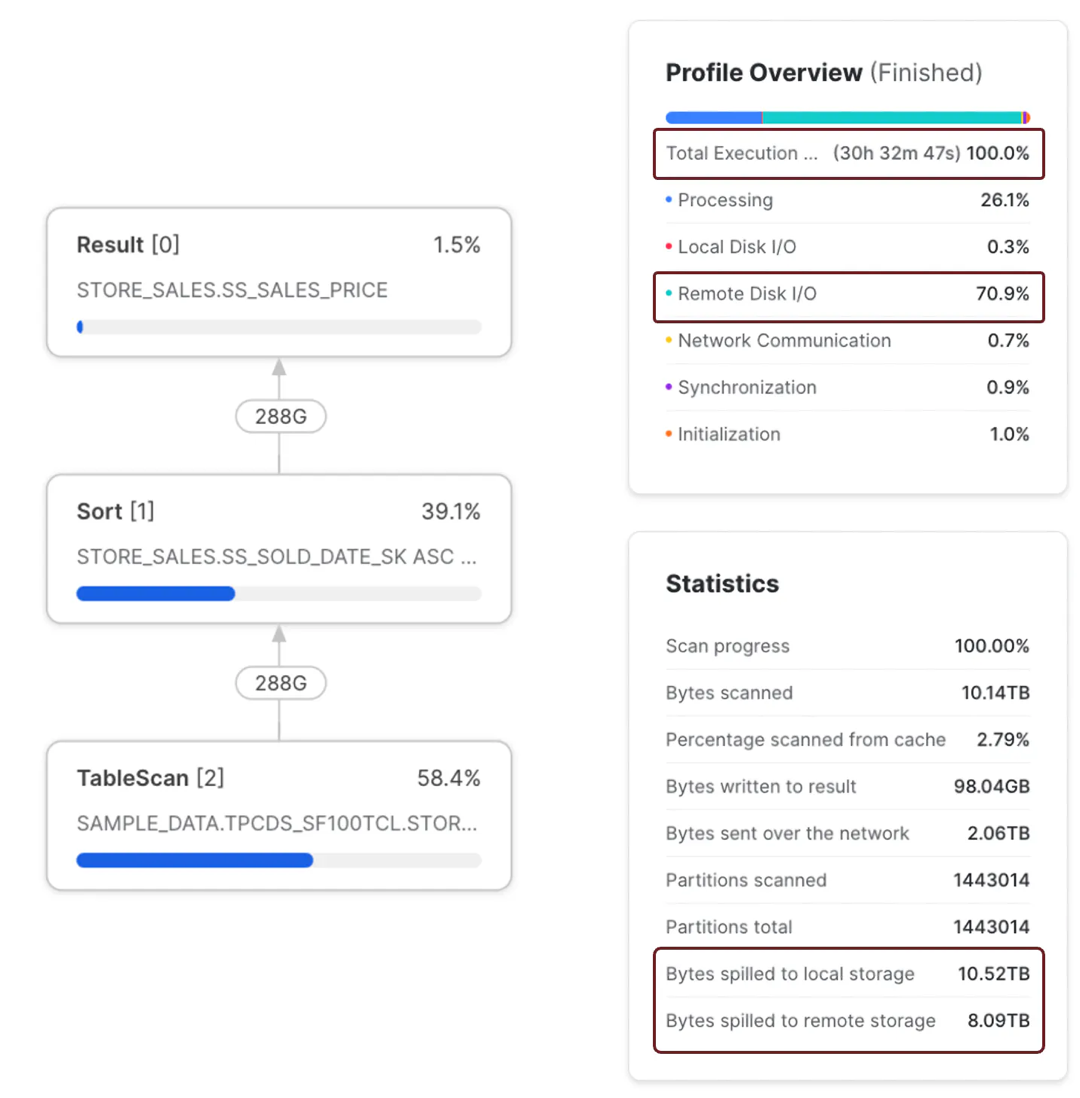

Take, for example, this simple benchmark SQL query:

select ss_sales_price

from snowflake_sample_data.TPCDS_SF100TCL.STORE_SALES

order by SS_SOLD_DATE_SK, SS_SOLD_TIME_SK, SS_ITEM_SK, SS_CUSTOMER_SK,

SS_CDEMO_SK, SS_HDEMO_SK, SS_ADDR_SK, SS_STORE_SK,

SS_PROMO_SK, SS_TICKET_NUMBER, SS_QUANTITY;

The above query sorted a table with 288 billion rows and took over 30 hours to complete on a SMALL virtual warehouse. The critical point is that the Query Profile Statistics showed that it spilled over 10 TB to local storage and 8 TB to remote storage. Furthermore, because it took so long, it cost over $183 to complete.

The screenshot above shows the query profile, execution time, and bytes spilled to local and remote storage. It's also worth noting that the query spent 70.9% of the time waiting for Remote Disk I/O, consistent with the data volume spilled to Remote Storage.
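Queries that spill can also be found without opening each profile. The sketch below assumes access to the SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY view. The seven-day window and the 20-row limit are arbitrary choices for illustration.

```sql
-- Sketch: recent queries that spilled to remote storage, worst first.
select query_id,
       warehouse_name,
       warehouse_size,
       round(total_elapsed_time / 1000 / 60, 1)                     as elapsed_minutes,
       round(bytes_spilled_to_local_storage  / power(1024, 3), 1)   as gb_spilled_local,
       round(bytes_spilled_to_remote_storage / power(1024, 3), 1)   as gb_spilled_remote
from snowflake.account_usage.query_history
where start_time >= dateadd('day', -7, current_timestamp())
  and bytes_spilled_to_remote_storage > 0
order by bytes_spilled_to_remote_storage desc
limit 20;
```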

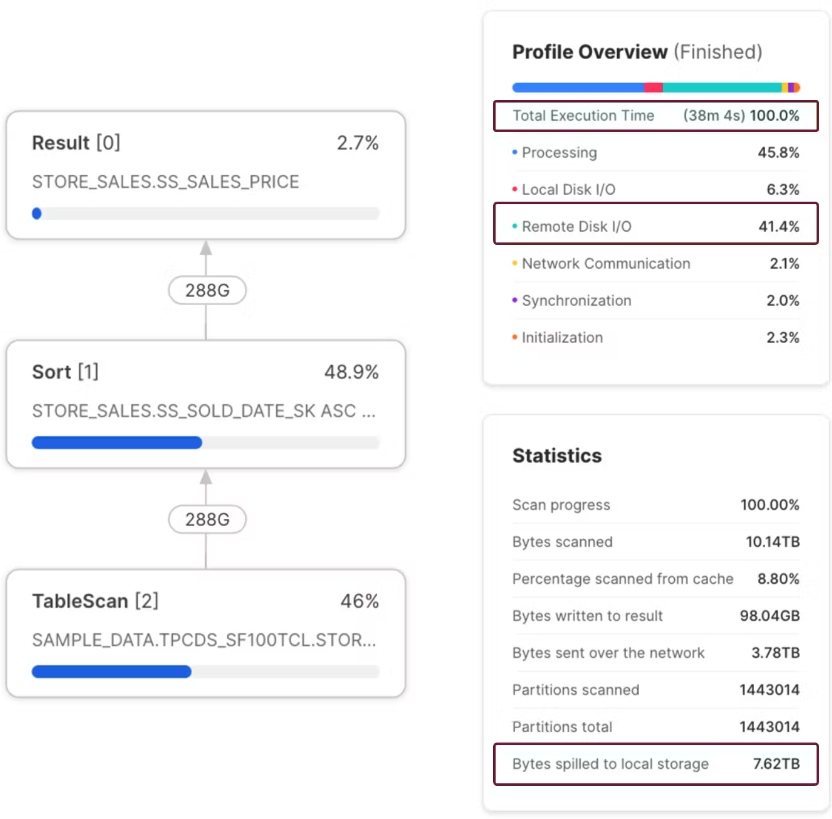

Compare the SMALL warehouse results above to the screenshot below. This shows the same query executed on an X3LARGE warehouse.

The Query Profile above shows that the query was completed in 38 minutes and produced no remote spilling. In addition to completing 48 times faster than on the SMALL warehouse, it cost $121.80 compared with $183, roughly a 33% reduction (about 66% of the original cost). Assuming this query was executed daily, that would amount to an annual savings of over $22,000.
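The savings follow directly from the two costs quoted above. As a quick check, the arithmetic can be run as a single statement; the only inputs are the $183 and $121.80 figures from this article, and $183 is itself a lower bound ("over $183"), so the results are approximate.

```sql
-- Sketch: cost comparison using the two figures quoted in the article.
select 183.00                                        as cost_small_usd,
       121.80                                        as cost_x3large_usd,
       round(100 * (183.00 - 121.80) / 183.00, 1)    as pct_cost_reduction,
       round((183.00 - 121.80) * 365, 2)             as annual_saving_usd;
```

The difference of $61.20 per run is about 33.4% of the original cost, and over 365 daily runs it comes to $22,338.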

Conclusion

The example above illustrates my point in an article on controlling Snowflake costs. Snowflake Data Engineers and Administrators tend to put far too much emphasis on tuning performance. However, Snowflake has changed the landscape, and we need to focus on both maximizing query performance and controlling costs.

Managing cost and maximizing performance may seem at odds with each other, but using the Snowflake Query Profile and the techniques described in this article, there's no reason why we can't deliver both.