Producing well-structured JSON output is often a complex process, particularly when working with large language models (LLMs). This article explores generating JSON output from LLMs, using a Node.js-powered web application as an example.

Large Language Models (LLMs)

LLMs are sophisticated AI systems designed to understand and produce human-like text, capable of handling tasks such as translation, summarization, and content creation. Models like GPT (from OpenAI), BERT, and Claude have been instrumental in advancing natural language processing, making them valuable tools for chatbots and other AI-driven applications.

JavaScript Object Notation (JSON)

JSON is a lightweight data format that is easy to read and write, both for people and computers. It organizes data into key/value pairs (objects) and ordered lists (arrays) using a text format that, while based on JavaScript, is compatible with many programming languages. JSON is commonly used for data exchange between web servers and applications, and is also popular for configuration files and storing structured data.
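As a small illustration of those two building blocks, the sketch below round-trips an object containing key/value pairs and an array through `JSON.stringify` and `JSON.parse`. The `place` record and its fields are made up for the example.

```javascript
// A hypothetical record: key/value pairs (object) plus an ordered list (array).
const place = {
  name: "Golden Gate Bridge",
  type: "landmark",
  tags: ["bridge", "viewpoint"]
};

// Serialize to a JSON string for transport or storage.
const text = JSON.stringify(place);

// Parse the string back into a JavaScript object.
const parsed = JSON.parse(text);

console.log(text);
console.log(parsed.tags[1]); // "viewpoint"
```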

OpenAI API

The OpenAI API allows developers to integrate advanced AI functionality into their applications, products, and services by providing access to OpenAI's state-of-the-art language models and other AI technologies.

The API follows a RESTful design, with requests and responses formatted in JSON. It supports numerous programming languages, aided by both official and community-built libraries. Pricing is usage-based, calculated in tokens (about four characters per token), with different costs for different models. Regular updates add new models and capabilities. To get started, developers need an API key: visit OpenAI's API platform and sign up or log in.
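To get a feel for usage-based pricing, the following sketch applies the rough four-characters-per-token figure mentioned above. The `estimateTokens` helper is hypothetical; actual counts depend on the model's tokenizer, so this is only a back-of-the-envelope estimate.

```javascript
// Rough heuristic from the text: about four characters per token.
// Real token counts come from the model's tokenizer and will differ.
const CHARS_PER_TOKEN = 4;

// Hypothetical helper: estimates the token count of a piece of text.
function estimateTokens(text) {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

const prompt = "List three places to visit in Paris.";
console.log(`Estimated tokens: ${estimateTokens(prompt)}`);
```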

Here is an example web application powered by Node.js that uses the OpenAI API. A Node.js server script runs the web application, taking input from a user and calling the OpenAI API to get results from the LLM.

    // Load OPENAI_API_KEY from a .env file into process.env.
    require('dotenv').config();
    const express = require('express');
    const axios = require('axios');

    const app = express();
    const PORT = 3000;

    // Serve the front end from ./public and parse JSON request bodies.
    app.use(express.static('public'));
    app.use(express.json());

    app.post('/api/fetch', async (req, res) => {
      try {
        // Forward the user's prompt to the Chat Completions endpoint.
        const response = await axios.post('https://api.openai.com/v1/chat/completions', {
          model: "gpt-4-turbo",
          messages: [
            { role: "system", content: "You are a helpful assistant." },
            { role: "user", content: req.body.prompt }
          ]
        }, {
          headers: {
            'Authorization': `Bearer ${process.env.OPENAI_API_KEY}`,
            'Content-Type': 'application/json'
          }
        });

        // The assistant's reply is plain text at this point.
        const messageContent = response.data.choices[0].message.content;
        res.json({ message: messageContent });
      } catch (error) {
        res.status(500).json({ error: 'Failure to get response from OpenAI', details: error.message });
      }
    });

    app.listen(PORT, () => {
      console.log(`Server running on http://localhost:${PORT}`);
    });

The above code runs the web app server, but whenever the user enters a query, the response is returned as plain text. Here is how it looks when integrated with a user interface that asks the user for text.

Text response from the OpenAI API using gpt-4-turbo

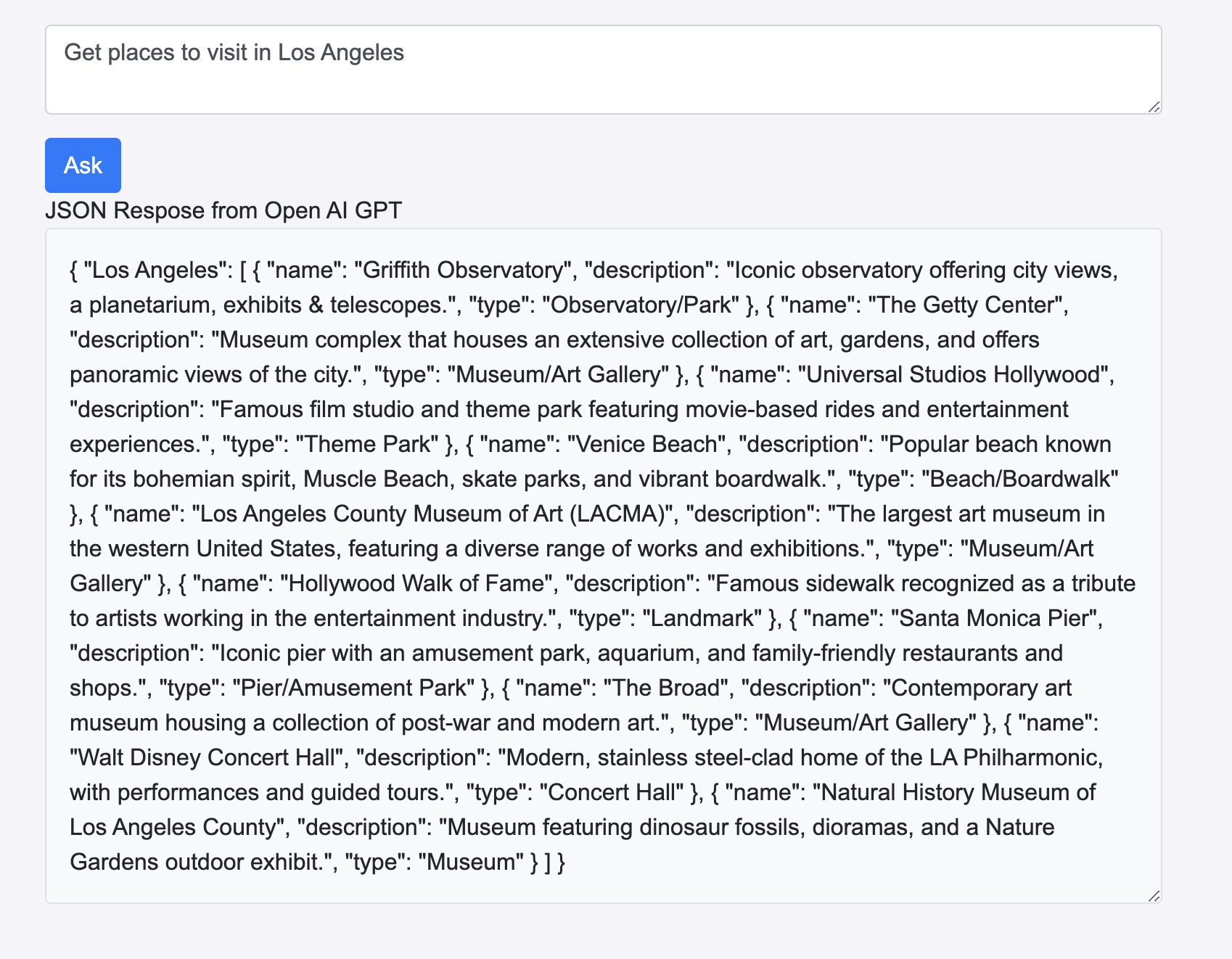

It would have been more helpful if the OpenAI API had responded with JSON containing location details. That would make the user interface more intuitive and actionable, and would allow seamless integration with other applications.

OpenAI API JSON Response Format

The OpenAI API supports the response_format parameter, where the response type can be defined.

    response_format: { type: "json_object" }

Adding the JSON object response format to the earlier request looks as follows:

    const response = await axios.post('https://api.openai.com/v1/chat/completions', {
      model: "gpt-4-turbo",
      response_format: { type: "json_object" },
      messages: [
        { role: "system", content: "You are a helpful assistant. Return results in JSON format." },
        { role: "user", content: req.body.prompt }
      ]
    }, {
      headers: {
        'Authorization': `Bearer ${process.env.OPENAI_API_KEY}`,
        'Content-Type': 'application/json'
      }
    });

However, changing the response format alone will not return results in JSON. The messages passed to the OpenAI API must also state that JSON is the format to be returned.
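One way to keep the parameter and the messages in step is to build the request body in a single place. The sketch below is an assumption-laden illustration: `buildJsonModeRequest` is a hypothetical helper, and its check that some message mentions JSON is a simple guard written for this example, not part of any library.

```javascript
// Hypothetical helper: builds a Chat Completions request body for JSON mode.
function buildJsonModeRequest(
  userPrompt,
  systemPrompt = "You are a helpful assistant. Return results in JSON format."
) {
  // The messages themselves must ask for JSON, not just the response_format parameter.
  if (!/json/i.test(systemPrompt) && !/json/i.test(userPrompt)) {
    throw new Error("At least one message must ask for JSON output.");
  }
  return {
    model: "gpt-4-turbo",
    response_format: { type: "json_object" },
    messages: [
      { role: "system", content: systemPrompt },
      { role: "user", content: userPrompt }
    ]
  };
}

const body = buildJsonModeRequest("Places to visit in Paris");
console.log(JSON.stringify(body, null, 2));
```

The returned object can be passed as the second argument to `axios.post` in place of the inline literal.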

Here is the modified code, which will return results in JSON format:

    // Load OPENAI_API_KEY from a .env file into process.env.
    require('dotenv').config();
    const express = require('express');
    const axios = require('axios');

    const app = express();
    const PORT = 3000;

    app.use(express.static('public'));
    app.use(express.json());

    app.post('/api/fetch', async (req, res) => {
      try {
        const response = await axios.post('https://api.openai.com/v1/chat/completions', {
          model: "gpt-4-turbo",
          // Ask the API for a JSON object...
          response_format: { type: "json_object" },
          // ...and tell the model in the messages that JSON is expected.
          messages: [
            { role: "system", content: "You are a helpful assistant. Return results in JSON format." },
            { role: "user", content: req.body.prompt }
          ]
        }, {
          headers: {
            'Authorization': `Bearer ${process.env.OPENAI_API_KEY}`,
            'Content-Type': 'application/json'
          }
        });

        // The content is now a JSON-formatted string.
        const messageContent = response.data.choices[0].message.content;
        res.json({ message: messageContent });
      } catch (error) {
        res.status(500).json({ error: 'Failure to get response from OpenAI', details: error.message });
      }
    });

    app.listen(PORT, () => {
      console.log(`Web app server running on http://localhost:${PORT}`);
      // Report only whether the key is present; never print the secret itself.
      console.log(`OpenAI API key loaded: ${Boolean(process.env.OPENAI_API_KEY)}`);
    });

The above code runs the web app server, and whenever the user enters a query, the response is returned in JSON format as shown below. The user interface can then parse the JSON response and integrate with third-party widgets to provide actionable user interfaces.

JSON response from the OpenAI API using gpt-4-turbo
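On the user interface side, parsing that response might look like the sketch below. `parseModelJson` is a hypothetical helper, and the `sample` payload is invented for illustration; the real structure depends on what the model returns for a given prompt.

```javascript
// Hypothetical helper: turns the model's message content into an object,
// returning a fallback instead of throwing when the text is not valid JSON.
function parseModelJson(content, fallback = null) {
  try {
    return JSON.parse(content);
  } catch (err) {
    return fallback;
  }
}

// Made-up payload standing in for the `message` field returned by /api/fetch.
const sample = '{"places":[{"name":"Louvre","type":"museum"}]}';
const result = parseModelJson(sample);
console.log(result.places[0].name);

// Invalid input yields the fallback rather than an exception.
console.log(parseModelJson("not json", { places: [] }));
```

Returning a fallback keeps the page rendering even if the model occasionally produces malformed output.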

OpenAI API JSON Response With Function Calling

Function calling is a powerful method for producing structured JSON responses. It lets developers define specific functions, each with a name, a description, and a typed parameter schema. This helps the language model understand the function's purpose and produce responses that match the required structure. By narrowing the output to the expected format, this technique improves both accuracy and consistency in API interactions.

Here is the modified version of the previous code using function calling:
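Because the parameter schema names the fields a response should contain, it can also be used to check what comes back. The following is a minimal sketch under that assumption: the schema fields and the `findMissingFields` helper are illustrative, and the check only covers presence of required keys, not full JSON Schema validation.

```javascript
// Illustrative parameter schema in the JSON Schema style used by function definitions.
const parameters = {
  type: "object",
  properties: {
    name: { type: "string" },
    description: { type: "string" },
    type: { type: "string" }
  },
  required: ["name", "description", "type"]
};

// Hypothetical helper: lists the required fields absent from the arguments.
function findMissingFields(schema, args) {
  return schema.required.filter((key) => !(key in args));
}

const complete = { name: "Louvre", description: "Art museum", type: "museum" };
const partial = { name: "Louvre" };

console.log(findMissingFields(parameters, complete)); // []
console.log(findMissingFields(parameters, partial));  // [ 'description', 'type' ]
```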

require('dotenv').config();

const categorical = require('categorical');

const axios = require('axios');

const app = categorical();

const PORT = 3000;

app.use(categorical.static('public'));

app.use(categorical.json());

app.submit('/api/fetch', async (req, res) => {

strive {

const instruments = [

{

"type": "function",

"function": {

"name": "get_places_in_city",

"description": "Get the places to visit in city",

"parameters": {

"type": "object",

"properties": {

"name": {

"type": "string",

"description": "Name of the place",

},

"description": {

"type": "string",

"description": "description of the place",

},

"type":{

"type": "string",

"description": "type of the place",

}

},

"required": ["name","description","type" ],

additionalProperties: false

},

}

}

];

//const func = {"role": "function", "name": "get_places_in_city", "content": "{"name": "", "description": "", "type": ""}"};

const response = await axios.submit('https://api.openai.com/v1/chat/completions', {

mannequin: "gpt-4o",

messages: [{ role: "system", content: "You are a helpful assistant. return results in json format" }, { role: "user", content: req.body.prompt }],

instruments: instruments

}, {

headers: {

'Authorization': `Bearer ${course of.env.OPENAI_API_KEY}`,

'Content material-Sort': 'software/json'

}

});

console.error('Error in response of OpenAI API:', response.knowledge.decisions[0].message? JSON.stringify(response.knowledge.decisions[0].message, null, 2) : error.message);

const toolCalls = response.knowledge.decisions[0].message.tool_calls;

let messageContent="";

if(toolCalls){

toolCalls.forEach((functionCall)=>{

messageContent += functionCall.operate.arguments;

});

}

res.json({ message: messageContent });

} catch (error) {

res.standing(500).json({ error: 'Failure to get response from OpenAI', particulars: error.message });

}

});

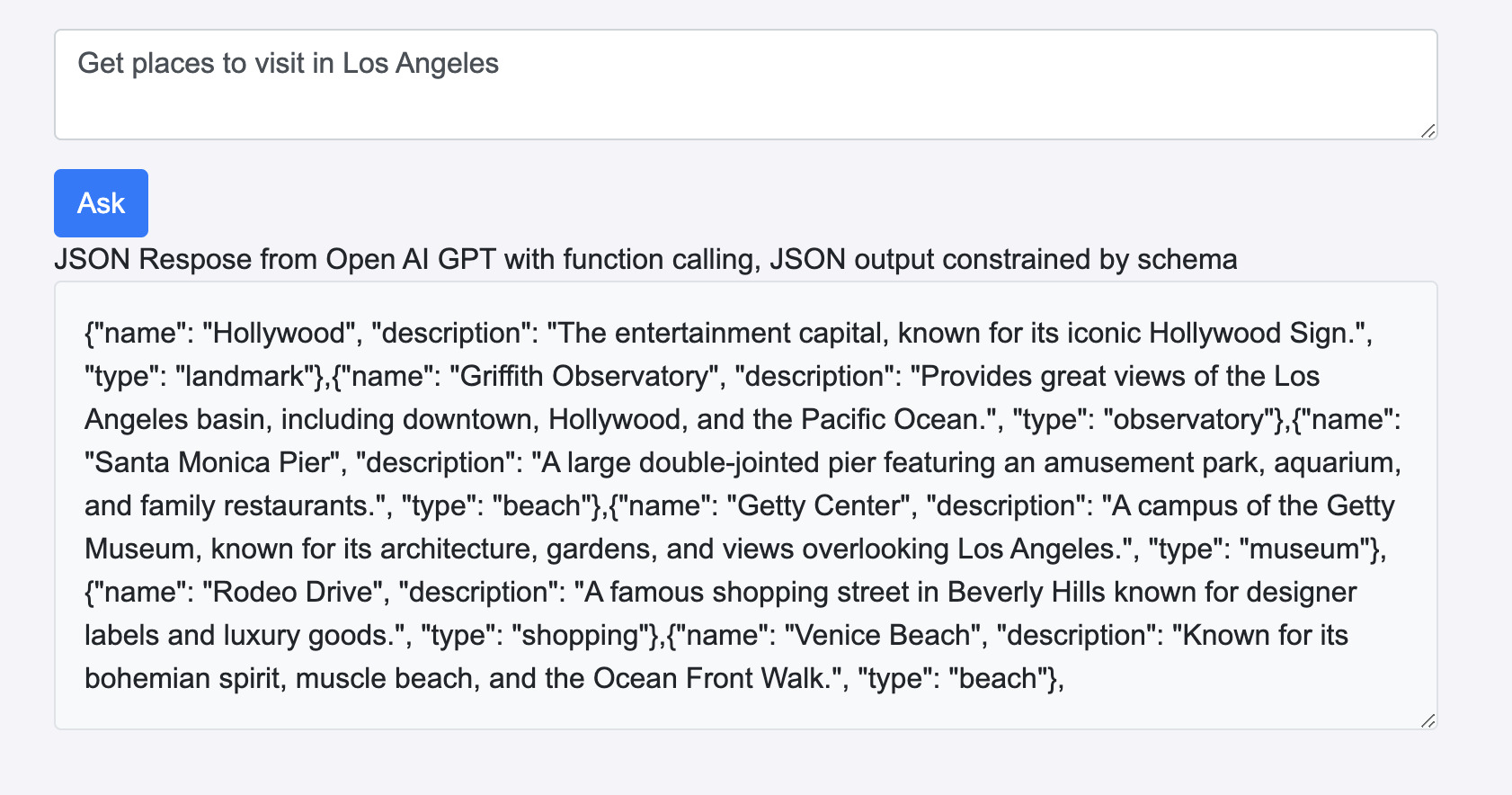

app.pay attention(PORT, () => {});This code launches an internet app server, and each time the consumer submits a question, the server responds with knowledge in JSON format. This response can then be parsed inside the consumer interface, making it potential to combine third-party widgets for creating actionable, interactive parts.

JSON response from OpenAIGPT with operate calling
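When the model returns more than one tool call, it can be more convenient to hand the user interface an array of parsed objects than a concatenated string. The sketch below shows one way to do that, assuming the assistant message shape read by the route handler above; `extractToolArguments` and the sample message are hypothetical.

```javascript
// Hypothetical helper: collects the arguments of every tool call as parsed objects.
function extractToolArguments(message) {
  const toolCalls = message.tool_calls || [];
  // Each call carries its arguments as a JSON-encoded string.
  return toolCalls.map((call) => JSON.parse(call.function.arguments));
}

// Made-up assistant message with two calls to the same function.
const message = {
  role: "assistant",
  content: null,
  tool_calls: [
    {
      id: "call_1",
      type: "function",
      function: {
        name: "get_places_in_city",
        arguments: '{"name":"Louvre","description":"Art museum","type":"museum"}'
      }
    },
    {
      id: "call_2",
      type: "function",
      function: {
        name: "get_places_in_city",
        arguments: '{"name":"Eiffel Tower","description":"Iron lattice tower","type":"landmark"}'
      }
    }
  ]
};

const places = extractToolArguments(message);
console.log(places.map((p) => p.name));
```

A message without tool calls yields an empty array, so the caller does not need a separate null check.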

Conclusion

This article demonstrates, with examples, how to modify API calls to OpenAI to request JSON-formatted responses. This approach significantly improves the usability of LLM output, enabling more intuitive and actionable user interfaces. By specifying the response_format parameter or by using function calling, together with appropriate system messages, developers can have LLM responses returned in a structured JSON format.

Generating JSON output from LLMs in this way facilitates seamless integration with other applications and allows for more sophisticated parsing and manipulation of AI-generated content. As AI continues to evolve, the ability to work with structured data formats like JSON will become increasingly valuable, enabling developers to create more powerful and user-friendly AI-driven applications.