What Is Data Governance?

Data governance is a framework developed through the collaboration of people with varied roles and responsibilities. The framework aims to establish processes, policies, procedures, standards, and metrics that help organizations achieve their goals. These goals include providing reliable data for business operations, setting accountability and authoritativeness, creating accurate analytics to assess performance, complying with regulatory requirements, safeguarding data, ensuring data privacy, and supporting the data management life cycle.

Creating a Data Governance Board or Steering Committee is a good first step when integrating a Data Governance program and framework. An organization’s governance framework should be circulated to all staff and management, so everyone understands the changes taking place.

The following basic principles are needed to successfully govern data and analytics applications:

- A focus on business values and the organization’s goals

- An agreement on who is responsible for data and who makes decisions

- A model emphasizing data curation and data lineage for Data Governance

- Decision-making that is transparent and includes ethical principles

- Core governance components that include data security and risk management

- Ongoing training, with monitoring and feedback on its effectiveness

- Transforming the workplace into a collaborative culture, using Data Governance to encourage broad participation

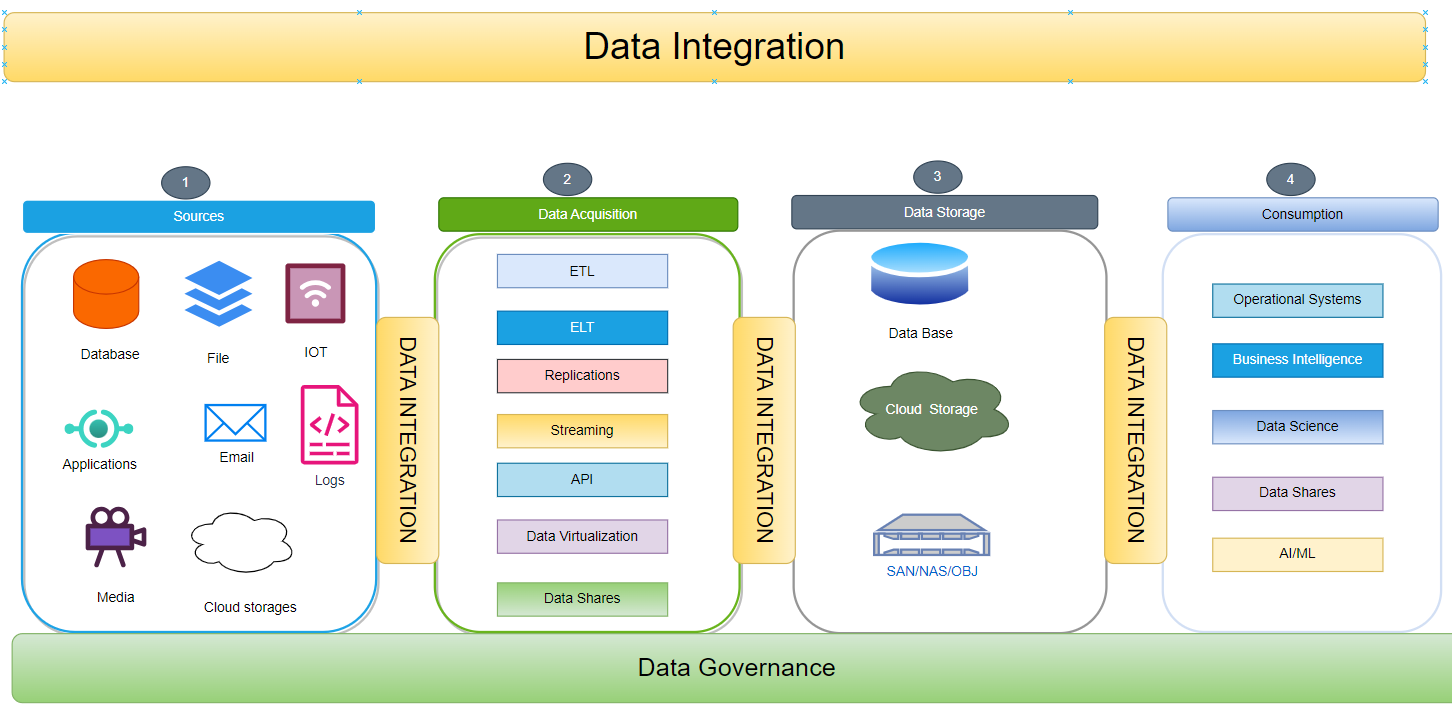

What Is Data Integration?

Data integration is the process of combining and harmonizing data from multiple sources into a unified, coherent format that various users can consume, for example in operational, analytical, and decision-making applications.

The data integration process consists of four primary components:

1. Source Systems

Source systems, such as databases, file systems, Internet of Things (IoT) devices, media repositories, and cloud data storage, provide the raw information that must be integrated. The heterogeneity of these source systems results in data that can be structured, semi-structured, or unstructured.

- Databases: Centralized or distributed repositories designed to store, organize, and manage structured data. Examples include relational database management systems (RDBMS) like MySQL, PostgreSQL, and Oracle. Data is typically stored in tables with predefined schemas, ensuring consistency and ease of querying.

- File systems: Hierarchical structures that organize and store files and directories on disk drives or other storage media. Common file systems include NTFS (Windows), APFS (macOS), and EXT4 (Linux). Data can be of any type, including structured, semi-structured, or unstructured.

- Internet of Things (IoT) devices: Physical devices (sensors, actuators, etc.) that are embedded with electronics, software, and network connectivity. IoT devices collect, process, and transmit data, enabling real-time monitoring and control. Data generated by IoT devices can be structured (e.g., sensor readings), semi-structured (e.g., device configuration), or unstructured (e.g., video footage).

- Media repositories: Platforms or systems designed to manage and store various types of media files. Examples include content management systems (CMS) and digital asset management (DAM) systems. Data in media repositories can include images, videos, audio files, and documents.

- Cloud data storage: Services that provide on-demand storage and management of data online. Popular cloud data storage platforms include Amazon S3, Microsoft Azure Blob Storage, and Google Cloud Storage. Data in cloud storage can be accessed and processed from anywhere with an internet connection.

2. Data Acquisition

Data acquisition involves extracting and collecting information from source systems. Different methods can be employed based on the source system’s nature and specific requirements. These methods include batch processes using technologies like ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform), APIs (Application Programming Interfaces), streaming, virtualization, data replication, and data sharing.

- Batch processes: Batch processes are commonly used for structured data. In this method, data is accumulated over a period of time and processed in bulk. This approach is advantageous for large datasets and helps ensure data consistency and integrity.

- Application Programming Interface (API): APIs serve as a communication channel between applications and data sources. They allow for controlled and secure access to data. APIs are commonly used to integrate with third-party systems and enable data exchange.

- Streaming: Streaming involves continuous data ingestion and processing. It is commonly used for real-time data sources such as sensor networks, social media feeds, and financial markets. Streaming technologies enable immediate analysis and decision-making based on the latest data.

- Virtualization: Data virtualization provides a logical view of data without physically moving or copying it. It enables seamless access to data from multiple sources, regardless of their location or format. Virtualization is often used for data integration and reducing data silos.

- Data replication: Data replication involves copying data from one system to another. It enhances data availability and redundancy. Replication can be synchronous, where data is copied in real time as it is written, or asynchronous, where data is copied after the fact, often at regular intervals.

- Data sharing: Data sharing involves granting authorized users or systems access to data. It facilitates collaboration, enables insights from multiple perspectives, and supports informed decision-making. Data sharing can be implemented through various mechanisms such as data portals, data lakes, and federated databases.

3. Data Storage

Once data has been acquired, storing it in a repository is crucial for efficient access and management. Various data storage options are available, each tailored to specific needs. These options include:

- Database Management Systems (DBMS): Relational Database Management Systems (RDBMS) are software systems designed to organize, store, and retrieve data in a structured format. These systems offer advanced features such as data security, data integrity, and transaction management. Examples of popular RDBMS include MySQL, Oracle, and PostgreSQL. NoSQL databases, such as MongoDB and Cassandra, are designed to store and manage semi-structured data. They offer flexibility and scalability, making them suitable for handling large amounts of data that may not fit well into a relational model.

- Cloud storage services: Cloud storage services offer scalable and cost-effective storage solutions in the cloud. They provide on-demand access to data from anywhere with an internet connection. Popular cloud storage services include Amazon S3, Microsoft Azure Storage, and Google Cloud Storage.

- Data lakes: Data lakes are large repositories of raw data, including unstructured data, kept in its native format. They are often used for big data analytics and machine learning. Data lakes can be implemented using Hadoop Distributed File System (HDFS) or cloud-based storage services.

- Delta lakes: Delta lakes are a type of data lake that supports ACID transactions and schema evolution. They provide a reliable and scalable data storage solution for data engineering and analytics workloads.

- Cloud data warehouse: Cloud data warehouses are cloud-based data storage solutions designed for business intelligence and analytics. They provide fast query performance and scalability for large volumes of structured data. Examples include Amazon Redshift, Google BigQuery, and Snowflake.

- Big data files: Big data files are large collections of data stored in a single file. They are often used for data analysis and processing tasks. Common big data file formats include Parquet, Apache Avro, and Apache ORC.

- On-premises Storage Area Networks (SAN): SANs are dedicated high-speed networks designed for data storage. They offer fast data transfer speeds and provide centralized storage for multiple servers. SANs are typically used in enterprise environments with large storage requirements.

- Network Attached Storage (NAS): NAS devices are file-level storage systems that connect to a network and provide shared storage space for multiple clients. They are often used in small and medium-sized businesses and provide easy access to data from various devices.

Choosing the right data storage option depends on factors such as data size, data type, performance requirements, security needs, and cost considerations. Organizations may use a combination of these storage options to meet their specific data management needs.

4. Consumption

This is the final stage of the data integration lifecycle, where the integrated data is consumed by various applications, data analysts, business analysts, data scientists, AI/ML models, and business processes. The data can be consumed in various forms and through various channels, including:

- Operational systems: The integrated data can be consumed by operational systems using APIs (Application Programming Interfaces) to support day-to-day operations and decision-making. For example, a customer relationship management (CRM) system may consume data about customer interactions, purchases, and preferences to provide personalized experiences and targeted marketing campaigns.

- Analytics: The integrated data can be consumed by analytics applications and tools for data exploration, analysis, and reporting. Data analysts and business analysts use these tools to identify trends, patterns, and insights from the data, which can help inform business decisions and strategies.

- Data sharing: The integrated data can be shared with external stakeholders, such as partners, suppliers, and regulators, through data-sharing platforms and mechanisms. Data sharing enables organizations to collaborate and exchange information, which can lead to improved decision-making and innovation.

- Kafka: Apache Kafka is a distributed streaming platform that can be used to consume and process real-time data. Integrated data can be streamed into Kafka, where it can be consumed by applications and services that require real-time data processing capabilities.

- AI/ML: The integrated data can be consumed by AI (Artificial Intelligence) and ML (Machine Learning) models for training and inference. AI/ML models use the data to learn patterns and make predictions, which can be used for tasks such as image recognition, natural language processing, and fraud detection.

The consumption of integrated data empowers businesses to make informed decisions, optimize operations, improve customer experiences, and drive innovation. By providing a unified and consistent view of data, organizations can unlock the full potential of their data assets and gain a competitive advantage.

What Are Data Integration Architecture Patterns?

In this section, we will delve into an array of integration patterns, each tailored to provide seamless integration solutions. These patterns act as structured frameworks, facilitating connections and data exchange between diverse systems. Broadly, they fall into three categories:

- Real-Time Data Integration

- Near Real-Time Data Integration

- Batch Data Integration

1. Real-Time Data Integration

In various industries, real-time data ingestion serves as a pivotal element. Let’s explore some practical real-life illustrations of its applications:

- Social media feeds display the latest posts, trends, and activities.

- Smart homes use real-time data to automate tasks.

- Banks use real-time data to monitor transactions and investments.

- Transportation companies use real-time data to optimize delivery routes.

- Online retailers use real-time data to personalize shopping experiences.

Understanding real-time data ingestion mechanisms and architectures is vital for choosing the best approach for your organization.

There is a wide range of real-time data integration architectures to choose from. The most commonly used are:

- Streaming-Based Architecture

- Event-Driven Integration Architecture

- Lambda Architecture

- Kappa Architecture

Each of these architectures offers its unique advantages and use cases, catering to specific requirements and operational needs.

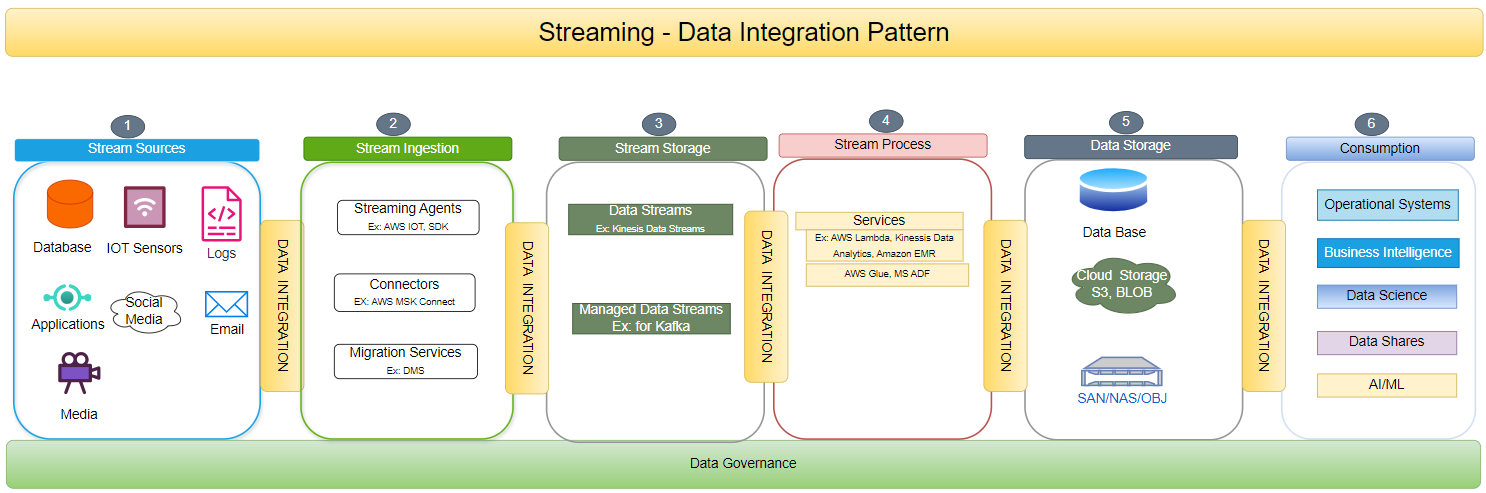

a. Streaming-Based Data Integration Architecture

In a streaming-based architecture, data streams are continuously ingested as they arrive. Tools like Apache Kafka are employed for real-time data collection, processing, and distribution.

Because ingestion is continuous, this architecture is well suited to high-velocity, high-volume data where data quality and low-latency insights are priorities.
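The idea of processing each record as it arrives can be sketched in a few lines of Python. This is a minimal illustration, not a Kafka client: `sensor_stream` is a hypothetical stand-in for a broker consumer, and `rolling_average` is an assumed example of per-event processing that keeps only a small window in memory.

```python
from collections import deque
from typing import Iterable, Iterator


def sensor_stream() -> Iterator[dict]:
    """Hypothetical source: yields readings one at a time, as a broker consumer would."""
    readings = [21.0, 21.5, 35.0, 22.0, 21.8]
    for offset, value in enumerate(readings):
        yield {"offset": offset, "temperature": value}


def rolling_average(events: Iterable[dict], window: int = 3) -> Iterator[dict]:
    """Process each event as it arrives, keeping only a small sliding window in memory."""
    recent = deque(maxlen=window)
    for event in events:
        recent.append(event["temperature"])
        yield {**event, "rolling_avg": sum(recent) / len(recent)}


if __name__ == "__main__":
    for enriched in rolling_average(sensor_stream()):
        print(enriched)
```

Because both functions are generators, no event waits for a later one before it is processed, which is the property that distinguishes streaming from batch handling.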

The diagram below illustrates the various components involved in a streaming data integration architecture.

b. Event-Driven Integration Architecture

An event-driven architecture is a highly scalable and efficient approach for modern applications and microservices. This architecture responds to specific events or triggers within a system by ingesting data as the events occur, enabling the system to react quickly to changes. This allows for efficient handling of large volumes of data from various sources.
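A rough sketch of the publish/subscribe mechanics behind this pattern follows. The `EventBus` class, the `order_placed` event name, and the two handlers are all hypothetical and run in a single process; a production system would normally put a message broker between publishers and subscribers.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Minimal in-process publish/subscribe bus (illustrative, not a real broker)."""

    def __init__(self) -> None:
        self._handlers = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # Every handler registered for this event type reacts immediately.
        for handler in self._handlers[event_type]:
            handler(payload)


inventory = {"sku-1": 10}
audit_log = []


def reserve_stock(order: dict) -> None:
    inventory[order["sku"]] -= order["quantity"]


def record_audit(order: dict) -> None:
    audit_log.append(f"order {order['order_id']} placed")


bus = EventBus()
bus.subscribe("order_placed", reserve_stock)
bus.subscribe("order_placed", record_audit)
bus.publish("order_placed", {"order_id": 1, "sku": "sku-1", "quantity": 3})
```

The publisher knows nothing about the handlers, so new consumers can be added by subscribing without changing the code that emits the event.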

c. Lambda Integration Architecture

The Lambda architecture takes a hybrid approach, combining the strengths of batch and real-time data ingestion. It comprises two parallel data pipelines, each with a distinct purpose. The batch layer handles the processing of historical data, while the speed layer addresses real-time data. This design provides low-latency insights while maintaining data accuracy and consistency, even in extensive distributed systems.
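The two pipelines and the point where their results meet can be illustrated with a toy page-view counter. The event lists and function names below are assumptions made for this sketch; in practice each layer would be a separate system, with a serving layer merging their outputs.

```python
from collections import Counter

# Hypothetical page-view events; the first three have already been batch-processed.
historical_events = ["home", "pricing", "home"]
recent_events = ["home", "docs"]


def build_batch_view(events):
    """Batch layer: periodically recompute an accurate view from all historical data."""
    return Counter(events)


def update_speed_view(view, event):
    """Speed layer: incrementally update a view for events the batch has not covered yet."""
    view[event] += 1
    return view


def query(batch_view, speed_view, page):
    """Serving step: merge both views so answers include the most recent events."""
    return batch_view[page] + speed_view[page]


batch_view = build_batch_view(historical_events)
speed_view = Counter()
for event in recent_events:
    update_speed_view(speed_view, event)

print(query(batch_view, speed_view, "home"))  # 3
```

When the next batch run absorbs the recent events, the speed view for that period can be discarded, which is how the batch layer eventually corrects any approximation made in the speed layer.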

d. Kappa Data Integration Architecture

Kappa architecture is a simplified variation of Lambda architecture specifically designed for real-time data processing. It employs a single stream processing engine, such as Apache Flink or Apache Kafka Streams, to handle both historical and real-time data, streamlining the data ingestion pipeline. This approach minimizes complexity and maintenance costs while delivering fast and accurate insights.
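The key property, one code path for both historical and live data, can be sketched as follows. The in-memory `event_log` list is a stand-in for a retained stream such as a Kafka topic, and the `process` and `replay` names are illustrative assumptions rather than any engine's API.

```python
from collections import Counter

# Append-only event log (stands in for a retained stream such as a Kafka topic).
event_log = [
    {"user": "ana", "amount": 30},
    {"user": "ben", "amount": 20},
    {"user": "ana", "amount": 5},
]


def process(state, event):
    """The single processing path used for both replayed and live events."""
    state[event["user"]] += event["amount"]
    return state


def replay(log):
    """Rebuild state from scratch by re-reading the log from the first offset."""
    state = Counter()
    for event in log:
        process(state, event)
    return state


totals = replay(event_log)

# A live event is appended to the log and handled by the same function.
live_event = {"user": "ben", "amount": 10}
event_log.append(live_event)
process(totals, live_event)

print(dict(totals))  # {'ana': 35, 'ben': 30}
```

If the processing logic changes, historical results are regenerated by replaying the log through the new version of `process`, so there is no separate batch codebase to keep in sync.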

2. Near Real-Time Data Integration

In near real-time data integration, data is processed and made available shortly after it is generated, which is critical for applications requiring timely data updates. Several patterns are used for near real-time data integration; some of them are highlighted below:

a. Change Data Capture: Data Integration

Change Data Capture (CDC) is a method of capturing changes that occur in a source system’s data and propagating those changes to a target system.
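One simple way to illustrate the capture-and-propagate cycle is to compare two snapshots of a table keyed by primary key. This snapshot-diff approach is an assumption chosen for brevity; CDC tools often read the database's transaction log instead. The function names and sample rows are hypothetical.

```python
def capture_changes(before, after):
    """Compare two snapshots keyed by primary key and emit change events."""
    changes = []
    for key, row in after.items():
        if key not in before:
            changes.append({"op": "insert", "key": key, "row": row})
        elif before[key] != row:
            changes.append({"op": "update", "key": key, "row": row})
    for key in before:
        if key not in after:
            changes.append({"op": "delete", "key": key, "row": None})
    return changes


def apply_changes(target, changes):
    """Propagate captured changes to the target store."""
    for change in changes:
        if change["op"] == "delete":
            target.pop(change["key"], None)
        else:
            target[change["key"]] = change["row"]
    return target


source_before = {1: {"name": "Ana"}, 2: {"name": "Ben"}}
source_after = {1: {"name": "Ana M."}, 3: {"name": "Cy"}}
target = dict(source_before)

changes = capture_changes(source_before, source_after)
apply_changes(target, changes)
print([c["op"] for c in changes])  # ['update', 'insert', 'delete']
```

Only the three change events cross the wire, not the full table, which is what keeps the target close to the source at low cost.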

b. Data Replication: Data Integration Architecture

With the Data Replication Integration Architecture, two databases can seamlessly and efficiently replicate data based on specific requirements. This architecture ensures that the target database stays in sync with the source database, providing both systems with up-to-date and consistent data. As a result, the replication process is smooth, allowing for effective data transfer and synchronization between the two databases.

c. Data Virtualization: Data Integration Architecture

In Data Virtualization, a virtual layer integrates disparate data sources into a unified view. It eliminates data replication, dynamically routes queries to source systems based on factors like data locality and performance, and provides a unified metadata layer. The virtual layer simplifies data management, improves query performance, and facilitates data governance and advanced integration scenarios. It empowers organizations to leverage their data assets effectively and unlock their full potential.
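A very reduced sketch of a virtual layer is shown below, assuming two hypothetical sources (a CRM lookup and a list of billing rows) held in memory. The `VirtualCustomerView` class is illustrative only and omits the query routing and metadata management a real virtualization product would provide; the point is that nothing is copied and every query reads the sources directly.

```python
class VirtualCustomerView:
    """Unified view over two hypothetical sources; rows are fetched at query time, not copied."""

    def __init__(self, crm_rows, billing_rows):
        # References to the live sources are kept; no data is materialized here.
        self._crm = crm_rows
        self._billing = billing_rows

    def get(self, customer_id):
        profile = self._crm.get(customer_id)
        if profile is None:
            return None
        invoices = [r for r in self._billing if r["customer_id"] == customer_id]
        return {
            "customer_id": customer_id,
            "name": profile["name"],
            "total_billed": sum(r["amount"] for r in invoices),
        }


crm = {7: {"name": "Ana"}}
billing = [{"customer_id": 7, "amount": 40.0}, {"customer_id": 7, "amount": 60.0}]
view = VirtualCustomerView(crm, billing)

print(view.get(7))  # {'customer_id': 7, 'name': 'Ana', 'total_billed': 100.0}
billing.append({"customer_id": 7, "amount": 5.0})  # a change in the source...
print(view.get(7)["total_billed"])  # ...is visible immediately: 105.0
```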

3. Batch Process: Data Integration

Batch Data Integration involves consolidating and conveying a collection of messages or files in a batch to minimize network traffic and overhead. Batch processing gathers data over a period of time and then processes it in batches. This approach is particularly useful when handling large data volumes or when the processing demands substantial resources. Additionally, this pattern enables the replication of master data to replica storage for analytical purposes. The advantage of this process is the transmission of refined results. The traditional batch process data integration patterns are:

Traditional ETL Architecture: Data Integration Architecture

This architectural design adheres to the conventional Extract, Transform, and Load (ETL) process. Within this architecture, there are several components:

- Extract: Data is obtained from a variety of source systems.

- Transform: Data undergoes a transformation process to convert it into the desired format.

- Load: Transformed data is then loaded into the designated target system, such as a data warehouse.
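The three steps above can be sketched with the Python standard library. The CSV content, the `orders` table, and the cleaning rules are assumptions made for illustration; an in-memory SQLite database stands in for the data warehouse.

```python
import csv
import io
import sqlite3

RAW_CSV = "order_id,amount,currency\n1, 19.99 ,usd\n2,5.00,USD\n3,,usd\n"


def extract(text):
    """Extract: read raw rows from the source (an in-memory CSV here)."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop rows without an amount and normalize types and casing."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue
        cleaned.append((int(row["order_id"]), float(amount), row["currency"].upper()))
    return cleaned


def load(conn, rows):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(RAW_CSV)))
print(conn.execute("SELECT COUNT(*), SUM(amount) FROM orders").fetchone())
```

Keeping each stage as its own function makes it straightforward to swap the source or target, or to reorder the last two stages for an ELT variant.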

Incremental Batch Processing: Data Integration Architecture

This architecture optimizes processing by focusing only on data that is new or modified since the previous batch cycle. This approach enhances efficiency compared to full batch processing and alleviates the burden on the system’s resources.
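A common way to find "new or modified" rows is a high-water mark on a last-modified column. The sketch below assumes each source row carries an `updated_at` value (plain integers here for simplicity); the function and field names are hypothetical.

```python
def incremental_batch(source_rows, watermark):
    """Return rows modified after the watermark, plus the new watermark."""
    new_rows = [r for r in source_rows if r["updated_at"] > watermark]
    if new_rows:
        watermark = max(r["updated_at"] for r in new_rows)
    return new_rows, watermark


source = [
    {"id": 1, "updated_at": 100},
    {"id": 2, "updated_at": 105},
]

# First cycle: everything is new relative to the initial watermark.
batch_1, watermark = incremental_batch(source, watermark=0)

# Between cycles, one row changes and one row is added.
source[0]["updated_at"] = 110
source.append({"id": 3, "updated_at": 112})

# Second cycle: only the changed and added rows are picked up.
batch_2, watermark = incremental_batch(source, watermark)
print([r["id"] for r in batch_2], watermark)  # [1, 3] 112
```

Note that a watermark on a modification timestamp does not detect deleted rows; those need a separate mechanism such as soft-delete flags or change data capture.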

Micro Batch Processing: Data Integration Architecture

In Micro Batch Processing, small batches of data are processed at regular, frequent intervals. It strikes a balance between traditional batch processing and real-time processing. This approach significantly reduces latency compared to conventional batch processing techniques.
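The grouping step can be sketched as a generator that cuts an incoming stream into small batches. For a deterministic example the batches here are cut by size; real micro-batch engines typically cut by a short time interval instead. `micro_batches` and `process_batch` are hypothetical names.

```python
from itertools import islice


def micro_batches(events, batch_size):
    """Group an incoming stream of events into small fixed-size batches."""
    iterator = iter(events)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def process_batch(batch):
    """Placeholder for per-batch work: here, summing the values."""
    return sum(batch)


results = [process_batch(b) for b in micro_batches(range(1, 8), batch_size=3)]
print(results)  # [6, 15, 7]
```

A smaller `batch_size` lowers latency at the cost of more per-batch overhead, which is the trade-off this pattern tunes.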

Partitioned Batch Processing: Data Integration Architecture

In the partitioned batch processing approach, large datasets are divided into smaller, manageable partitions. These partitions can then be processed independently, frequently in parallel. This technique can reduce processing time significantly, making it an attractive choice for handling large-scale data.
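As a small illustration, the sketch below partitions rows by a key and processes the partitions concurrently. The `customer_id` key, the modulo partitioning rule, and the use of a thread pool are assumptions chosen to keep the example short; CPU-bound work would more likely use separate processes or a distributed engine.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(rows, num_partitions):
    """Assign each row to a partition by key so partitions can be processed independently."""
    partitions = [[] for _ in range(num_partitions)]
    for row in rows:
        partitions[row["customer_id"] % num_partitions].append(row)
    return partitions


def process_partition(rows):
    """Per-partition work: total amount per customer."""
    totals = {}
    for row in rows:
        totals[row["customer_id"]] = totals.get(row["customer_id"], 0) + row["amount"]
    return totals


rows = [{"customer_id": i % 5, "amount": i} for i in range(20)]

with ThreadPoolExecutor(max_workers=4) as pool:
    partial_results = list(pool.map(process_partition, partition(rows, 4)))

# Each customer lives in exactly one partition, so results merge without conflicts.
totals = {}
for partial in partial_results:
    totals.update(partial)
print(totals[0])  # 0 + 5 + 10 + 15 = 30
```

Partitioning by the same key that the aggregation uses is what makes the final merge trivial; a different key would require combining partial totals across partitions.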

Conclusion

Here are the main points to take away from this article:

- It is important to have a strong data governance framework in place when integrating data from different source systems.

- The data integration patterns should be chosen based on the characteristics of the use case, such as data volume, velocity, and veracity.

- There are three types of data integration styles (real-time, near real-time, and batch), and we should choose the appropriate model based on different parameters.