Retrieval-Augmented Generation (RAG) systems are designed to improve the response quality of a large language model (LLM). When a user submits a query, the RAG system extracts relevant information from a vector database and passes it to the LLM as context. The LLM then uses this context to generate a response for the user. This process significantly improves the quality of LLM responses and reduces “hallucination.”

In this workflow, a RAG system has two main components:

- Retriever: It identifies the most relevant information in the vector database using similarity search. The retriever encodes the user query and the documents into vectors and uses a similarity measure to find the closest matches. This stage is the most critical part of any RAG system because it sets the foundation for the quality of the final output.

- Response generator: Once the relevant documents are retrieved, the user query and the retrieved documents are passed to the LLM. The generator (LLM) uses the context provided by the retriever, together with the original query, to generate a coherent, relevant, and accurate response.
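The two stages can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the document vectors are toy, hand-written 2-D "embeddings" rather than outputs of a real embedding model, the search is a brute-force cosine-similarity scan rather than a vector database index, and `retrieve` and `build_prompt` are hypothetical helper names. The actual LLM call is left out.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either vector is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query_vec: list[float], doc_vecs: dict[str, list[float]], k: int = 3) -> list[str]:
    """Retriever: return the IDs of the k documents most similar to the query vector."""
    ranked = sorted(
        doc_vecs,
        key=lambda doc_id: cosine_similarity(query_vec, doc_vecs[doc_id]),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(question: str, passages: list[str]) -> str:
    """Generator input: the retrieved passages as context, followed by the user question."""
    context = "\n\n".join(passages)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"

# Toy 2-D "embeddings" for three documents and one query.
doc_vecs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.7, 0.7]}
top_ids = retrieve([1.0, 0.1], doc_vecs, k=2)
print(top_ids)  # ['a', 'c']
```

In a real system the prompt returned by `build_prompt` would be sent to the LLM, and the vector database would replace the linear scan in `retrieve`.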

The effectiveness and performance of any RAG system depend heavily on these two core components: the retriever and the generator. The retriever must efficiently identify and retrieve the most relevant documents, while the generator should use the retrieved information to produce responses that are coherent, relevant, and accurate. Rigorous evaluation of both components is crucial to ensure the performance and reliability of the RAG system before deployment.

Evaluating RAG

To evaluate a RAG system, we commonly use two types of evaluations:

- Retrieval Evaluation

- Response Evaluation

Unlike traditional machine learning techniques, where there are well-defined quantitative metrics (such as Gini, R-squared, AIC, BIC, confusion matrix, etc.), evaluating RAG systems is more complex. This complexity arises because the responses generated by RAG systems are unstructured text, so assessing their performance accurately requires a combination of qualitative and quantitative metrics.

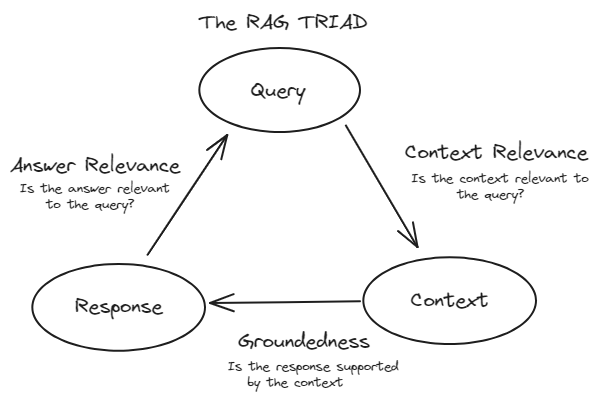

TRIAD Framework

To evaluate RAG systems effectively, we commonly follow the TRIAD framework. This framework consists of three major components:

- Context Relevance: This component evaluates the retrieval part of the RAG system. It measures how accurately the relevant documents were retrieved from the large dataset. Metrics like precision, recall, MRR, and MAP are used here.

- Faithfulness (Groundedness): This component falls under response evaluation. It checks whether the generated response is factually accurate and grounded in the retrieved documents. Methods such as human evaluation, automated fact-checking tools, and consistency checks are used to assess faithfulness.

- Answer Relevance: This is also part of response evaluation. It measures how well the generated response addresses the user’s query and provides useful information. Metrics like BLEU, ROUGE, METEOR, and embedding-based evaluations are used.

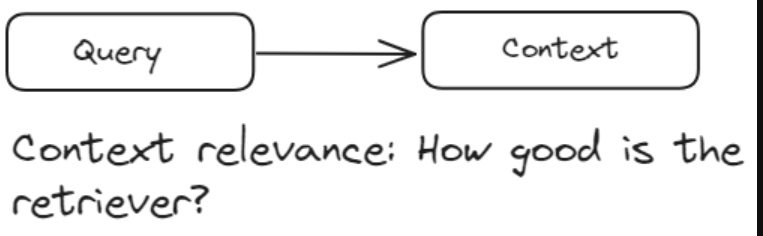

Retrieval Evaluation

Retrieval evaluations are applied to the retriever component of a RAG system, which typically uses a vector database. These evaluations measure how effectively the retriever identifies and ranks relevant documents in response to a user query. The primary goal of retrieval evaluation is to assess context relevance: how well the retrieved documents align with the user’s query. This ensures that the context provided to the generation component is pertinent and accurate.

Each of the following metrics offers a different perspective on the quality of the retrieved documents, and together they give a comprehensive picture of context relevance.

Precision

Precision measures the accuracy of the retrieved documents. It is the ratio of the number of relevant documents retrieved to the total number of documents retrieved. It is defined as:

$$\text{Precision} = \frac{|\text{Relevant} \cap \text{Retrieved}|}{|\text{Retrieved}|}$$

In other words, precision evaluates how many of the documents retrieved by the system are actually relevant to the user’s query. For example, if the retriever retrieves 10 documents and 7 of them are relevant, the precision is 0.7, or 70%.
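A minimal Python sketch of the precision definition, reproducing the example of 10 retrieved documents with 7 relevant. Document IDs are plain strings, and the set of relevant documents is assumed to come from hand-labeled data; returning 0.0 when nothing is retrieved is a convention chosen for this sketch.

```python
def precision(retrieved: list[str], relevant: set[str]) -> float:
    """Fraction of the retrieved documents that are relevant."""
    if not retrieved:
        return 0.0  # convention: no retrieved documents means precision 0
    hits = sum(1 for doc in retrieved if doc in relevant)
    return hits / len(retrieved)

# 10 documents retrieved, of which doc0..doc6 (7 documents) are relevant.
retrieved = [f"doc{i}" for i in range(10)]
relevant = {f"doc{i}" for i in range(7)}
print(precision(retrieved, relevant))  # 0.7
```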

Precision evaluates: “Out of all the documents that the system retrieved, how many were actually relevant?”

Precision is especially important when presenting irrelevant information can have negative consequences. For example, high precision is crucial in a medical information retrieval system, because providing irrelevant medical documents could lead to misinformation and potentially harmful outcomes.

Recall

Recall measures the comprehensiveness of the retrieved documents. It is the ratio of the number of relevant documents retrieved to the total number of relevant documents in the database for the given query. It is defined as:

$$\text{Recall} = \frac{|\text{Relevant} \cap \text{Retrieved}|}{|\text{Relevant}|}$$

In other words, recall evaluates how many of the relevant documents that exist in the database were successfully retrieved by the system.

Recall evaluates: “Out of all the relevant documents that exist in the database, how many did the system manage to retrieve?”
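The recall definition can be sketched the same way. The query below is hypothetical: 8 relevant documents exist, and the retriever returns 5 documents, 4 of which are relevant. As with precision, the full set of relevant documents is assumed to be known from labeled data, which in practice is often the hardest part of measuring recall.

```python
def recall(retrieved: list[str], relevant: set[str]) -> float:
    """Fraction of all relevant documents that appear in the retrieved list."""
    if not relevant:
        return 0.0  # convention: nothing to find means recall 0
    hits = len(set(retrieved) & relevant)
    return hits / len(relevant)

relevant = {f"doc{i}" for i in range(8)}                 # 8 relevant documents exist
retrieved = ["doc0", "doc1", "doc2", "doc3", "doc42"]    # 4 of the 5 results are relevant
print(recall(retrieved, relevant))  # 0.5
```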

Recall is critical in situations where missing relevant information could be costly. For instance, high recall is essential in a legal information retrieval system, because failing to retrieve a relevant legal document could lead to incomplete case research and potentially affect the outcome of legal proceedings.

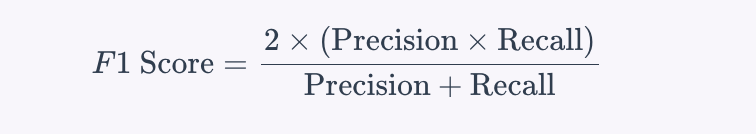

Balance Between Precision and Recall

Balancing precision and recall is often necessary, as improving one can reduce the other. The goal is to find the balance that suits the specific needs of the application. This balance is often quantified using the F1 score, which is the harmonic mean of precision and recall:

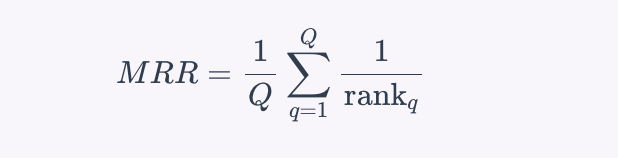
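As a small illustration, the F1 score is $F_1 = 2PR/(P + R)$. The sketch below (plain Python, hypothetical function name) shows why the harmonic mean is used: a retriever with perfect precision but only 10% recall scores far lower than the arithmetic mean of the two would suggest.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

print(f1_score(0.7, 0.5))  # ≈ 0.583
print(f1_score(1.0, 0.1))  # ≈ 0.182, versus an arithmetic mean of 0.55
```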

Mean Reciprocal Rank (MRR)

Mean Reciprocal Rank (MRR) is a metric that evaluates the effectiveness of the retrieval system by considering the rank position of the first relevant document. It is particularly useful when only the first relevant document is of primary interest. The reciprocal rank is the inverse of the rank at which the first relevant document is found, and MRR is the average of these reciprocal ranks across multiple queries. The formula for MRR is:

$$\text{MRR} = \frac{1}{Q} \sum_{q=1}^{Q} \frac{1}{\text{rank}_q}$$

Where $Q$ is the number of queries and $\text{rank}_q$ is the rank position of the first relevant document for the $q$-th query.

MRR evaluates: “On average, how quickly is the first relevant document retrieved in response to a user query?”

For example, in a RAG-based question-answering system, MRR is crucial because it reflects how quickly the system can present the correct answer to the user. If the correct answer appears at the top of the list more frequently, the MRR value will be higher, indicating a more effective retrieval system.
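A minimal sketch of MRR over a batch of queries. The three queries and their relevance labels are hypothetical; a query whose results contain no relevant document contributes a reciprocal rank of 0, which is a common convention rather than something the definition above spells out.

```python
def mean_reciprocal_rank(ranked_results: list[list[str]], relevant_per_query: list[set[str]]) -> float:
    """Average over queries of 1 / (rank of the first relevant document)."""
    if not ranked_results:
        return 0.0
    total = 0.0
    for ranked, relevant in zip(ranked_results, relevant_per_query):
        for rank, doc in enumerate(ranked, start=1):
            if doc in relevant:
                total += 1.0 / rank
                break  # only the first relevant document counts
        # A query with no relevant document in its results contributes 0.
    return total / len(ranked_results)

# Three hypothetical queries: first relevant hit at rank 1, at rank 3, and never.
ranked_results = [["d1", "d2"], ["d5", "d6", "d7"], ["d8", "d9"]]
relevant_per_query = [{"d1"}, {"d7"}, {"d3"}]
print(mean_reciprocal_rank(ranked_results, relevant_per_query))  # (1 + 1/3 + 0) / 3 ≈ 0.444
```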

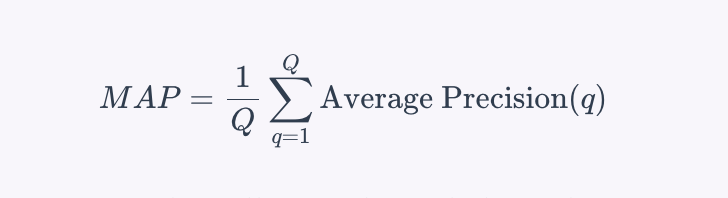

Mean Average Precision (MAP)

Mean Average Precision (MAP) is a metric that evaluates the precision of retrieval across multiple queries. It takes into account both the precision of the retrieval and the order of the retrieved documents. MAP is defined as the mean of the average precision scores for a set of queries. To calculate the average precision for a single query, precision is computed at each position in the ranked list of retrieved documents, considering only the top-K retrieved documents, and only the precision values at positions holding a relevant document contribute to the average. The formula for MAP across multiple queries is:

$$\text{MAP} = \frac{1}{Q} \sum_{q=1}^{Q} \text{AP}_q$$

Where $Q$ is the number of queries, and $\text{AP}_q$ is the average precision for query $q$.
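A minimal sketch of MAP, assuming one common convention for average precision: the precision at each rank where a relevant document appears is summed, and the sum is divided by the total number of relevant documents for the query. Other conventions divide by the number of relevant documents retrieved or by min(R, K), so scores from different tools may not be directly comparable. The optional `k` parameter applies the top-K cut-off; the queries and labels are hypothetical.

```python
from typing import Optional

def average_precision(ranked: list[str], relevant: set[str], k: Optional[int] = None) -> float:
    """AP for one query: mean of precision@rank over the ranks holding relevant documents."""
    if not relevant:
        return 0.0
    top = ranked[:k] if k is not None else ranked
    hits = 0
    precision_sum = 0.0
    for position, doc in enumerate(top, start=1):
        if doc in relevant:
            hits += 1
            precision_sum += hits / position  # precision at this cut-off
    return precision_sum / len(relevant)

def mean_average_precision(ranked_results: list[list[str]], relevant_per_query: list[set[str]],
                           k: Optional[int] = None) -> float:
    """MAP: the mean of the per-query average precision scores."""
    scores = [average_precision(r, rel, k) for r, rel in zip(ranked_results, relevant_per_query)]
    return sum(scores) / len(scores) if scores else 0.0

# Query 1: relevant docs at ranks 1 and 3 -> AP = (1/1 + 2/3) / 2 = 5/6
# Query 2: relevant doc at rank 2        -> AP = (1/2) / 1     = 1/2
ranked_results = [["a", "x", "b"], ["y", "c"]]
relevant_per_query = [{"a", "b"}, {"c"}]
print(mean_average_precision(ranked_results, relevant_per_query))  # ≈ 0.667
```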

MAP evaluates: “On average, how precise are the top-ranked documents retrieved by the system across multiple queries?”

For example, in a RAG-based search engine, MAP is crucial because it considers the precision of retrieval at different ranks. A high MAP means relevant documents appear higher in the search results, which improves the user experience by presenting the most relevant information first.

An Overview of the Retrieval Evaluations

- Precision: Quality of retrieved results

- Recall: Completeness of retrieved results

- MRR: How quickly the first relevant document is retrieved

- MAP: Comprehensive evaluation combining precision and the rank of relevant documents

Response Evaluation

Response evaluations are applied to the generation component of a RAG system. These evaluations measure how effectively the system generates responses based on the context provided by the retrieved documents. We divide response evaluations into two types:

- Faithfulness (Groundedness)

- Answer Relevance

Faithfulness (Groundedness)

Faithfulness evaluates whether the generated response is accurate and grounded in the retrieved documents. This metric is crucial because it traces the generated response back to its source, ensuring the information is based on a verifiable ground truth. In doing so, it helps prevent hallucinations, where the system generates plausible-sounding but factually incorrect responses.

The following methods are commonly used to measure faithfulness:

- Human evaluation: Experts manually assess whether the generated responses are factually accurate and correctly supported by the retrieved documents. This involves checking each response against the source documents to ensure that all claims are substantiated.

- Automated fact-checking tools: These tools compare the generated response against a database of verified facts to identify inaccuracies, providing a way to check the validity of the information without human intervention.

- Consistency checks: These evaluate whether the model consistently provides the same factual information across different queries. This ensures that the model is reliable and does not produce contradictory information.
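For a rough automated signal between full human review and dedicated fact-checking tools, one crude heuristic is to flag response sentences whose content words mostly do not appear in the retrieved context. The sketch below is that lexical proxy, not any of the methods listed above: the stopword list, the 0.6 threshold, and the function names are arbitrary assumptions, and it cannot detect paraphrased support or negations (for example, "is not in Paris" would still pass).

```python
import re

# Hypothetical, deliberately small stopword list for this sketch.
STOPWORDS = {"the", "a", "an", "is", "are", "was", "were", "of", "in", "on", "to",
             "and", "or", "it", "that", "this", "for", "with", "as", "by", "at"}

def content_words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def unsupported_sentences(response: str, context: str, threshold: float = 0.6) -> list[str]:
    """Return response sentences whose content words are mostly absent from the context."""
    context_words = content_words(context)
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        words = content_words(sentence)
        if not words:
            continue
        support = len(words & context_words) / len(words)  # share of words found in context
        if support < threshold:
            flagged.append(sentence)
    return flagged

context = "The Eiffel Tower is in Paris. It was completed in 1889."
response = "The Eiffel Tower is in Paris. It was built in Berlin by a German firm."
print(unsupported_sentences(response, context))  # ['It was built in Berlin by a German firm.']
```

Flagged sentences are candidates for closer review, not proof of hallucination.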

Answer Relevance

Answer relevance measures how well the generated response addresses the user’s query and provides useful information.

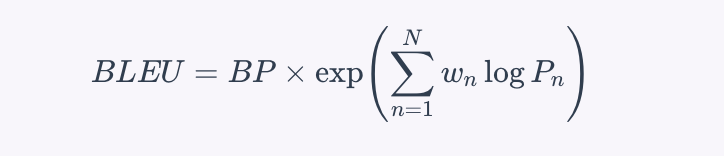

BLEU (Bilingual Evaluation Understudy)

BLEU measures the overlap between the generated response and a set of reference responses, focusing on the precision of n-grams (contiguous sequences of n words) shared by the generated and reference responses. The formula for the BLEU score is:

$$\text{BLEU} = BP \cdot \exp\left(\sum_{n=1}^{N} w_n \log P_n\right)$$

Where $BP$ is the brevity penalty that penalizes short responses, $P_n$ is the modified (clipped) n-gram precision, and $w_n$ are the weights for each n-gram order (typically uniform, $w_n = 1/N$ with $N = 4$). BLEU quantitatively measures how closely the generated response matches the reference responses.
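A minimal, unsmoothed sentence-level BLEU sketch with uniform weights $w_n = 1/N$, whitespace tokenization, clipped n-gram counts, and a brevity penalty based on the reference length closest to the candidate. These choices are assumptions for illustration; established implementations such as NLTK's `sentence_bleu` or sacreBLEU add standardized tokenization and smoothing, and should be preferred for reported numbers. Without smoothing, any $P_n$ of zero makes the score 0, which is common for short responses.

```python
import math
from collections import Counter

def ngrams(tokens: list[str], n: int) -> list[tuple[str, ...]]:
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def bleu(candidate: str, references: list[str], max_n: int = 4) -> float:
    cand = candidate.split()
    refs = [r.split() for r in references]
    if not cand:
        return 0.0
    log_sum = 0.0
    for n in range(1, max_n + 1):
        cand_counts = Counter(ngrams(cand, n))
        total = sum(cand_counts.values())
        if total == 0:
            return 0.0  # candidate shorter than n tokens
        # Clip each n-gram count by its maximum count in any single reference.
        max_ref: Counter = Counter()
        for ref in refs:
            for gram, count in Counter(ngrams(ref, n)).items():
                max_ref[gram] = max(max_ref[gram], count)
        clipped = sum(min(count, max_ref[gram]) for gram, count in cand_counts.items())
        if clipped == 0:
            return 0.0  # unsmoothed BLEU is zero if any P_n is zero
        log_sum += (1.0 / max_n) * math.log(clipped / total)  # w_n * log P_n
    # Brevity penalty against the reference length closest to the candidate length.
    c = len(cand)
    r = min((len(ref) for ref in refs), key=lambda length: (abs(length - c), length))
    bp = 1.0 if c > r else math.exp(1 - r / c)
    return bp * math.exp(log_sum)

print(bleu("the cat sat on the mat", ["the cat sat on the mat"]))  # 1.0
print(bleu("the cat sat on", ["the cat sat on the mat"]))          # exp(-0.5) ≈ 0.607
```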

ROUGE (Recall-Oriented Understudy for Gisting Evaluation)

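ROUGE measures the overlap of n-grams, word sequences, and word pairs between the generated and reference responses, considering both recall and precision. The most common variant, ROUGE-N, measures the overlap of n-grams between the generated and reference responses. The formula for ROUGE-N is:

$$\text{ROUGE-N} = \frac{\sum_{S \in \text{References}} \sum_{\text{gram}_n \in S} \text{Count}_{\text{match}}(\text{gram}_n)}{\sum_{S \in \text{References}} \sum_{\text{gram}_n \in S} \text{Count}(\text{gram}_n)}$$

Here $\text{Count}_{\text{match}}(\text{gram}_n)$ is the maximum number of times an n-gram co-occurs in the generated response and a reference, so ROUGE-N is the fraction of reference n-grams recovered by the generated response.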

In practice, ROUGE is usually reported as precision, recall, and F1, providing a balanced measure of how much relevant content from the reference is present in the generated response.
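A minimal ROUGE-N sketch for a single reference, assuming lowercase whitespace tokenization. Recall follows the ROUGE-N formula (matched n-grams over reference n-grams); precision and F1 are the counterparts computed over the generated response's n-grams. Packages such as `rouge-score` additionally handle stemming and multiple references.

```python
from collections import Counter

def rouge_n(candidate: str, reference: str, n: int = 2) -> dict[str, float]:
    def grams(text: str) -> Counter:
        toks = text.lower().split()
        return Counter(tuple(toks[i:i + n]) for i in range(len(toks) - n + 1))

    cand, ref = grams(candidate), grams(reference)
    overlap = sum((cand & ref).values())  # Counter intersection keeps the min count per n-gram
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}

# Bigrams shared: "the cat", "on the", "the mat" -> 3 of 5 reference bigrams.
print(rouge_n("the cat sat on the mat", "the cat is on the mat", n=2))
```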

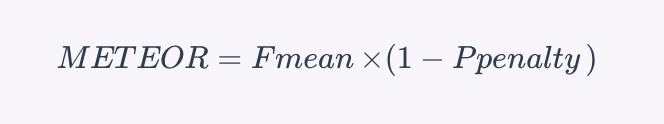

METEOR (Metric for Evaluation of Translation with Explicit ORdering)

METEOR considers synonymy, stemming, and word order to evaluate the similarity between the generated response and the reference responses. The formula for the METEOR score is:

$$\text{METEOR} = F_{\text{mean}} \cdot (1 - \text{Penalty})$$

Where $F_{\text{mean}}$ is a recall-weighted harmonic mean of unigram precision and recall, and $\text{Penalty}$ penalizes matches that are fragmented or out of order. METEOR offers a more nuanced assessment than BLEU or ROUGE by considering synonyms and stemming.
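A simplified METEOR sketch that matches exact tokens only. It uses the original METEOR formulation, $F_{\text{mean}} = 10PR/(R + 9P)$ and $\text{Penalty} = 0.5 \cdot (\text{chunks}/\text{matches})^3$, but unlike the real metric it does no stemming or synonym matching and aligns tokens greedily rather than choosing the alignment with the fewest crossings. The function name is hypothetical; an established implementation (for example, the one in NLTK) is the safer choice for real evaluations.

```python
def meteor_exact(candidate: str, reference: str) -> float:
    hyp = candidate.lower().split()
    ref = reference.lower().split()
    used = [False] * len(ref)
    alignment = []  # (candidate index, reference index) pairs, in candidate order
    for i, tok in enumerate(hyp):
        for j, rtok in enumerate(ref):
            if not used[j] and rtok == tok:  # greedy: leftmost unused exact match
                used[j] = True
                alignment.append((i, j))
                break
    m = len(alignment)
    if m == 0:
        return 0.0
    precision = m / len(hyp)
    recall = m / len(ref)
    f_mean = 10 * precision * recall / (recall + 9 * precision)
    # A chunk is a run of matches that are adjacent in both candidate and reference.
    chunks = 1
    for (i1, j1), (i2, j2) in zip(alignment, alignment[1:]):
        if not (i2 == i1 + 1 and j2 == j1 + 1):
            chunks += 1
    penalty = 0.5 * (chunks / m) ** 3
    return f_mean * (1 - penalty)

reference = "the cat sat on the mat"
print(meteor_exact("the cat sat on the mat", reference))  # ≈ 0.998 (one chunk)
print(meteor_exact("the mat sat on the cat", reference))  # ≈ 0.852 (four chunks)
```

The second candidate contains exactly the same words, so precision and recall are identical; only the fragmentation penalty lowers its score.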

Embedding-Based Evaluation

This method uses vector representations of text (embeddings) to measure the semantic similarity between the generated response and the reference responses. Measures such as cosine similarity are used to compare the embeddings, providing an evaluation based on the meaning of the words rather than on exact matches.
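A minimal sketch of the comparison step. The vectors below are made-up 3-dimensional stand-ins for real sentence embeddings (a real setup would obtain them from an embedding model), and taking the best match over several references is one possible design choice; averaging over references is another.

```python
import math

def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either vector is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

def embedding_relevance(response_vec: list[float], reference_vecs: list[list[float]]) -> float:
    """Best cosine similarity between the response embedding and any reference embedding."""
    return max(cosine_similarity(response_vec, ref) for ref in reference_vecs)

# Made-up vectors: the response is close to the first reference, far from the second.
response_vec = [0.9, 0.1, 0.3]
reference_vecs = [[0.8, 0.2, 0.4], [0.1, 0.9, 0.0]]
score = embedding_relevance(response_vec, reference_vecs)
print(round(score, 3))
```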

Tips and Tricks To Optimize RAG Systems

There are some general tips and tricks you can use to optimize your RAG systems:

Use Re-Ranking Techniques

Re-ranking is one of the most widely used techniques for optimizing the performance of a RAG system. It takes the initial set of retrieved documents and re-orders them so that the most relevant ones come first. Techniques like cross-encoders and BERT-based re-rankers assess document relevance more accurately than the initial similarity search. This ensures that the documents passed to the generator are contextually rich and highly relevant, leading to better responses.
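The re-ranking step can be sketched as "re-score each (query, document) pair with a more accurate but slower scorer, then keep the best few". In the sketch below, `rerank` and `toy_overlap_score` are hypothetical names, and the word-overlap scorer is only a stand-in so the example runs without a model; in practice `score_fn` would wrap a cross-encoder or BERT-based re-ranker (for example, the `predict` method of a sentence-transformers `CrossEncoder`).

```python
from typing import Callable

def rerank(query: str, docs: list[str], score_fn: Callable[[str, str], float],
           top_n: int = 3) -> list[str]:
    """Re-score (query, doc) pairs and return the top_n documents by score."""
    scored = [(score_fn(query, doc), doc) for doc in docs]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep original order
    return [doc for _, doc in scored[:top_n]]

def toy_overlap_score(query: str, doc: str) -> float:
    """Hypothetical stand-in scorer: fraction of query words that appear in the doc."""
    q = set(query.lower().split())
    d = set(doc.lower().split())
    return len(q & d) / len(q) if q else 0.0

candidates = ["router buying guide", "password manager tips", "how to reset a router password"]
print(rerank("reset router password", candidates, toy_overlap_score, top_n=2))
# ['how to reset a router password', 'router buying guide']
```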

Tune Hyperparameters

Regularly tuning hyperparameters like chunk size, overlap, and the number of top retrieved documents can improve the performance of the retrieval component. Experimenting with different settings and measuring their impact on retrieval quality can lead to better overall performance of the RAG system.
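One simple way to run these experiments is an exhaustive grid search. In this sketch, `evaluate` is a hypothetical callback you would supply: it might re-chunk and re-index the corpus with the given settings and return a retrieval metric such as mean recall or MAP on a labeled query set. The toy `evaluate` below exists only so the example runs.

```python
from itertools import product

def grid_search(evaluate, chunk_sizes, overlaps, top_ks):
    """Try every (chunk_size, overlap, top_k) combination and keep the best-scoring one."""
    best_score, best_params = float("-inf"), None
    for chunk_size, overlap, top_k in product(chunk_sizes, overlaps, top_ks):
        if overlap >= chunk_size:
            continue  # an overlap as large as the chunk makes no sense
        score = evaluate(chunk_size=chunk_size, overlap=overlap, top_k=top_k)
        if score > best_score:
            best_score = score
            best_params = {"chunk_size": chunk_size, "overlap": overlap, "top_k": top_k}
    return best_params, best_score

# Hypothetical scoring function standing in for a real retrieval evaluation.
def toy_evaluate(chunk_size, overlap, top_k):
    return -abs(chunk_size - 512) / 512 - abs(overlap - 50) / 100 + 0.01 * top_k

params, score = grid_search(toy_evaluate, [256, 512, 1024], [0, 50, 100], [3, 5])
print(params)  # {'chunk_size': 512, 'overlap': 50, 'top_k': 5}
```

Because each evaluation may require re-indexing the corpus, large grids get expensive quickly; random search or tuning one parameter at a time are common cheaper alternatives.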

Embedding Models

Selecting an appropriate embedding model is crucial for optimizing a RAG system’s retrieval component. The right model, whether general-purpose or domain-specific, can significantly improve the system’s ability to represent and retrieve relevant information accurately. Choosing a model that aligns with your specific use case can improve the precision of similarity searches and the overall performance of your RAG system. Consider factors such as the model’s training data, dimensionality, and benchmark performance when making your selection.

Chunking Methods

Customizing chunk sizes and overlaps can greatly improve the performance of RAG systems by capturing more relevant information for the LLM. For example, LangChain’s semantic chunking splits documents based on meaning, so that each chunk is contextually coherent. Adaptive chunking techniques that vary by document type (such as PDFs, tables, and images) can help retain more contextually appropriate information.
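For reference, the baseline that these smarter strategies improve on is fixed-size chunking with overlap. The sketch below splits on whitespace and counts words; it is not LangChain’s semantic chunker, and the function name and default sizes are assumptions for illustration. Real pipelines often count tokens rather than words.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into windows of chunk_size words, each sharing `overlap` words with the previous one."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks

text = " ".join(str(i) for i in range(10))
print(chunk_words(text, chunk_size=4, overlap=1))  # ['0 1 2 3', '3 4 5 6', '6 7 8 9']
```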

Role of Vector Databases in RAG Systems

Vector databases are integral to the performance of RAG systems. When a user submits a query, the RAG system’s retriever uses the vector database to find the most relevant documents based on vector similarity. This process is crucial for giving the language model the right context to generate accurate and relevant responses. A robust vector database ensures fast and precise retrieval, directly influencing the overall effectiveness and responsiveness of the RAG system.

Conclusion

Developing a RAG system is not inherently difficult, but evaluating it is crucial for measuring performance, enabling continuous improvement, aligning with business objectives, balancing costs, ensuring reliability, and adapting to new methods. This comprehensive evaluation process helps in building a robust, efficient, and user-centric RAG system.

By addressing these critical aspects, vector databases serve as the foundation for high-performing RAG systems, enabling them to deliver accurate, relevant, and timely responses while efficiently managing large-scale, complex data.