Large Language Models

Large language models (LLMs) represent a significant advancement in Artificial Intelligence, particularly in the field of Natural Language Processing. These models are designed to understand, generate, and interact with natural language in a way that closely mimics human communication. Trained on vast datasets comprising text from diverse sources, LLMs learn to recognize patterns, context, and nuance in language, which enables them to perform tasks such as translation, summarization, question answering, and creative writing. Their ability to generate coherent and contextually relevant text makes them valuable tools in applications such as customer service, content creation, and educational assistance.

General-purpose LLMs are powerful and impressive, but they come with drawbacks: their outputs and output formatting can vary from run to run (non-determinism), and they carry high resource and financial costs. Smaller, task-specific LLMs distilled from larger, general-purpose LLMs can be an attractive option for many tasks.

Cost/Quality Trade-Off

While large, powerful LLMs offer unparalleled capabilities in tasks that demand deep language understanding and generation, their size and complexity pose significant challenges for real-world deployment, particularly in scenarios that require low latency and cost-effectiveness.

High Latency

- Real-time interactions: LLMs often operate with high latency, meaning there is a noticeable delay between input and output. This can be detrimental in applications that require real-time responses.

- User experience: High latency can frustrate users, leading to user churn and reduced engagement.

- Limited scalability: Serving a large number of users while maintaining low latency can be challenging.
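To make the latency point concrete, here is a rough back-of-the-envelope model. It assumes autoregressive decoding, where total latency is the time to the first token plus a roughly constant time for each additional output token. The function name and all timing numbers are hypothetical illustrations, not measurements of any particular model.

```python
def estimate_latency_ms(ttft_ms: float, per_token_ms: float, output_tokens: int) -> float:
    """Rough end-to-end latency of one autoregressive generation request.

    Hypothetical model: the first token arrives after `ttft_ms`
    (time to first token), and every later token adds `per_token_ms`.
    """
    if output_tokens < 1:
        raise ValueError("output_tokens must be at least 1")
    return ttft_ms + per_token_ms * (output_tokens - 1)


# Illustrative numbers only; real values depend on hardware, batching and load.
large_ms = estimate_latency_ms(ttft_ms=500, per_token_ms=40, output_tokens=200)
small_ms = estimate_latency_ms(ttft_ms=100, per_token_ms=10, output_tokens=200)
print(f"large model: {large_ms:.0f} ms, small model: {small_ms:.0f} ms")
# prints: large model: 8460 ms, small model: 2090 ms
```

For long outputs the per-token term dominates, so a model that decodes each token faster cuts end-to-end latency roughly in proportion.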

Inference Cost

- Computational resources: Running inference on an LLM, especially a large one, requires significant computational power.

- Cost per request: The cost of using an LLM varies with the model size, the complexity of the task, and the number of requests. For real-time applications with high request volumes, these costs can escalate quickly.

Smaller, more efficient LLMs tailored to specific tasks can be a cost-effective alternative that still achieves acceptable performance.
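How quickly costs escalate can be estimated with simple arithmetic. The sketch below assumes per-token pricing (a common billing model for hosted LLMs) and compares a large model against a smaller one; the prices, token counts, and request volume are hypothetical placeholders, not quotes from any real provider.

```python
def monthly_cost_usd(requests_per_day: int, input_tokens: int, output_tokens: int,
                     usd_per_1k_input: float, usd_per_1k_output: float,
                     days: int = 30) -> float:
    """Estimated monthly spend under hypothetical per-1K-token pricing."""
    per_request = ((input_tokens / 1000) * usd_per_1k_input
                   + (output_tokens / 1000) * usd_per_1k_output)
    return per_request * requests_per_day * days


# Hypothetical workload: 100,000 requests/day, 1,000 input and 200 output tokens each.
workload = dict(requests_per_day=100_000, input_tokens=1_000, output_tokens=200)

# Hypothetical prices for a large general-purpose model and a small task-specific one.
large = monthly_cost_usd(**workload, usd_per_1k_input=0.01, usd_per_1k_output=0.03)
small = monthly_cost_usd(**workload, usd_per_1k_input=0.0005, usd_per_1k_output=0.0015)
print(f"large: ${large:,.0f}/month, small: ${small:,.0f}/month")
# prints: large: $48,000/month, small: $2,400/month
```

Because cost scales linearly with request volume, the per-request price gap between the two models carries straight through to the monthly bill.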

Distilling Task-Specific Large Language Models

Distillation [1] involves generating a large amount of data from a large, expensive LLM (called the teacher model) and training a smaller, more efficient LLM (called the student model) on that data, so that the student achieves quality comparable to the teacher's.

This works especially well when the LLM is deployed to perform a single task (e.g., summarizing text, or extracting key pieces of information from large amounts of text).
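At a high level, the process reduces to two steps: label task inputs with the teacher, then fine-tune the student on the resulting pairs. The skeleton below shows that shape. `teacher` and `train_student` are hypothetical callables standing in for a real LLM call and a real fine-tuning job, and the stubs in the usage example are toys, not models.

```python
from typing import Any, Callable, Iterable, List, Tuple

Pair = Tuple[str, str]  # (task input, teacher output)


def build_distillation_dataset(inputs: Iterable[str],
                               teacher: Callable[[str], str]) -> List[Pair]:
    """Label every task input with the (expensive) teacher's output."""
    return [(text, teacher(text)) for text in inputs]


def distill(inputs: Iterable[str],
            teacher: Callable[[str], str],
            train_student: Callable[[List[Pair]], Any]) -> Any:
    """Generate teacher-labelled data once, then train the student on it."""
    pairs = build_distillation_dataset(inputs, teacher)
    return train_student(pairs)


# Toy stand-ins so the sketch runs end to end (hypothetical, not real models).
def fake_teacher(text: str) -> str:
    # "Summarize" by keeping only the first sentence.
    return text.split(". ")[0].rstrip(".") + "."


def fake_train_student(pairs: List[Pair]) -> dict:
    # A real implementation would fine-tune a small LLM; this just reports what it saw.
    return {"num_examples": len(pairs), "first_pair": pairs[0]}


docs = ["Cats sleep a lot. They also purr.",
        "Distillation shrinks models. It keeps task quality."]
student = distill(docs, fake_teacher, fake_train_student)
print(student["num_examples"])  # 2
```

Keeping the teacher and the trainer behind plain callables makes it easy to swap in a different teacher LLM or fine-tuning backend without touching the pipeline.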

Generating Training Data

Training a high-quality student model typically requires thousands to millions of examples. Only the inputs need to be collected up front, because the teacher LLM generates the corresponding outputs. For example, for a document summarization task, we only need the input documents.

Part 1: Training Inputs

The strategy for generating training inputs depends on whether you already have a source of inputs or are starting from scratch:

Existing System as Input Source: If there is an existing system for which you are building this model, the inputs can be extracted from that system.

Existing System as Input Source

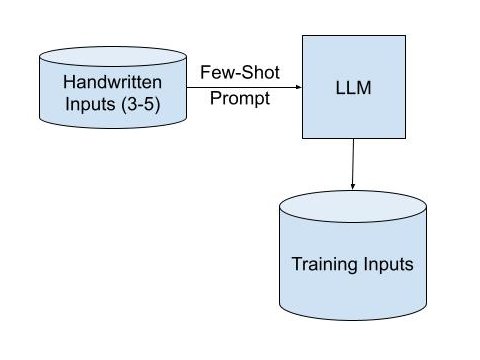
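As an illustration, suppose the existing system writes one JSON object per request to a log, with the raw input under an `"input"` key. That log format, the field name, and the `min_chars` threshold are assumptions made for this sketch. The function collects inputs while dropping malformed lines, trivially short inputs, and exact duplicates.

```python
import json
from typing import Iterable, List


def extract_inputs(log_lines: Iterable[str], field: str = "input",
                   min_chars: int = 20) -> List[str]:
    """Pull task inputs out of (hypothetical) JSON-lines request logs."""
    seen = set()
    inputs = []
    for line in log_lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed log lines
        text = record.get(field) if isinstance(record, dict) else None
        if not isinstance(text, str):
            continue  # no usable input in this record
        text = " ".join(text.split())  # normalize whitespace
        if len(text) < min_chars or text in seen:
            continue  # drop trivially short inputs and exact duplicates
        seen.add(text)
        inputs.append(text)
    return inputs


logs = [
    '{"input": "Quarterly revenue grew 12% driven by cloud sales."}',
    '{"input": "Quarterly revenue grew 12%   driven by cloud sales."}',  # duplicate once normalized
    '{"input": "ok"}',
    'not json',
    '{"other": 1}',
]
print(extract_inputs(logs))
```

In a real pipeline the lines would come from an open file handle rather than a list, since the function accepts any iterable of strings.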

LLMs to Generate Inputs: If there is no large-scale source of high-quality inputs, few-shot prompting a large, powerful LLM with a small number (3-5) of examples can yield a large volume of training inputs. Note that this is a one-time expense for creating training data, not a recurring expense over the lifetime of the task-specific LLM you are deploying.

LLMs as Input Source
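A minimal sketch of the few-shot approach: build a prompt from a random subset of a handful of hand-written seed examples and ask the large LLM to continue with a new one. Sampling a different subset on each call is one simple way to push the generated inputs toward variety. The prompt wording, the seed emails, and the function name are hypothetical, and the call to the large LLM itself is omitted because it depends on the provider.

```python
import random
from typing import List, Optional


def build_input_generation_prompt(task_description: str,
                                  seed_examples: List[str],
                                  k: int = 3,
                                  rng: Optional[random.Random] = None) -> str:
    """Few-shot prompt asking an LLM to write one more input like the seeds."""
    rng = rng or random.Random()
    # Use a different random subset of seeds per call to encourage variety.
    shots = rng.sample(seed_examples, k=min(k, len(seed_examples)))
    lines = [f"Below are examples of {task_description}. "
             "Write one new, realistic example that differs from all of them.", ""]
    for i, example in enumerate(shots, start=1):
        lines += [f"Example {i}:", example, ""]
    lines.append(f"Example {len(shots) + 1}:")  # the LLM continues from here
    return "\n".join(lines)


seeds = [
    "Email: Hi team, the Q3 report is attached. Revenue rose 8%.",
    "Email: Reminder that the office is closed on Friday.",
    "Email: Can we move tomorrow's sync to 3pm? I have a conflict.",
    "Email: The client approved the budget; please start onboarding.",
    "Email: Server maintenance tonight from 10pm to midnight.",
]
prompt = build_input_generation_prompt("internal work emails to summarize", seeds,
                                       k=3, rng=random.Random(0))
print(prompt)
```

Passing a seeded `random.Random` makes prompt construction reproducible; in production you would typically let it vary.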

With either method, aim for a broad set of natural-sounding inputs that reflect what the deployed LLM will encounter in the real-world application. LLMs are very good at picking up patterns, so repetitive, templated, or artificial training inputs risk teaching the student to handle only those patterns.
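One way to catch repetitive or templated inputs is to drop near-duplicates before they are labeled. The sketch below, an illustrative choice rather than a prescribed step, compares word trigrams with Jaccard similarity; the 0.7 threshold is an assumption to tune per task. It compares every pair of inputs, so for very large input sets an approximate method such as MinHash would be a better fit.

```python
import re
from typing import List, Set, Tuple

Shingle = Tuple[str, ...]


def shingles(text: str, n: int = 3) -> Set[Shingle]:
    """Set of lowercase word n-grams (the whole text if it is shorter than n words)."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < n:
        return {tuple(words)}
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: Set[Shingle], b: Set[Shingle]) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def drop_near_duplicates(inputs: List[str], threshold: float = 0.7,
                         n: int = 3) -> List[str]:
    """Keep an input only if it is not too similar to any input already kept."""
    kept: List[str] = []
    kept_shingles: List[Set[Shingle]] = []
    for text in inputs:
        s = shingles(text, n)
        if any(jaccard(s, other) >= threshold for other in kept_shingles):
            continue
        kept.append(text)
        kept_shingles.append(s)
    return kept


inputs = [
    "Please summarize the attached quarterly earnings report for me.",
    "Please summarize the attached quarterly earnings report for me today.",
    "Extract all invoice numbers from this email thread.",
]
print(drop_near_duplicates(inputs))  # the second input is dropped
```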

Part 2: Training Outputs

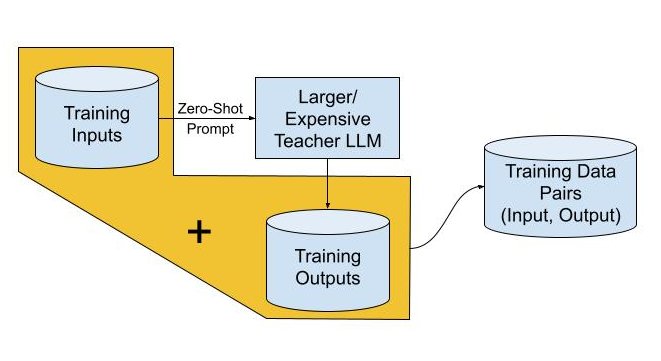

The highest-quality (i.e., large, expensive) LLM available can be used to generate high-quality outputs for each of the training inputs. The best LLMs have strong zero-shot performance and follow instructions well, so the task can be expressed as instructions (e.g., “Summarize the text below. Avoid complex sentences in order to be readable in a hurry.”).
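Labeling then amounts to wrapping each input in the instruction and calling the teacher. In this sketch `teacher_generate` is a hypothetical callable (a prompt goes in, generated text comes out) standing in for whichever LLM API is used, and the prompt template around the example instruction is an assumption. Pairs with empty outputs are dropped, since they would only teach the student to produce nothing.

```python
from typing import Callable, Iterable, List, Tuple

INSTRUCTION = ("Summarize the text below. "
               "Avoid complex sentences in order to be readable in a hurry.")


def format_teacher_prompt(text: str, instruction: str = INSTRUCTION) -> str:
    """Wrap one task input in a zero-shot instruction (hypothetical template)."""
    return f"{instruction}\n\nText:\n{text}\n\nSummary:"


def label_with_teacher(inputs: Iterable[str],
                       teacher_generate: Callable[[str], str],
                       instruction: str = INSTRUCTION) -> List[Tuple[str, str]]:
    """Return (prompt, teacher output) pairs, skipping empty generations."""
    pairs = []
    for text in inputs:
        prompt = format_teacher_prompt(text, instruction)
        output = teacher_generate(prompt).strip()
        if output:  # an empty output would teach the student to say nothing
            pairs.append((prompt, output))
    return pairs


# Usage with a stub in place of a real teacher LLM call.
pairs = label_with_teacher(["The meeting moved to Tuesday because the room was booked."],
                           teacher_generate=lambda prompt: "  Meeting moved to Tuesday. ")
print(pairs[0][1])  # Meeting moved to Tuesday.
```

Each pair keeps the full instruction prompt as the input side; for a single-task student, storing only the raw input is an equally reasonable choice, as long as training and serving use the same format.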

Distillation Data Generation

The resulting input-output pairs, ideally numbering in the thousands to millions, form the training dataset for distillation into the student LLM.
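Before training, it can help to hold out a small validation split, so the student can later be checked against teacher outputs it never saw, and to serialize the pairs in whatever format the fine-tuning tool expects. The sketch assumes a JSON Lines file with `prompt`/`completion` fields; that naming is a common convention but an assumption here, so adapt it to your training framework.

```python
import json
import random
from typing import List, Sequence, Tuple

Pair = Tuple[str, str]  # (input prompt, teacher output)


def train_val_split(pairs: Sequence[Pair], val_fraction: float = 0.05,
                    seed: int = 0) -> Tuple[List[Pair], List[Pair]]:
    """Shuffle deterministically and hold out a fraction for validation."""
    shuffled = list(pairs)
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, int(len(shuffled) * val_fraction)) if len(shuffled) > 1 else 0
    return shuffled[n_val:], shuffled[:n_val]


def to_jsonl(pairs: Sequence[Pair]) -> str:
    """One JSON object per line, using hypothetical prompt/completion field names."""
    return "\n".join(json.dumps({"prompt": p, "completion": c}) for p, c in pairs)


pairs = [(f"Summarize document {i}", f"Summary {i}") for i in range(20)]
train, val = train_val_split(pairs, val_fraction=0.1)
print(len(train), len(val))  # 18 2
print(to_jsonl(val[:1]))
```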

Distillation Into Smaller, More Efficient LLMs

The student model is trained to mimic the teacher’s outputs for the given inputs. Various loss functions can be used to measure the difference between the teacher’s outputs and the student’s outputs.
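Two common choices are shown here for a single output position: (1) cross-entropy against the token the teacher actually generated (hard labels), which is what fine-tuning on teacher text amounts to, and (2) KL divergence between temperature-softened teacher and student distributions (soft labels), the classic knowledge-distillation loss. The soft-label variant needs the teacher’s logits, which are often unavailable when the teacher is only reachable through a text-generation API. This is a plain-Python illustration, not how a training framework would compute it.

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable log-probabilities from raw logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max to avoid overflow in exp
    log_total = m + math.log(sum(math.exp(z - m) for z in scaled))
    return [z - log_total for z in scaled]


def hard_label_loss(student_logits: Sequence[float], teacher_token: int) -> float:
    """Cross-entropy of the student against the token the teacher generated."""
    return -log_softmax(student_logits)[teacher_token]


def soft_label_loss(student_logits: Sequence[float], teacher_logits: Sequence[float],
                    temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened distributions.

    Multiplied by T^2, as in the classic knowledge-distillation formulation,
    so its gradient scale stays comparable across temperatures.
    """
    lp = log_softmax(teacher_logits, temperature)
    lq = log_softmax(student_logits, temperature)
    kl = sum(math.exp(a) * (a - b) for a, b in zip(lp, lq))
    return kl * temperature ** 2


student_logits = [2.0, 0.5, -1.0]  # made-up logits over a 3-token vocabulary
teacher_logits = [3.0, 0.0, -2.0]
print(hard_label_loss(student_logits, teacher_token=0))
print(soft_label_loss(student_logits, teacher_logits))
```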

Training typically involves Supervised Fine-Tuning (SFT) of the student model on the input prompts and the teacher’s output text, i.e., the pairs generated above. The student updates its weights to minimize the difference between its own predictions and the teacher’s outputs, usually measured as the cross-entropy loss on the teacher’s output tokens.
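In practice, SFT usually computes the loss only on the teacher’s output tokens, not on the prompt tokens, so the student learns to produce answers rather than to reproduce prompts. The sketch below builds one training example that way. The token IDs are made up, and the `-100` ignore value mirrors the default `ignore_index` of PyTorch’s `CrossEntropyLoss`; the one-position shift between inputs and next-token targets is assumed to happen inside the model or loss, as in many causal-LM training setups.

```python
from typing import List, Sequence, Tuple

IGNORE_INDEX = -100  # default ignore_index of torch.nn.CrossEntropyLoss


def build_sft_example(prompt_ids: Sequence[int], completion_ids: Sequence[int],
                      eos_id: int) -> Tuple[List[int], List[int]]:
    """Concatenate prompt and teacher completion; mask the prompt out of the loss."""
    input_ids = list(prompt_ids) + list(completion_ids) + [eos_id]
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(completion_ids) + [eos_id]
    return input_ids, labels


def masked_mean_nll(label_logprobs: Sequence[float], labels: Sequence[int]) -> float:
    """Mean negative log-likelihood over the positions that are not masked.

    label_logprobs[i] is the student's log-probability of labels[i]
    (hypothetical input; a real trainer derives it from the model's logits).
    """
    kept = [-lp for lp, y in zip(label_logprobs, labels) if y != IGNORE_INDEX]
    if not kept:
        raise ValueError("every position is masked")
    return sum(kept) / len(kept)


input_ids, labels = build_sft_example([101, 7592, 2088], [3231, 2003], eos_id=102)
print(labels)  # [-100, -100, -100, 3231, 2003, 102]
```

Appending an end-of-sequence token to the completion teaches the student when to stop, which keeps its outputs as concise as the teacher’s.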

Advantages of Distilled Student LLMs

- Cheaper serving and inference: The most significant advantage of a distilled student LLM is its reduced size. This translates to lower computational demands and therefore lower costs for deploying and running the model.

- Lower latency: Smaller models require less computation per generated token, so they generally produce responses faster.

- Comparable quality to the teacher LLM: The key to successful distillation lies in the large volume of training data derived from the powerful “teacher” LLM. The student model effectively learns from the teacher’s knowledge, allowing it to achieve comparable performance on the specific tasks it was trained for.
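A rough way to see why size drives both cost and latency: a common rule of thumb puts the forward pass of a dense transformer at about 2 FLOPs per parameter per generated token, and the weights alone occupy roughly the parameter count times the bytes per parameter (2 bytes in 16-bit precision). Token-by-token decoding is often limited by how fast those weights can be read from memory, so both numbers matter. The model sizes below are hypothetical.

```python
def forward_flops_per_token(num_params: float) -> float:
    """Rule-of-thumb forward-pass cost of a dense transformer (ignores attention overhead)."""
    return 2.0 * num_params


def weight_memory_gb(num_params: float, bytes_per_param: float = 2.0) -> float:
    """Memory for the weights alone, e.g. 2 bytes/param for fp16 or bf16."""
    return num_params * bytes_per_param / 1e9


teacher_params = 70e9  # hypothetical teacher size
student_params = 7e9   # hypothetical student size

flops_ratio = forward_flops_per_token(teacher_params) / forward_flops_per_token(student_params)
print(f"~{flops_ratio:.0f}x fewer FLOPs per token for the student")
print(f"weights: teacher ~{weight_memory_gb(teacher_params):.0f} GB, "
      f"student ~{weight_memory_gb(student_params):.0f} GB")
```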

Disadvantages of Distilled Student LLMs

- Task-specific nature: Distilled models are often highly specialized for the task they were trained on. They may excel at that task but fall short in other areas, which limits their versatility.

- Lost general-purpose abilities: Fine-tuning focuses on maximizing performance on the designated task, often at the expense of broader knowledge and adaptability.

Conclusions

Distillation is a powerful technique for making Large Language Models efficient and performant, which can be a deciding factor when deploying LLMs for specific tasks. However, it is important to be mindful of the limitations of distilled models, particularly their task-specific nature and the potential loss of general-purpose abilities.

References

[1] Distillation Paper