In today's data-driven world, keeping your data processing pipelines up to date is essential for maintaining efficiency and leveraging new features. Upgrading Spark versions can be a daunting task, but with the right tools and strategies, it can be streamlined and automated.

Upgrading Spark pipelines is essential for leveraging the latest features and improvements. The upgrade process not only ensures compatibility with newer versions but also aligns with the principles of modern data architectures like the Open Data Lakehouse (Apache Iceberg). In this guide, we'll discuss the strategic importance of Spark code upgrades and introduce a toolkit designed to streamline this process.

Toolkit Overview

Project Details

For our demonstration, we used a sample project named spark-refactor-demo, which covers upgrades from Spark 2.4.8 to 3.3.1. The project is written in Scala and uses sbt and Gradle for builds. Key files to note include build.sbt, plugins.sbt, gradle.properties, and build.gradle.

Introducing Scalafix

What Is Scalafix?

Scalafix is a refactoring and linting tool for Scala, particularly useful for projects undergoing version upgrades. It helps automate the migration of code to newer versions, keeping the codebase modern, efficient, and compatible with new features and improvements.

Features and Use Cases

Scalafix offers numerous benefits:

- Version upgrades: Updates deprecated syntax and APIs

- Coding standards enforcement: Ensures a consistent coding style

- Code quality assurance: Identifies and fixes common issues and anti-patterns

- Large-scale refactoring: Applies uniform transformations across the codebase

- Custom rule creation: Allows users to define specific rules tailored to their needs

- Automated code refactoring: Rewrites Scala-based Spark code based on custom rules

- Linting: Checks code for potential issues and ensures adherence to coding standards

Creating Custom Scalafix Rules

- Custom rules can be defined to target specific codebases or repositories.

- The rules are written in Scala and can be integrated into the project's build process.

package examplefix

import scala.meta._
import scalafix.v1._

// Flags wildcard imports and rewrites them to a fixed import.
class MyCustomRule extends SyntacticRule("MyCustomRule") {
  override def fix(implicit doc: SyntacticDocument): Patch = {
    doc.tree.collect {
      case t @ Importer(_, importees) if importees.exists(_.is[Importee.Wildcard]) =>
        // An Importer covers only the part after the `import` keyword,
        // so the replacement text must not repeat the keyword.
        Patch.replaceTree(t, "mypackage._") + Patch.lint(Diagnostic("Rule", "Avoid wildcard imports", t.pos))
    }.asPatch
  }
}

Best Practices for Scalafix Rule Development

- Align the Scala binary version with your build.

- Use the scalafixDependencies setting key for any external Scalafix rule.

// build.sbt for a single-project build
libraryDependencies +=
  "ch.epfl.scala" %% "scalafix-core" % _root_.scalafix.sbt.BuildInfo.scalafixVersion % ScalafixConfig

// Command to run Scalafix with your custom rule
sbt "scalafix MyCustomRule"

Upgrade and Refactoring Process

Step-by-Step Guide

- Define versions: Set the initial and target versions of Spark.
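The version settings for this step use POSIX default-value expansion, `${VAR:-default}`, which falls back to the default only when the variable is unset or empty. A minimal sketch of that behavior follows; the override value 3.4.0 is an arbitrary example, not a version used by the demo project:

```shell
#!/bin/sh
# Sketch: how ${VAR:-default} resolves the Spark versions.

# Nothing supplied by the caller: the fallback is used.
unset INITIAL_VERSION
INITIAL_VERSION=${INITIAL_VERSION:-2.4.8}

# A value supplied beforehand (3.4.0 is only an example) wins over the fallback.
TARGET_VERSION=3.4.0
TARGET_VERSION=${TARGET_VERSION:-3.3.1}

# prints: Upgrading Spark 2.4.8 -> 3.4.0
echo "Upgrading Spark $INITIAL_VERSION -> $TARGET_VERSION"
```

This lets the same script be reused for another version pair by exporting the two variables before running it.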

INITIAL_VERSION=${INITIAL_VERSION:-2.4.8}
TARGET_VERSION=${TARGET_VERSION:-3.3.1}

- Build existing project: Clean, compile, test, and package the current project using sbt.

sbt clean compile test package

- Add Scalafix dependencies: Update build.sbt and plugins.sbt to include Scalafix dependencies.
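One way to script this step is to append each setting only when it is not already present, so the script can be re-run safely. The sketch below is an assumption about how that could look, not the demo project's actual script: `append_once` and `PROJECT_DIR` are hypothetical names, the sbt-scalafix plugin version is a placeholder, and the two build.sbt settings are copied from the final build.sbt shown later in this guide.

```shell
#!/bin/sh
set -eu

# append_once is a hypothetical helper: add a line to a file unless an
# identical line is already there.
append_once() {
  file=$1
  line=$2
  mkdir -p "$(dirname "$file")"
  touch "$file"
  # -x whole-line match, -F fixed string (no regex), -q quiet
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Uses a scratch directory by default; set PROJECT_DIR to a real project root.
PROJECT_DIR=${PROJECT_DIR:-$(mktemp -d)}

# The plugin version is a placeholder assumption; pick the release you need.
append_once "$PROJECT_DIR/project/plugins.sbt" \
  'addSbtPlugin("ch.epfl.scala" % "sbt-scalafix" % "0.10.4")'

# Settings taken from the final build.sbt in this guide.
append_once "$PROJECT_DIR/build.sbt" \
  'scalafixDependencies in ThisBuild += "com.holdenkarau" %% "spark-scalafix-rules-3.3.1" % "0.1.9"'
append_once "$PROJECT_DIR/build.sbt" 'semanticdbEnabled in ThisBuild := true'
```

Calling `append_once` again with the same arguments leaves the file unchanged, which avoids duplicate settings when the upgrade script is run more than once.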

cat >> build.sbt > project/plugins.sbt

- Define Scalafix rules: Specify the rules for refactoring in .scalafix.conf.

rules = [
  UnionRewrite,
  AccumulatorUpgrade,
  ScalaTestImportChange,
  ………………………………… more

- Identify potential issues: Define Scalafix warn rules in .scalafix.conf and run them to flag anti-patterns or potential issues.

rules = [
  GroupByKeyWarn,
  MetadataWarnQQ
]

UnionRewrite.deprecatedMethod {
  "unionAll" = "union"
}

OrganizeImports {
  ……………… more

sbt scalafix || (echo "Linter warnings were found"; prompt)

Code Review and Final Build
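The last command of the refactoring steps runs its right-hand side only when Scalafix exits with a non-zero status, which gives the operator a chance to look at the warnings before the script moves on. A minimal sketch of that pattern follows. Here `run_linter` is a stand-in for `sbt scalafix`, and the pause helper `prompt` and the `NO_PROMPT` switch are hypothetical, since the original helper's definition is not shown in this guide:

```shell
#!/bin/sh
# run_linter stands in for `sbt scalafix`; it just returns the status it is given.
run_linter() {
  return "$1"
}

# prompt is a hypothetical helper: wait for the operator unless NO_PROMPT is set.
prompt() {
  if [ -n "${NO_PROMPT:-}" ]; then
    echo "Continuing without waiting"
  else
    printf 'Press Enter to continue... '
    read -r _reply
  fi
}

# gate reaches the warning branch only when the linter fails.
gate() {
  run_linter "$1" || (echo "Linter warnings were found"; prompt)
}

NO_PROMPT=1
gate 0   # linter clean: prints nothing
gate 3   # linter failed: prints the warning, then the prompt message
```

Because the right-hand side ends with a successful command, the overall status is zero and the surrounding script continues after the pause instead of aborting.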

Review Changes

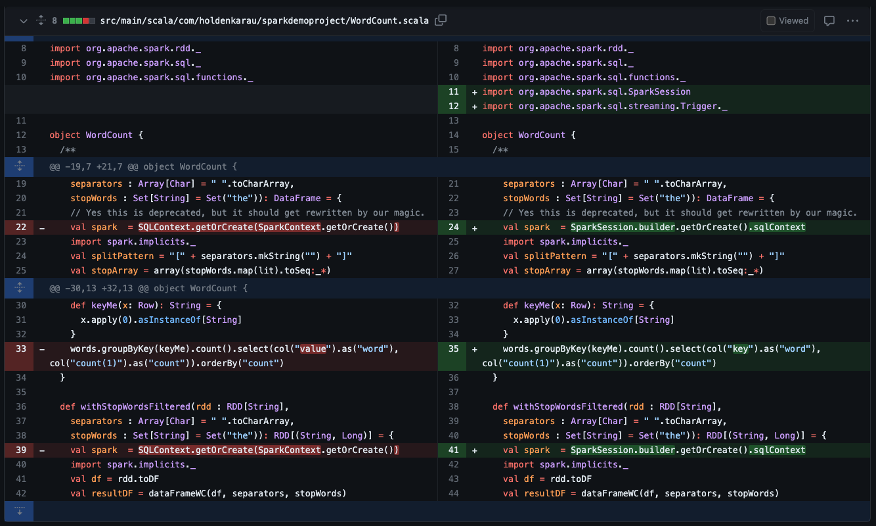

Use tools like Git diff or VS Code to review the changes made by Scalafix. Verify that the refactored code aligns with your expectations and coding standards.

Here are two example PRs from the spark-refactor-demo project:

Update Dependencies for Final Build

Update the library dependencies in build.sbt to reflect the target Spark version, and build the new codebase.

sparkVersion := "3.3.1"

libraryDependencies ++= Seq(
  "org.apache.spark" %% "spark-streaming" % "3.3.1" % "provided",
  "org.apache.spark" %% "spark-sql" % "3.3.1" % "provided",
  "org.scalatest" %% "scalatest" % "3.2.2" % "test",
  "org.scalacheck" %% "scalacheck" % "1.15.2" % "test",
  "com.holdenkarau" %% "spark-testing-base" % "3.3.1_1.3.0" % "test"
)

scalafixDependencies in ThisBuild +=
  "com.holdenkarau" %% "spark-scalafix-rules-3.3.1" % "0.1.9"

semanticdbEnabled in ThisBuild := true
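If build.sbt pins the Spark version as a literal string, the version bump itself can be scripted. The sketch below assumes exactly that; `bump_version` is a hypothetical helper, and a scratch file stands in for the real build.sbt so the example can be run without touching a project:

```shell
#!/bin/sh
set -eu

INITIAL_VERSION=${INITIAL_VERSION:-2.4.8}
TARGET_VERSION=${TARGET_VERSION:-3.3.1}

# bump_version is a hypothetical helper: replace every literal occurrence of
# one version string in a file with another.
bump_version() {
  file=$1 from=$2 to=$3
  # Escape the dots so "2.4.8" cannot match text such as "2x4y8".
  pattern=$(printf '%s' "$from" | sed 's/\./\\./g')
  # sed -i is not portable, so write to a temporary file and move it back.
  sed "s/$pattern/$to/g" "$file" > "$file.tmp"
  mv "$file.tmp" "$file"
}

# Scratch file standing in for build.sbt.
BUILD_FILE=$(mktemp)
cat > "$BUILD_FILE" <<EOF
"org.apache.spark" %% "spark-sql" % "$INITIAL_VERSION" % "provided",
"org.apache.spark" %% "spark-streaming" % "$INITIAL_VERSION" % "provided",
EOF

bump_version "$BUILD_FILE" "$INITIAL_VERSION" "$TARGET_VERSION"
cat "$BUILD_FILE"
```

With the default values, both dependency lines end up pinned to 3.3.1. Versions that do not follow the Spark version one-to-one, such as the suffix on spark-testing-base, still need to be checked by hand.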

Build the new codebase:

sbt clean compile test package

The codebase is now upgraded from Spark 2.4.8 to Spark 3.3.1.

Conclusion

Upgrading Spark pipelines doesn't have to be a difficult task. With tools like Scalafix, you can automate and streamline the process, keeping your codebase modern, efficient, and ready to leverage the latest features of Spark. Follow this guide to make the upgrade smoother and end up with a more robust Spark environment.