Our company uses artificial intelligence (AI) and machine learning to streamline the process of comparing and purchasing car insurance and car loans. As our data grew, we ran into problems with AWS Redshift, which was slow and expensive. Switching to ClickHouse made our queries faster and greatly cut our costs, but it also brought storage challenges such as disk failures and data recovery.

To avoid extensive maintenance, we adopted JuiceFS, a high-performance distributed file system. We use its snapshot feature in an innovative way to implement a primary-replica architecture for ClickHouse. This architecture ensures high availability and data stability while significantly improving system performance and data recovery capabilities. For more than a year, it has run without downtime or replication errors, delivering the expected performance.

In this post, I’ll dive deep into our application challenges, the solution we found, and our future plans. I hope this article provides useful insights for startups and for small teams within large companies.

Data Architecture: From Redshift to ClickHouse

Initially, we chose Redshift for analytical queries. However, as data volume grew, we ran into severe performance and cost challenges. For example, when generating funnel and A/B test reports, we faced loading times of up to tens of minutes. Even on a reasonably sized Redshift cluster, these operations were too slow, which effectively made our data service unavailable.

Therefore, we looked for a faster, more cost-effective solution and chose ClickHouse despite its limitations on real-time updates and deletions. The switch to ClickHouse brought significant benefits:

- Report loading times dropped from tens of minutes to seconds, so we can process data much more efficiently.

- Total expenses fell to no more than 25% of what they had been.

Our design centered on ClickHouse, with Snowflake serving as a backup for the 1% of data processes that ClickHouse couldn’t handle. This setup enabled seamless data exchange between ClickHouse and Snowflake.

Jerry’s data architecture

ClickHouse Deployment and Challenges

We initially maintained a stand-alone deployment for several reasons:

- Performance: Stand-alone deployments avoid the overhead of clusters and perform well given equivalent computing resources.

- Maintenance costs: Stand-alone deployments have the lowest maintenance costs. This covers not only integration maintenance but also the costs of maintaining application data settings and application-layer exposure.

- Hardware capabilities: Current hardware can support large-scale stand-alone ClickHouse deployments. For example, we can now get EC2 instances on AWS with 24 TB of memory and 488 vCPUs, which surpasses many deployed ClickHouse clusters in scale. These instances also offer the disk bandwidth to meet our planned capacity.

Therefore, considering memory, CPU, and storage bandwidth, stand-alone ClickHouse is an acceptable solution that will remain effective for the foreseeable future.

However, a stand-alone ClickHouse deployment has some inherent issues:

- Hardware failures can cause long downtime for ClickHouse, which threatens the application’s stability and continuity.

- ClickHouse data migration and backup are still difficult tasks that require a reliable solution.

After we deployed ClickHouse, we ran into the following problems:

- Scaling and maintaining storage: Keeping disk utilization at an appropriate level became difficult because of the rapid growth of data.

- Disk failures: ClickHouse is designed to use hardware resources aggressively to deliver the best query performance. As a result, read and write operations are frequent and often push disks to their bandwidth limits, which increases the risk of disk hardware failures. When such failures occur, recovery can take anywhere from several hours to more than ten hours, depending on the data volume. We’ve heard that other users have had similar experiences. Although data analysis systems are generally considered replicas of other systems’ data, the impact of these failures is still significant. Therefore, we need to be prepared for hardware failures at any time. Data migration, backup, and recovery are extremely difficult operations that take considerable time and effort to complete successfully.

Our Solution

We selected JuiceFS to address our pain points for the following reasons:

- JuiceFS was the only available POSIX file system that could run on object storage.

- Unlimited capacity: We haven’t had to worry about storage capacity since we started using it.

- Significant cost savings: Our expenses are significantly lower with JuiceFS than with other solutions.

- Strong snapshot capability: JuiceFS effectively implements Git’s branching mechanism at the file system level. When two different concepts merge this seamlessly, they often produce highly creative solutions, and previously difficult problems become much easier to solve.

Building a Primary-Replica Architecture for ClickHouse

We came up with the idea of migrating ClickHouse to a shared storage environment based on JuiceFS. The article Exploring Storage and Computing Separation for ClickHouse gave us some insights.

To validate this approach, we conducted a series of tests. The results showed that with caching enabled, JuiceFS read performance was close to that of local disks, similar to the test results in that article.

Although write performance dropped to 10% to 50% of local disk write speed, this was acceptable for us.

The tuning adjustments we made when mounting JuiceFS are as follows:

- To write asynchronously and prevent possible blocking problems, we enabled the `writeback` feature.

- In the cache settings, we set `attrcacheto` to 3,600.0 seconds and `cache-size` to 2,300,000. We also enabled the `metacache` feature.

- Because I/O can take longer on JuiceFS than on local drives, we enabled the `block-interrupt` feature.
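As a rough illustration, the settings above can be collected into a single `juicefs mount` invocation. The sketch below only assembles the argument list. It assumes the JuiceFS Cloud Service flag spellings `--writeback`, `--attrcacheto`, `--cache-size`, and `--metacache`, and the volume name and mount point are hypothetical. The `block-interrupt` setting is left out because its exact flag isn’t given here; check `juicefs mount --help` for your edition before relying on any of these.

```python
"""Assemble a `juicefs mount` command reflecting the tuning described above.

Assumptions: flag spellings follow JuiceFS Cloud Service mount options, and the
volume name and mount point passed in are hypothetical placeholders.
"""
import shlex
from typing import List

MIB_PER_TIB = 1024 * 1024


def build_mount_command(volume: str, mountpoint: str,
                        attr_cache_seconds: float = 3600.0,
                        cache_size_mib: int = 2_300_000) -> List[str]:
    """Return an argv list for mounting JuiceFS with writeback and metadata caching."""
    return [
        "juicefs", "mount", volume, mountpoint,
        "--writeback",                            # upload to object storage asynchronously
        f"--attrcacheto={attr_cache_seconds:g}",  # file attribute cache timeout, seconds
        f"--cache-size={cache_size_mib}",         # local cache capacity, MiB
        "--metacache",                            # cache metadata in client memory
    ]


if __name__ == "__main__":
    cmd = build_mount_command("clickhouse-data", "/jfs")  # hypothetical names
    print(shlex.join(cmd))
    print(f"cache size: {2_300_000 / MIB_PER_TIB:.2f} TiB")
```

If `--cache-size` is in MiB, as JuiceFS documents it, 2,300,000 corresponds to roughly 2.19 TiB of local cache.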

Increasing the cache hit rate was our optimization goal. Using JuiceFS Cloud Service, we raised the cache hit rate to 95%. If we need further improvement, we’ll consider adding more disk resources.

The combination of ClickHouse and JuiceFS significantly reduced our operational workload. We no longer need to expand disk space frequently; instead, we focus on monitoring cache hit rates, which greatly reduces the urgency of disk expansion. Moreover, data migration is no longer necessary when hardware fails, which considerably lowers potential risks and losses.
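Monitoring the cache hit rate can be as simple as comparing two samples of cumulative hit and miss counters, such as the block-cache counters a JuiceFS client can expose to Prometheus. The helper below is a hypothetical sketch, not part of any JuiceFS tooling; the counter source is an assumption, and the 95% threshold is the target mentioned above.

```python
"""Compute a cache hit rate from two samples of cumulative hit/miss counters.

Hypothetical helper: counter sources and names are assumptions, and the 95%
target is the goal mentioned in the text. Counter resets are not handled.
"""
from dataclasses import dataclass

TARGET_HIT_RATE = 0.95


@dataclass(frozen=True)
class CacheSample:
    hits: int    # cumulative cache hits since the client started
    misses: int  # cumulative cache misses since the client started


def hit_rate(prev: CacheSample, curr: CacheSample) -> float:
    """Hit rate over the interval between two cumulative samples."""
    hits = curr.hits - prev.hits
    misses = curr.misses - prev.misses
    total = hits + misses
    if total <= 0:
        return 1.0  # no reads in the interval, so nothing was missed
    return hits / total


def below_target(prev: CacheSample, curr: CacheSample,
                 target: float = TARGET_HIT_RATE) -> bool:
    """True when the interval's hit rate falls below the target."""
    return hit_rate(prev, curr) < target


if __name__ == "__main__":
    before = CacheSample(hits=10_000, misses=500)
    after = CacheSample(hits=19_000, misses=1_000)
    print(f"hit rate {hit_rate(before, after):.3f}, alert: {below_target(before, after)}")
```

Using deltas rather than lifetime totals keeps a long history of good hits from masking a recent drop.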

We benefited greatly from the easy data backup and recovery options that the JuiceFS snapshot capability offers. With snapshots, we can view the original state of the data and restore database services from that point at any later time. This approach solves at the file system level problems that were previously handled at the application level. In addition, the snapshot feature is very fast and economical, since only one copy of the data is stored. Users of JuiceFS Community Edition can use the clone feature to achieve similar functionality.
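Why a snapshot costs almost nothing in storage is easiest to see with a copy-on-write toy model: taking a snapshot copies only the mapping from files to data blocks, and later writes go to new blocks, so both versions share everything that hasn’t changed. The classes below are hypothetical and illustrate the idea only; they are not how JuiceFS actually lays out data or metadata.

```python
"""Toy model of copy-on-write snapshots: metadata is copied, data blocks are shared.

Illustrative only; this is not how JuiceFS actually lays out data or metadata.
"""
from itertools import count
from typing import Dict, List, Optional


class BlockStore:
    """Immutable data blocks addressed by integer id (think: objects in S3)."""

    def __init__(self) -> None:
        self.blocks: Dict[int, bytes] = {}
        self._ids = count()

    def put(self, data: bytes) -> int:
        block_id = next(self._ids)
        self.blocks[block_id] = data
        return block_id


class Tree:
    """A directory tree: file paths mapped to ordered lists of block ids."""

    def __init__(self, store: BlockStore,
                 files: Optional[Dict[str, List[int]]] = None) -> None:
        self.store = store
        self.files: Dict[str, List[int]] = dict(files or {})

    def write(self, path: str, chunks: List[bytes]) -> None:
        # New data always lands in new blocks; existing blocks are never changed
        # (and this toy never garbage-collects unreferenced blocks).
        self.files[path] = [self.store.put(c) for c in chunks]

    def read(self, path: str) -> bytes:
        return b"".join(self.store.blocks[b] for b in self.files[path])

    def snapshot(self) -> "Tree":
        # Copy only the path -> block-id mapping; both trees share the blocks.
        return Tree(self.store, {p: list(ids) for p, ids in self.files.items()})


if __name__ == "__main__":
    store = BlockStore()
    primary = Tree(store)
    primary.write("table/part_1.bin", [b"aaa", b"bbb"])
    replica = primary.snapshot()                 # no data blocks copied
    primary.write("table/part_1.bin", [b"ccc"])  # primary moves on
    print(replica.read("table/part_1.bin"), primary.read("table/part_1.bin"),
          len(store.blocks))
```

The replica keeps reading `b"aaabbb"` while the primary sees `b"ccc"`, and the store holds three blocks in total rather than a full second copy.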

Furthermore, because no data migration is needed, downtime was dramatically reduced. We can quickly respond to failures or let automated systems mount the directories on another server, ensuring service continuity. It’s worth mentioning that ClickHouse starts up in only a few minutes, which further speeds up system recovery.

In addition, our read performance remained stable after the migration; no one in the company noticed a difference, which demonstrated the performance stability of this solution.

Finally, our costs decreased significantly.

Why We Set Up a Primary-Replica Architecture

After migrating to ClickHouse, we encountered several issues that led us to consider building a primary-replica architecture:

- Resource contention caused performance degradation. In our setup, all tasks ran on the same ClickHouse instance. This led to frequent conflicts between extract, transform, and load (ETL) tasks and reporting tasks, which hurt overall performance.

- Hardware failures caused downtime. Our company needed access to data at all times, so long downtime was unacceptable. This led us to a primary-replica architecture.

JuiceFS supports mounting the same file system at multiple mount points in different locations. We tried mounting the JuiceFS file system elsewhere and running another ClickHouse instance on the same data. During the implementation, however, we encountered some issues:

- ClickHouse uses a file lock to ensure that only one instance can run on a given data directory, which presented a challenge. Fortunately, this issue was easy to solve by modifying the ClickHouse source code to handle the locking.

- Even during read-only operations, ClickHouse retained some state information, such as write-time caches.

- Metadata synchronization was also a problem. When multiple ClickHouse instances ran on JuiceFS, some data written by one instance might not be recognized by the others. Fixing this required restarting instances.
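The file-lock issue can be reproduced in miniature. ClickHouse is understood to take an exclusive lock on a `status` file in its data directory at startup; treat that detail as an assumption. The sketch below uses `flock(2)` on a hypothetical status file so that a second `try_lock` on the same path fails immediately, which is why two servers can’t share one directory without a code change. It is not ClickHouse’s implementation.

```python
"""Show how an exclusive, non-blocking file lock keeps a second server out.

Illustrative only: ClickHouse's own locking code may differ in detail.
POSIX-only, since the fcntl module is unavailable on Windows.
"""
import fcntl
import os
import tempfile
from typing import Optional, TextIO


def try_lock(path: str) -> Optional[TextIO]:
    """Open `path` and take an exclusive lock; return None if someone holds it."""
    f = open(path, "a")
    try:
        fcntl.flock(f, fcntl.LOCK_EX | fcntl.LOCK_NB)  # fail fast instead of waiting
        return f                                       # keep open to keep the lock
    except BlockingIOError:
        f.close()
        return None


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as data_dir:
        status = os.path.join(data_dir, "status")
        first = try_lock(status)    # e.g. the primary ClickHouse server
        second = try_lock(status)   # a second server on the same directory
        print(first is not None, second is None)  # True True
        first.close()               # closing the file releases the lock
```

Each `open()` creates a separate open file description, so the second lock attempt conflicts even within one process, just as it would between two servers.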

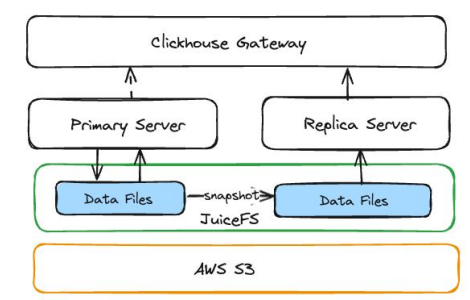

So we used JuiceFS snapshots to set up a primary-replica architecture. It works like a regular primary-backup system: the primary instance handles all data updates, including synchronization and ETL operations, while the replica instance focuses on serving queries.

ClickHouse primary-replica architecture
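With the two roles separated, clients need some way to send each statement to the right instance. The sketch below is a minimal, hypothetical router: it classifies statements by their leading keyword only, and both endpoint addresses are placeholders rather than anything from our setup.

```python
"""Send read-only statements to the replica and everything else to the primary.

A minimal sketch: classification looks only at the leading keyword, and both
endpoint addresses are hypothetical placeholders.
"""
PRIMARY = "clickhouse-primary:9000"   # handles syncs, ETL, and other writes
REPLICA = "clickhouse-replica:9000"   # serves reporting queries

READ_ONLY_KEYWORDS = frozenset({"SELECT", "WITH", "SHOW", "DESCRIBE", "DESC", "EXPLAIN"})


def route(sql: str) -> str:
    """Return the endpoint that should execute `sql`."""
    words = sql.split(None, 1)
    first_word = words[0].upper() if words else ""
    return REPLICA if first_word in READ_ONLY_KEYWORDS else PRIMARY


if __name__ == "__main__":
    print(route("SELECT count() FROM events"))         # -> clickhouse-replica:9000
    print(route("INSERT INTO events SELECT * FROM s"))  # -> clickhouse-primary:9000
```

A leading-keyword check misses statements that begin with a comment or a parenthesis; in practice, routing by workload (ETL jobs to the primary, dashboards to the replica) is often simpler than parsing SQL.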

How We Created a Replica Instance for ClickHouse

1. Making a Snapshot

We used the JuiceFS snapshot command to create a snapshot directory from the ClickHouse data directory on the primary instance, and then deployed a ClickHouse service on that directory.
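A small wrapper can make this step repeatable. The sketch assumes `juicefs snapshot SRC DST` is the JuiceFS Cloud Service form of the command and `juicefs clone SRC DST` its Community Edition counterpart; the paths and the timestamped naming scheme are hypothetical.

```python
"""Build (and optionally run) the command that snapshots the ClickHouse data directory.

Assumptions: `juicefs snapshot SRC DST` is the JuiceFS Cloud Service form and
`juicefs clone SRC DST` its Community Edition counterpart; all paths are
hypothetical, and source and destination must be on the same JuiceFS volume.
"""
import subprocess
from datetime import datetime, timezone
from typing import List


def snapshot_dir(base: str, when: datetime) -> str:
    """Name each snapshot directory after its UTC creation time."""
    return f"{base}/replica-{when.astimezone(timezone.utc):%Y%m%dT%H%M%SZ}"


def snapshot_command(src: str, dst: str, community_edition: bool = False) -> List[str]:
    subcommand = "clone" if community_edition else "snapshot"
    return ["juicefs", subcommand, src, dst]


def create_snapshot(src: str, base: str, community_edition: bool = False) -> str:
    """Run the snapshot command and return the new directory's path."""
    dst = snapshot_dir(base, datetime.now(timezone.utc))
    subprocess.run(snapshot_command(src, dst, community_edition), check=True)
    return dst
```

For example, `create_snapshot("/jfs/clickhouse", "/jfs/snapshots")` would produce a directory such as `/jfs/snapshots/replica-20240102T030405Z`, which a second ClickHouse server can then use as its data path.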

2. Pausing Kafka Consumer Queues

Before starting the ClickHouse instance, we must stop it from consuming stateful content from other data sources. For us, this meant pausing the Kafka message queue so that the replica doesn’t compete with the primary instance for Kafka data.
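Because this has to happen before the replica starts, one option is to inspect the snapshot’s table definitions on disk. The sketch below assumes ClickHouse’s usual layout, where each table’s definition is stored as `metadata/<database>/<table>.sql`, and simply lists the tables declared with the Kafka engine. The text doesn’t say how the consumers were actually paused, so what to do with these tables (for example, detaching them) is left to the operator.

```python
"""List Kafka engine tables defined in a (snapshot of a) ClickHouse data directory.

Assumption: table definitions live in <data_dir>/metadata/<database>/<table>.sql,
ClickHouse's usual on-disk layout. This only finds the consumers; how to pause
them is left to the operator.
"""
import re
from pathlib import Path
from typing import List, Tuple

KAFKA_ENGINE = re.compile(r"\bENGINE\s*=\s*Kafka\b")


def kafka_tables(data_dir: str) -> List[Tuple[str, str]]:
    """Return sorted (database, table) pairs whose metadata declares ENGINE = Kafka."""
    found = []
    for sql_file in Path(data_dir, "metadata").glob("*/*.sql"):
        if KAFKA_ENGINE.search(sql_file.read_text(encoding="utf-8")):
            found.append((sql_file.parent.name, sql_file.stem))
    return sorted(found)
```

Running it against the snapshot directory rather than the primary’s live data directory keeps the primary untouched.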

3. Running ClickHouse on the Snapshot Directory

After starting the ClickHouse service, we injected some metadata that tells users when this ClickHouse instance was created.
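One hypothetical way to inject that metadata is a small table written once on each fresh replica. In the sketch below, the `replica_meta.info` database, table, and column names are illustrative assumptions; the text only says that creation-time metadata is made available to users.

```python
"""Generate SQL that records when a replica's snapshot was taken.

The `replica_meta.info` database and table are hypothetical names; the text
only says that creation-time metadata is injected after startup.
"""
from datetime import datetime, timezone
from typing import List


def sql_string(value: str) -> str:
    """Quote a string literal for ClickHouse SQL (backslash-escaped)."""
    return "'" + value.replace("\\", "\\\\").replace("'", "\\'") + "'"


def replica_info_sql(snapshot_time: datetime, replica_name: str) -> List[str]:
    """Statements to run once on a freshly started replica."""
    ts = snapshot_time.astimezone(timezone.utc).strftime("%Y-%m-%d %H:%M:%S")
    return [
        "CREATE DATABASE IF NOT EXISTS replica_meta",
        "CREATE TABLE IF NOT EXISTS replica_meta.info "
        "(replica String, snapshot_time DateTime('UTC')) "
        "ENGINE = MergeTree ORDER BY snapshot_time",
        f"INSERT INTO replica_meta.info VALUES ({sql_string(replica_name)}, '{ts}')",
    ]


if __name__ == "__main__":
    for stmt in replica_info_sql(datetime.now(timezone.utc), "replica-a"):
        print(stmt + ";")
```

Users could then check how fresh a replica is with `SELECT snapshot_time FROM replica_meta.info`.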

4. Deleting ClickHouse Data Mutations

On the replica instance, we deleted all data mutations to improve system performance.
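The text doesn’t say exactly how the mutations were removed. One possible approach uses ClickHouse’s documented `KILL MUTATION` statement, which selects rows from `system.mutations` with a `WHERE` clause; adding `TEST` only lists the matches. The sketch builds that statement and runs it through `clickhouse-client`, which is assumed to be installed on the replica host; database names are placeholders.

```python
"""Build a ClickHouse statement that cancels unfinished mutations on a replica.

One possible approach using the documented KILL MUTATION statement; the text
does not say how mutations were removed. Database names are placeholders.
"""
import subprocess
from typing import Optional, Sequence


def quote(value: str) -> str:
    """Quote a string literal for ClickHouse SQL."""
    return "'" + value.replace("\\", "\\\\").replace("'", "\\'") + "'"


def kill_mutations_sql(databases: Optional[Sequence[str]] = None,
                       dry_run: bool = False) -> str:
    """KILL MUTATION filters rows of system.mutations; TEST only lists matches."""
    where = "is_done = 0"
    if databases:
        where += " AND database IN (" + ", ".join(quote(d) for d in databases) + ")"
    return f"KILL MUTATION WHERE {where}" + (" TEST" if dry_run else "")


def run_on_replica(sql: str, host: str = "localhost") -> None:
    """Execute via clickhouse-client (assumed to be installed on the replica host)."""
    subprocess.run(["clickhouse-client", "--host", host, "--query", sql], check=True)
```

Running the `dry_run=True` variant first shows which mutations would be cancelled before anything is changed.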

5. Performing Continuous Replication

Snapshots only preserve the state at the moment they were created. To ensure that queries read the latest data, we periodically replace the existing replica instance with a new one. This method is simple and efficient because each replica instance starts with two copies and a pointer to one of them. Even though creating a replica can take ten minutes or more, we usually run the replacement every hour, which suits our needs.
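One way to read "two copies and a pointer" is a blue/green scheme: keep two replica slots, rebuild the idle one, then atomically repoint. The sketch below assumes the pointer is a symlink that a proxy or the clients resolve, and the slot paths are hypothetical. `build_replica` stands in for the steps above (snapshot, pause consumers, start, clean up mutations) and is responsible for tearing down whatever previously occupied the slot.

```python
"""Rotate between two replica slots and flip a pointer once the new one is ready.

A minimal sketch of one reading of "two copies and a pointer", assuming the
pointer is a symlink resolved by clients or a proxy; slot paths are hypothetical.
"""
import os
from typing import Callable, Tuple


def inactive_slot(pointer: str, slots: Tuple[str, str]) -> str:
    """Return the slot the pointer does not currently reference."""
    current = os.readlink(pointer) if os.path.islink(pointer) else None
    return slots[1] if current == slots[0] else slots[0]


def refresh(pointer: str, slots: Tuple[str, str],
            build_replica: Callable[[str], None]) -> str:
    """Build a fresh replica in the idle slot, then atomically repoint to it."""
    target = inactive_slot(pointer, slots)
    build_replica(target)            # must also stop/discard the slot's old replica
    tmp = pointer + ".tmp"
    if os.path.lexists(tmp):
        os.remove(tmp)               # leftover from an interrupted refresh
    os.symlink(target, tmp)
    os.replace(tmp, pointer)         # rename(2) swaps the link atomically on POSIX
    return target
```

Because the old replica keeps serving until the pointer flips, a refresh that takes ten minutes or more doesn’t interrupt queries; scheduling `refresh` hourly matches the cadence described above.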

Our ClickHouse primary-replica architecture has been running stably for over a year. It has completed more than 20,000 replication operations without failure, demonstrating high reliability. Workload isolation and the stability of data replicas were key to improving performance: we increased overall report availability from less than 95% to 99% without any application-layer optimizations. In addition, this architecture supports elastic scaling, which greatly improves flexibility and lets us develop and deploy new ClickHouse services as needed without complex operations.

What’s Next

Our plans for the future:

- We’ll develop an optimized management interface to automate instance lifecycle management, creation operations, and cache management.

- We also plan to optimize write performance. At the application layer, given the robust support for the open Parquet format, we can write most data loads directly into the storage system outside ClickHouse for easier access. This lets us use conventional methods to achieve parallel writes, thereby improving write performance.

- We noticed a new project, chDB, which lets users embed ClickHouse functionality directly in a Python environment without requiring a ClickHouse server. By combining chDB with our current storage solution, we could achieve a fully serverless ClickHouse. This is a direction we’re currently exploring.